Smart appliances and devices

Modern living without the modern hassles. Find out how to build out your smart home with the best smart devices and appliances.

All the latest

Smart appliances and devices news

The best robot pool cleaners of 2026: Top picks for all budgets and pool sizes

Edgar Cervantes · 21 hours ago

Roborock's Qrevo Curv 2 Flow is ready to mop up the competition — and your filthy floors

Stephen Schenck · 22 hours ago

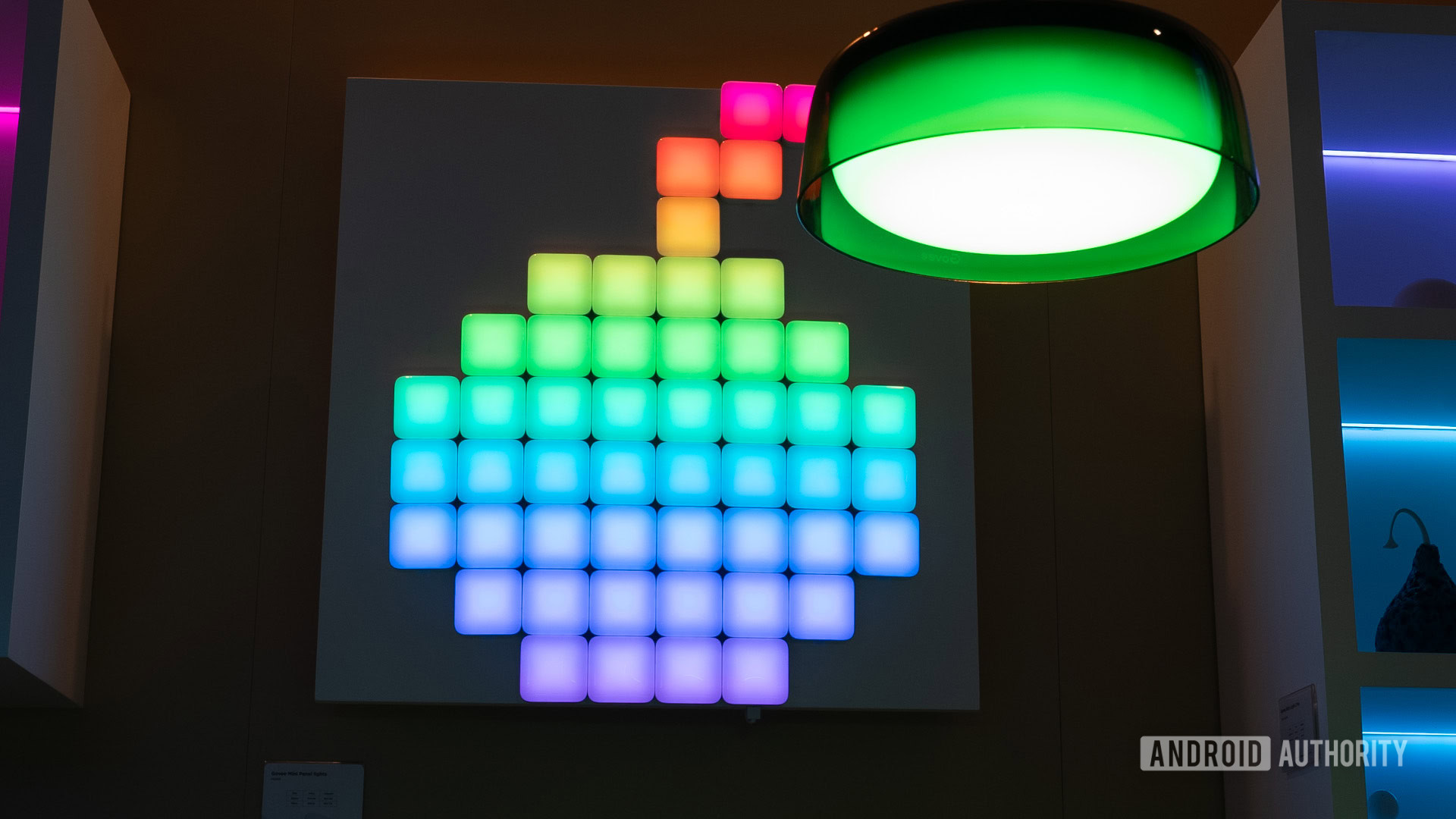

Save 20% on Govee Mini Panel Lights right now in Amazon Choice deal

Matt Horne · May 28, 2026

Score a 37% discount on a pair of high-end Arlo 2K security cameras!

Matt Horne · May 26, 2026

LIFX Smart Light Bulbs at a record 36% off are a solid pick

Matt Horne · May 19, 2026

Beatbot Anniversary Sale - These are the best pool cleaners to buy right now

Brought to you by Beatbot

Deal: Save 25% on the Arlo Essential XL Security Camera

Matt Horne · May 13, 2026

DREAME Z1 Pro Robotic Pool Cleaner is now cheaper than Black Friday

Matt Horne · May 7, 2026

Dreame NEXT 2026: Everything you need to know about the A3 AWD Pro and L60 series

Brought to you by Dreame

The WYBOT S3 is the world’s first robot pool cleaner that cleans, docks, and empties itself

Brought to you by WYBOT

Walmart's Onn just launched a $35 Google Home camera, and it looks like a steal!

Adamya Sharma · May 28, 2026

Google Home is supercharging what your smart cameras can do

Taylor Kerns · May 27, 2026

Amazon reveals Android 16-based Fire OS 16 is coming to Fire TV devices

Ryan McNeal · May 26, 2026

Google wants Gemini in every home, so it's giving away the blueprints

Tushar Mehta · May 21, 2026

Homey Pro is getting a big price hike next month, and RAM costs are to blame

Adamya Sharma · May 18, 2026

Having trouble with Google Nest services? You're not alone (Update: Back online)

Ryan McNeal · May 15, 2026

Homey just turned your Android TV into a full smart home dashboard

Jay Bonggolto · May 14, 2026

Ring's new Spotlight and Floodlight cameras level up with 2K resolution

Ryan McNeal · May 13, 2026

Google Home's RGB lighting controls should be back to normal now

Taylor Kerns · May 6, 2026

Google Home's getting ready for summer with camera upgrades, new automations, and much more

Stephen Schenck · May 5, 2026