
What is Dual Pixel Autofocus and how does it compare to PDAF?

Dual Pixel autofocus is an increasingly popular smartphone camera feature, particularly at the flagship end of the market. The technology promises much faster focusing for action shots and better focusing in low-light environments. But how does it work?

Dual Pixel autofocus is an extension of Phase Detection autofocus (PDAF), which has featured in smartphone cameras for years. In essence, PDAF uses dedicated left-looking and right-looking pixels on the image sensor to work out whether the image is in focus.

As technology advances, so does PDAF. Newer smartphones increasingly use All Pixel autofocus, multi-directional PDAF, and laser autofocus, among other techniques. This post focuses on Dual Pixel, but bear in mind that some newer approaches go further.


What is it, and how does it work?

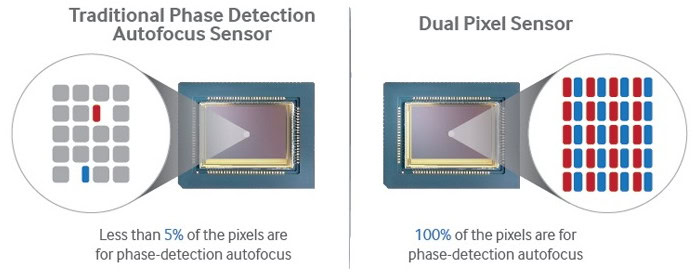

PDAF is the precursor to Dual Pixel autofocus, so it’s essential to understand how it works first. PDAF relies on the slightly different images produced by partially masked “left-looking” and “right-looking” photodiodes embedded among the image sensor’s pixels. The phase difference between these two images tells the camera how far, and in which direction, to move the lens to reach focus. These phase-detection pixels typically make up around 5-10% of the image sensor’s pixels. Using more dedicated phase-detection pixel pairs makes PDAF more reliable and accurate.
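To make the phase-difference idea concrete, here is a minimal Python sketch. It assumes the left-looking and right-looking pixels each yield a one-dimensional brightness profile across an edge, finds the shift that best lines the two profiles up using a simple sum-of-absolute-differences search (one common way to do this, not necessarily what any particular phone uses), and converts that shift into a lens move through a hypothetical calibration constant `gain`. The function names are illustrative.

```python
# Minimal sketch of phase-detection focusing, assuming 1D brightness
# profiles from the left-looking and right-looking PD pixels.

def phase_difference(left, right, max_shift):
    """Return the shift (in pixels) that best aligns `right` with `left`.

    Compares left[i] with right[i + shift] and scores each candidate shift
    by the mean absolute difference over the overlapping samples.
    """
    best_shift, best_cost = 0, float("inf")
    n = len(left)
    for shift in range(-max_shift, max_shift + 1):
        cost, count = 0.0, 0
        for i in range(n):
            j = i + shift
            if 0 <= j < n:
                cost += abs(left[i] - right[j])
                count += 1
        # Keep the first shift with the lowest mean cost.
        if count and cost / count < best_cost:
            best_shift, best_cost = shift, cost / count
    return best_shift


def lens_adjustment(shift, gain):
    """Convert a phase difference into a lens move.

    `gain` is a hypothetical per-module calibration constant. Zero shift
    means the subject is already in focus; the sign gives the direction.
    """
    return shift * gain


edge = [0, 0, 0, 0, 10, 20, 30, 20, 10, 0, 0, 0, 0, 0]
print(phase_difference(edge, edge, 4))              # in focus
print(phase_difference(edge, [0, 0] + edge[:-2], 4))  # defocused one way
print(phase_difference(edge, edge[2:] + [0, 0], 4))   # defocused the other way
```

A shift of zero means both sets of pixels see the same image, so the subject is in focus; the sign of a non-zero shift tells the camera which way to drive the lens, and its size tells it roughly how far.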

Dual Pixel AF takes this further: every pixel on the sensor is used for phase detection and contributes to calculating phase differences and focus. This improves accuracy and speed compared to standard PDAF. Each pixel is split into two photodiodes, one left-looking and one right-looking, which is made possible by a micro-lens placed on top of each pixel. When taking a photo, the processor first analyses the focus data from each photodiode, then combines the two signals to record the full pixel used in the final image.
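The sketch below illustrates the two roles each Dual Pixel plays, assuming (purely for illustration) that a sensor row is stored as a list of `(left, right)` photodiode pairs; real sensors use their own readout formats. For focusing, the halves are split into two sub-images, so every pixel contributes a phase sample. For the photo itself, the two halves are summed back into one value.

```python
# Minimal sketch of a Dual Pixel readout. The (left, right) pair layout
# is a hypothetical format chosen for clarity, not a real sensor's data.

def split_dual_pixels(row):
    """Separate one sensor row into left-looking and right-looking sub-images.

    Every pixel contributes to both sub-images, so the whole row can be
    used for phase detection.
    """
    left = [l for l, _ in row]
    right = [r for _, r in row]
    return left, right


def combine_dual_pixels(row):
    """Sum both photodiodes to get the full pixel used in the final photo."""
    return [l + r for l, r in row]


row = [(1, 2), (3, 4)]
print(split_dual_pixels(row))
print(combine_dual_pixels(row))
```

For example, `split_dual_pixels([(1, 2), (3, 4)])` returns `([1, 3], [2, 4])`, and `combine_dual_pixels([(1, 2), (3, 4)])` returns `[3, 7]`.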

The diagram above from Samsung’s image sensor team showcases the differences between traditional PDAF and Dual Pixel autofocus technology. The only real drawback is that implementing these tiny phase-detection photodiodes and micro-lenses isn’t easy or cheap, which becomes an important consideration in very high-resolution sensors.

The 108MP sensor inside the Galaxy S22 Ultra, for example, doesn’t use Dual Pixel technology, while the lower-resolution 50MP cameras in the Galaxy S22 and Galaxy S22 Plus do. The Ultra’s main camera autofocus is worse as a result, although the phone’s secondary cameras have Dual Pixel autofocus.

How Dual Pixel autofocus improves on PDAF

Despite the shared fundamentals, Dual Pixel technology focuses much faster than basic PDAF and is better at keeping fast-moving subjects in focus. This is particularly useful for capturing the perfect action shot, or simply for pulling up your camera quickly and trusting that the shot will be in focus. The HUAWEI P40, for instance, boasts millisecond focusing times using this technology.

One of PDAF’s most significant shortcomings is low-light performance. Each phase-detection photodiode uses only half a pixel, so it collects less light, and in dim conditions noise makes it difficult to obtain accurate phase information. Dual Pixel improves this by taking far more readings across the whole sensor, which smooths out noise and keeps AF fast even in quite dark environments. There are limits, but this is arguably Dual Pixel autofocus’s greatest enhancement.
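A rough way to see why more readings help: if each phase reading carries independent noise, averaging n of them shrinks the noise by a factor of √n. The sketch below applies that idealised assumption (real sensor noise is not perfectly independent, and per-reading noise is assumed equal for PDAF and Dual Pixel photodiodes) to the 5-10% figure quoted earlier, comparing sparse phase-detection pixels with every pixel contributing over the same area. The function names are illustrative.

```python
import math

# Back-of-the-envelope sketch. Assumes independent, equal-sized noise on
# every phase reading, which real sensors only approximate.

def averaged_noise(sigma, n_readings):
    """Standard deviation of the mean of n independent noisy readings."""
    return sigma / math.sqrt(n_readings)


def dual_pixel_noise_advantage(pd_fraction):
    """Noise reduction when every pixel contributes a phase reading,
    compared with only a fraction `pd_fraction` of pixels (e.g. 0.05).

    Over the same area, Dual Pixel has 1 / pd_fraction times as many
    readings, so the averaged noise falls by sqrt(1 / pd_fraction).
    """
    return math.sqrt(1 / pd_fraction)


print(dual_pixel_noise_advantage(0.05))
print(dual_pixel_noise_advantage(0.10))
```

With 5% phase-detection pixels, `dual_pixel_noise_advantage(0.05)` is √20, roughly 4.5x less noise in the averaged phase estimate; at 10% it is √10, roughly 3.2x.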

If you’re a serious mobile photographer, a camera with Dual Pixel autofocus helps you capture sharp shots more consistently. It’s certainly a feature to look out for if you want the best photos from your smartphone.

FAQs

Dual Pixel autofocus was a huge step forward for autofocus speed, achieving focus in as little as a few milliseconds. There is now more accurate technology, such as multi-directional autofocus, but Dual Pixel autofocus is still very fast.

PDAF stands for Phase Detection Autofocus. It’s an autofocus method that detects where light rays passing through the lens meet on the sensor. For a region to be in focus, the light rays should converge at the same spot. By measuring how far apart they land, the camera can determine how to adjust the lens to reach focus.

Laser autofocus is usually used in tandem with other autofocus techniques. It emits a beam of light and measures the subject’s distance from the camera, making it easier to determine the lens adjustment needed to reach focus. It’s less effective at longer distances, but it is very fast and exceptionally good in low light, which is the main reason it’s still used.
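Laser autofocus modules in phones commonly work on a time-of-flight principle (an assumption here, since the article doesn’t specify the method): the sensor times how long the emitted light takes to bounce back from the subject. Because the light covers the distance twice, the subject distance is half the round-trip path, d = c·t / 2. A minimal sketch, with illustrative function names:

```python
# Minimal time-of-flight sketch, assuming the module measures the
# round-trip time of the emitted light. The light travels to the subject
# and back, so the one-way distance is half the round-trip path.

SPEED_OF_LIGHT_M_PER_S = 299_792_458


def distance_from_round_trip(seconds):
    """Subject distance in metres from the measured round-trip time."""
    return SPEED_OF_LIGHT_M_PER_S * seconds / 2


def round_trip_for_distance(metres):
    """Round-trip time in seconds for a subject `metres` away."""
    return 2 * metres / SPEED_OF_LIGHT_M_PER_S


print(round_trip_for_distance(1.0))
```

A subject 1 m away returns the light after roughly 6.67 nanoseconds, which shows how precise the timing circuitry has to be.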

Many phones use Dual Pixel autofocus. Popular examples include the Pixel 6 series, the Samsung Galaxy Z Fold 4, the Samsung Galaxy S22 series, and the Sony Xperia 1 IV.

Do you want to improve your chances of taking better pictures? We have a list of the best camera phones available today. We also have a list of great photography tips that will produce immediate results, regardless of which camera you’re using.
