What is All Pixel autofocus? OPPO Find X2's camera tech, explained

The OPPO Find X2 Pro uses All Pixel Omni-Directional Phase Detection Autofocus on a custom sensor developed with Sony. But what does “All Pixel” autofocus actually mean? And how does it relate to existing Dual Pixel and Quad Pixel Phase Detect Autofocus (PDAF) systems? Let’s go into detail for each one of these technologies and see how they differ.

First: Photography terms you should understand

Focus Pixels

Focus Pixel is an Apple marketing term for the company’s baseline PDAF approach, first introduced in the iPhone 6 in 2014. A Focus Pixel is simply a PDAF pixel on an image sensor.

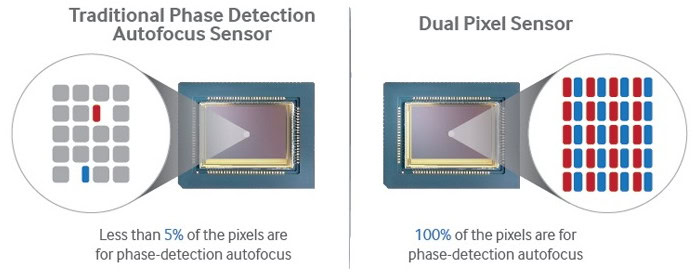

Although this approach is faster than Contrast Detect Autofocus, this generation of PDAF pixels is used only for focusing, not imaging. This means they need to be spread sparsely across the sensor’s surface. With this arrangement, focus pixels might make up as little as 5% of the sensor area, which makes autofocus slower and less reliable than on sensors where every pixel can help with focusing.

Dual Pixel Autofocus

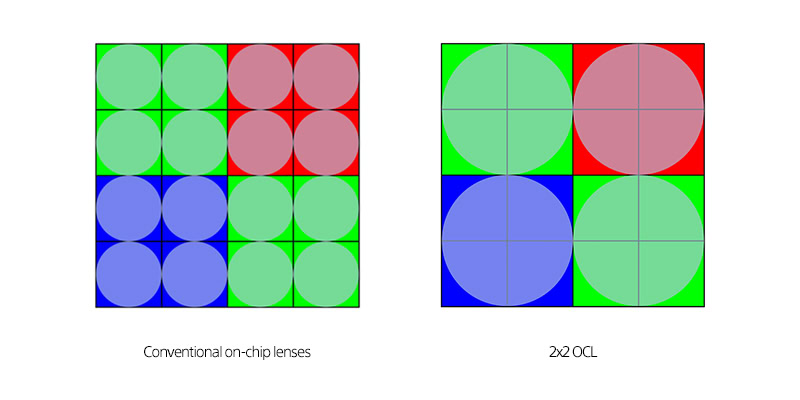

Canon first introduced Dual Pixel PDAF on cameras in 2013. Samsung was the first to use it on smartphones with the Galaxy S7 and S7 Edge back in 2016. Each Dual Pixel PDAF pixel is split into two light-sensitive photodiodes that sit side by side under the pixel’s own microlens, or “on-chip lens” (OCL).

With Dual Pixel PDAF, 100% of the pixels on an image sensor are used for both autofocus and imaging. This arrangement greatly improves the focusing performance of a smartphone sensor in terms of speed and reliability.
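To make the idea concrete, here is a minimal Python sketch of the comparison a phase detect pixel pair enables. It assumes a simplified model in which the two photodiodes see the same edge, displaced sideways when the subject is out of focus, and it searches for the displacement that best lines the two views up. The function name `estimate_shift`, the sample values, and the search range are all made up for illustration. A real sensor and its focus algorithm are far more involved.

```python
def estimate_shift(left, right, max_shift=4):
    """Return the integer shift s that best aligns right[i + s] with left[i]."""
    best_shift, best_cost = 0, float("inf")
    for s in range(-max_shift, max_shift + 1):
        # Compare only the samples that overlap for this candidate shift.
        pairs = [(left[i], right[i + s])
                 for i in range(len(left))
                 if 0 <= i + s < len(right)]
        # Mean absolute difference: 0 means the two views match exactly.
        cost = sum(abs(a - b) for a, b in pairs) / len(pairs)
        if cost < best_cost:
            best_shift, best_cost = s, cost
    return best_shift


# Hypothetical brightness profile of an edge, as seen by one row of photodiodes.
edge = [0, 0, 0, 0, 0, 10, 50, 90, 100, 100, 100, 100, 100, 100]

# In focus: both halves of each pixel see the same thing.
print(estimate_shift(edge, edge))           # 0

# Out of focus (assumed): the right-hand view is displaced by two samples.
defocus_right = [0, 0] + edge[:-2]
print(estimate_shift(edge, defocus_right))  # 2
```

A shift of zero means the two views agree, which is the in-focus case in this toy model. A non-zero shift tells the camera which way, and roughly how far, the views disagree, which is why phase detection can be quicker than hunting back and forth for peak contrast.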

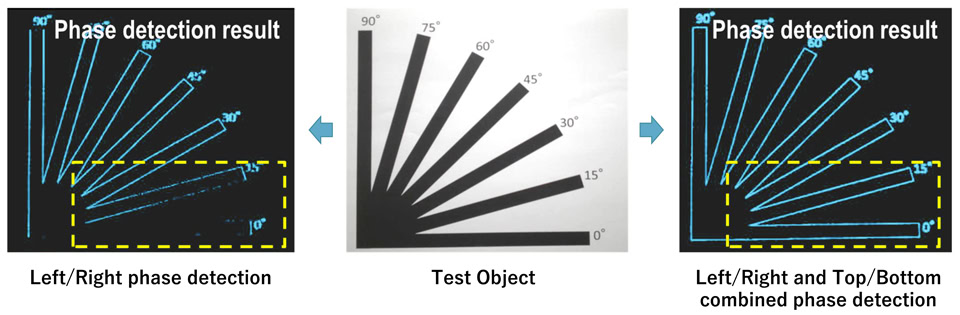

Dual Pixel PDAF is undeniably great, but it does have limitations. Because each photosite is split in only one direction, Dual Pixel PDAF can struggle to focus accurately on horizontal lines. The left/right orientation of the split makes it less sensitive to subjects that show no pattern change in the horizontal direction.

Quad Pixel Autofocus

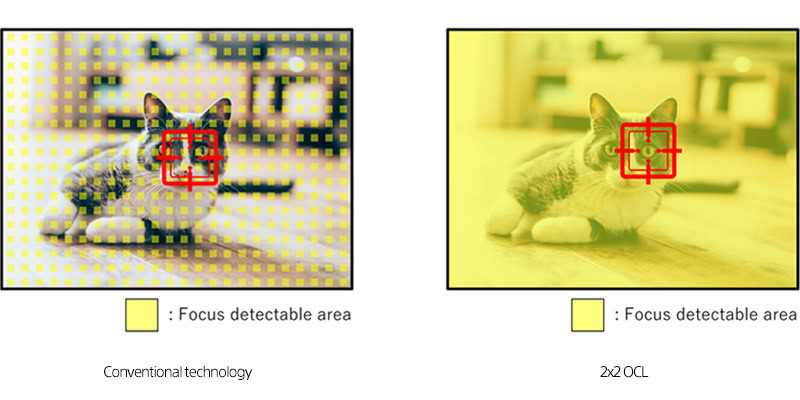

A Quad Pixel setup aims to solve that issue by splitting each pixel into four photodiodes. Each pixel in a Quad Pixel PDAF system can compare left/right as well as top/bottom pairs. This alleviates the problem with horizontal lines and makes focusing more reliable and accurate than with Dual Pixel PDAF.
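The difference between the two splits can be illustrated with a small Python sketch. It assumes a toy scene made of horizontal stripes and models defocus as a simple displacement between paired views (sideways for a left/right pair, up or down for a top/bottom pair). All names here (`shift_rows`, `shift_cols`, `matching_costs`, `is_informative`) and the scene values are hypothetical, and wrap-around shifting is used purely to keep the example short.

```python
def shift_rows(img, d):
    """Move image content down by d rows (wrapping around)."""
    n = len(img)
    return [img[(r - d) % n] for r in range(n)]


def shift_cols(img, d):
    """Move image content right by d columns (wrapping around)."""
    n = len(img[0])
    return [[row[(c - d) % n] for c in range(n)] for row in img]


def matching_costs(a, b, axis, max_shift=3):
    """Mismatch between a and b for each candidate shift along one axis."""
    shifter = shift_cols if axis == "horizontal" else shift_rows
    costs = {}
    for s in range(-max_shift, max_shift + 1):
        undone = shifter(b, -s)  # undo a candidate displacement of s
        costs[s] = sum(abs(x - y)
                       for row_a, row_b in zip(a, undone)
                       for x, y in zip(row_a, row_b))
    return costs


def is_informative(costs):
    """True if at least one candidate shift matches better than another."""
    return len(set(costs.values())) > 1


# Toy scene: one bright horizontal band, no change along the horizontal axis.
scene = [[v] * 8 for v in [0, 0, 0, 100, 100, 0, 0, 0]]
defocus = 2  # assumed displacement between paired views

# Left/right pair: the displacement is sideways, which leaves stripes unchanged.
costs_lr = matching_costs(scene, shift_cols(scene, defocus), "horizontal")
# Top/bottom pair: the displacement is vertical, which moves the band.
costs_tb = matching_costs(scene, shift_rows(scene, defocus), "vertical")

print(is_informative(costs_lr))            # False: every shift looks equally good
print(is_informative(costs_tb))            # True
print(min(costs_tb, key=costs_tb.get))     # 2
```

In this simplified model the left/right comparison cannot tell one shift from another because the stripes look identical however far they slide sideways, while the top/bottom comparison recovers the displacement. That is the gap a four-way split is meant to close.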

All Pixel Autofocus

All Pixel Omni-Directional PDAF is OPPO’s nomenclature for the autofocus afforded by Sony’s 2×2 OCL sensor. 2×2 OCL is essentially a Quad Pixel Quad Bayer setup with one condenser lens per pixel, covering all four photodiodes. Once again, 100% of an image sensor’s pixels are in use for both focusing and imaging.

The sensor in the upcoming OPPO Find X2 will be larger than normal. This is presumably to accommodate the quad pixel split without lowering sensor resolution or light-gathering capabilities. So All Pixel autofocus will not only be faster than current PDAF methods, it will also provide improved low-light focusing performance.

The Sony 2×2 OCL solution has other benefits beyond improved autofocus in all lighting situations and better autofocus irrespective of object shape and pattern. The Quad Bayer structure also gives the sensor higher sensitivity and can reduce noise in low-light images and video.
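As a rough illustration of why grouping four same-colour photodiodes can help in low light, the Python sketch below lays out a Quad Bayer mosaic and averages each 2×2 block into a single output pixel. It is a simplification under stated assumptions: the colour order (RGGB), the flat test scene, the Gaussian noise model, and the helper names `quad_bayer_color` and `bin_2x2` are all illustrative, and averaging is only one possible way to combine the four readings, not a description of Sony’s processing.

```python
import random
import statistics


def quad_bayer_color(row, col):
    """Colour filter over a photodiode in a Quad Bayer mosaic.

    Each colour of an (assumed) RGGB Bayer pattern covers a 2x2 block.
    """
    bayer = [["R", "G"], ["G", "B"]]
    return bayer[(row // 2) % 2][(col // 2) % 2]


def bin_2x2(raw):
    """Average each 2x2 same-colour block into one output pixel."""
    return [[(raw[r][c] + raw[r][c + 1] + raw[r + 1][c] + raw[r + 1][c + 1]) / 4
             for c in range(0, len(raw[0]), 2)]
            for r in range(0, len(raw), 2)]


# Print the top-left corner of the mosaic to show the 2x2 colour blocks.
for r in range(4):
    print(" ".join(quad_bayer_color(r, c) for c in range(4)))

# Simulate a dim, flat scene with random read noise (made-up numbers).
random.seed(0)
true_level, noise_sigma = 20.0, 5.0
raw = [[random.gauss(true_level, noise_sigma) for _ in range(64)]
       for _ in range(64)]
binned = bin_2x2(raw)

raw_noise = statistics.pstdev([v for row in raw for v in row])
binned_noise = statistics.pstdev([v for row in binned for v in row])
print(f"noise before binning: {raw_noise:.2f}")
print(f"noise after binning:  {binned_noise:.2f}")
```

The trade-off in this sketch is resolution: the binned image has a quarter as many pixels as the raw readout, in exchange for a steadier value at each one.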

Sony states real-time HDR output is possible through a “unique exposure control technology and signal processing function.” Sony also notes that the design and production technology of the 2×2 OCL increases the efficiency of light utilization.

Photography is a complex art, and learning about autofocus systems is just the tip of the iceberg when it comes to polishing your photo skills. We have put together a series of tutorials and learning material for you, so check it out!
