What is PDAF and how does it work? Phase Detection Autofocus explained

Autofocus technology is one of the key pillars of mobile photography, ensuring crisp, clean captures of even the fastest-moving subjects. But did you know that autofocus comes in a variety of types? Today we’ll dive into Phase Detection autofocus (PDAF), one of the most common types of autofocus.

A lot of modern smartphone cameras have Phase Detection autofocus. It’s both faster and more accurate than classic contrast detection. Contrast detection is the simplest and cheapest form of autofocus, but also the slowest and least accurate with moving subjects. So what makes PDAF so much better?

What is PDAF, and how does it work?

Like all good camera technologies, PDAF traces its roots back to traditional cameras and DSLRs. DSLR cameras use mirrors to redirect part of the light coming through the lens to a dedicated phase-detection sensor. Smartphones don’t have the space to fit all these parts in. Instead, mobile sensors have dedicated PDAF pixels built into the image sensor, an approach borrowed from compact cameras.

The simplest way to understand how PDAF works is to think about light passing through the very edges of the camera lens. When the image is in perfect focus, light from even these extremes of the lens refracts to meet at a single point on the camera sensor. If this meeting point falls in front of or behind the image sensor, the image is blurry. Adjusting the lens to move this point onto the sensor is precisely how camera focusing works.

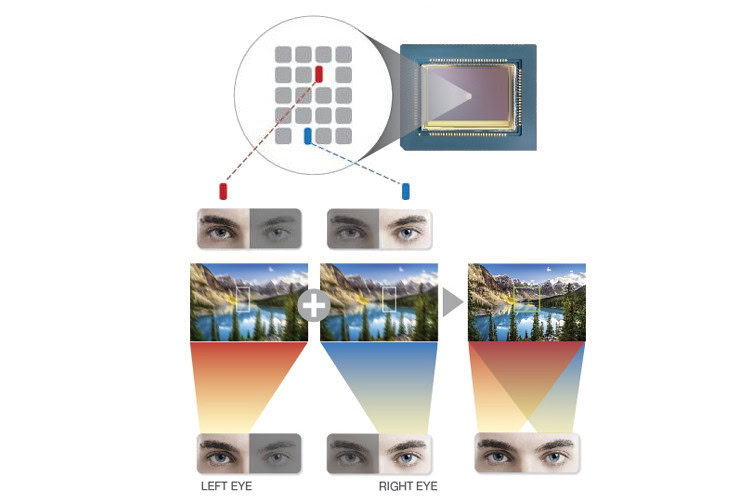
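
For readers who like a formula, the idea above can be written with the idealized thin-lens equation. This is a simplified sketch: real smartphone lenses have several elements, and the symbols $f$ (focal length), $d_o$ (subject distance), $d_i$ (distance behind the lens where the rays meet), $s$ (lens-to-sensor distance), and $\Delta$ (focus error) are introduced here for illustration only.

```latex
\frac{1}{f} = \frac{1}{d_o} + \frac{1}{d_i},
\qquad
\Delta = s - d_i
```

When $\Delta = 0$, the rays meet exactly on the sensor and the image is sharp. When $\Delta > 0$, they meet in front of the sensor, and when $\Delta < 0$, they would meet behind it. Focusing means moving the lens until $\Delta$ reaches zero.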

In other words, we can tell an image is in focus because light coming from two different points on the lens converges on a single point. DSLRs use dedicated PDAF sensors to capture two separate images for comparison. Compact cameras and smartphones don’t have this luxury. Instead, the system creates this dual perspective using dedicated phase-detecting photodiodes on the image sensor itself.

These photodiodes are physically masked so that light from only one side of the lens reaches them. This produces left-looking and right-looking pixels on a single image sensor, giving us our two images to compare. The phase difference between the two images is calculated to determine the focus point. Samsung’s diagram below offers an intuitive look at this by comparing these left/right pixels to our eyes.

If the image is out of focus, the phase difference between the two images is used to calculate how far the lens needs to move to bring it into focus. This is what makes PDAF so fast compared to contrast detection. However, with half of each pixel blocked, these photodiodes receive less light than a regular pixel. This can cause issues when focusing in low light, which is why traditional contrast detection is still often used alongside PDAF in a hybrid solution. Furthermore, because the left/right pixels sit in vertical strips, cameras can have problems focusing on horizontal lines, so better sensors use cross-focus patterns.
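
The left/right comparison described above can be sketched in a few lines of Python. This is a toy illustration, not how any particular sensor or phone implements it: the `view` function fakes the brightness profile seen by each set of masked pixels, `estimate_phase_shift` slides one profile over the other to find the best match, and the `um_per_pixel` calibration factor in `lens_move_um` is a made-up value.

```python
import math

def view(offset, n=64):
    # Hypothetical 1-D brightness profile of a small bright feature,
    # displaced by `offset` pixels as seen through one side of the lens.
    return [math.exp(-((i - n / 2 - offset) / 4.0) ** 2) for i in range(n)]

def estimate_phase_shift(left, right, max_shift=8):
    """Return the integer s that minimises the mean |left[i + s] - right[i]|."""
    best_shift, best_cost = 0, float("inf")
    n = len(left)
    for s in range(-max_shift, max_shift + 1):
        # Only compare the region where both profiles overlap.
        lo, hi = max(0, -s), min(n, n - s)
        cost = sum(abs(left[i + s] - right[i]) for i in range(lo, hi)) / (hi - lo)
        if cost < best_cost:
            best_shift, best_cost = s, cost
    return best_shift

def lens_move_um(shift, um_per_pixel=5.0):
    # Assumed linear calibration: the sign gives the direction of travel,
    # the magnitude gives the distance. Real values are device-specific.
    return shift * um_per_pixel

# Out of focus: the two views disagree by several pixels.
shift = estimate_phase_shift(view(3), view(-3))
print(shift, lens_move_um(shift))

# In focus: both views line up, so no lens movement is needed.
print(estimate_phase_shift(view(0), view(0)))
```

The key point is that a single comparison yields both the direction and the size of the correction, so the lens can move once rather than hunting back and forth.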

As you can also see, we don’t need to use every pixel on the camera to figure out the focus. Instead, several pixel strips across the sensor will do. Typically, the camera only uses 5% to 10% of sensor pixels for autofocusing. However, some of today’s high-end sensors with improved PDAF enable the use of every pixel for focusing, making them even faster and more accurate.

PDAF pros and cons

Compared to traditional contrast autofocus, phase detection autofocus is faster and more accurate. Contrast autofocus takes a long time because it has to scan through its entire range of focal points to find the sharpest focus. It’s essentially trial and error. PDAF uses the phase difference to almost immediately calculate how far the lens needs to travel to achieve focus.
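
To make the speed difference concrete, here is a small Python sketch that counts how many measurements each approach needs. Everything in it is an assumption made for illustration: the `sharpness` curve, the 100 lens positions, the best-focus position of 37, and the `steps_per_pixel` calibration are invented, and real contrast autofocus often uses smarter search strategies than a full sweep.

```python
def sharpness(lens_pos, best_pos=37):
    # Hypothetical contrast metric: highest when the lens sits at best_pos.
    return 1.0 / (1.0 + (lens_pos - best_pos) ** 2)

def contrast_af(positions):
    """Try every lens position and keep the sharpest: trial and error."""
    best, best_score, evaluations = None, -1.0, 0
    for p in positions:
        evaluations += 1
        score = sharpness(p)
        if score > best_score:
            best, best_score = p, score
    return best, evaluations

def phase_af(current_pos, measured_shift, steps_per_pixel=2):
    """Jump straight to focus from a single phase measurement."""
    return current_pos + measured_shift * steps_per_pixel, 1

# Contrast AF sweeps the whole range before it knows where focus is.
print(contrast_af(range(100)))

# PDAF starts at position 25, measures a 6-pixel shift, and moves once.
print(phase_af(25, 6))
```

Both functions land on the same lens position, but the contrast sweep needs one measurement per position while the phase-based version needs only one in total.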

However, on-sensor PDAF has a few drawbacks compared to DSLR PDAF. The nature of small smartphone sensors and even smaller pixels can make noise an issue, which is problematic in low-light situations. Even phase detection autofocus can take several attempts to obtain perfect focus in less-than-ideal conditions. Using more pairs of detectors helps speed things up. As a result, smartphones sometimes implement a hybrid approach to tackle this shortcoming.

Phase Detection autofocus is a must-have for the serious mobile photographer. Fortunately, you’ll find this technology in all high-end and even most mid-range smartphones launched in the past few years. High-end smartphone cameras now include much-improved Dual Pixel, Quad Pixel, and All Pixel autofocus. Additionally, some smartphones use laser autofocus to assist their other focusing systems.

FAQs

PDAF stands for Phase Detection Autofocus. It’s an autofocusing method that can detect where light rays travel and meet when entering a camera. In smartphones, this is done at the sensor level. In order for something to be in focus, the rays should meet at the same point. If they don’t, the system will determine how to adjust the lens to reach focus.

Phase Detection Autofocus is currently the best method for autofocusing in smartphone cameras, but it has evolved. Evolutions of the method include Dual-Pixel Autofocus, Quad-Pixel Autofocus, and All-Pixel Autofocus.

What makes laser autofocus special is that it can determine the distance between the camera and the object you’re trying to focus on. This helps the camera determine how to adjust the lens to reach focus. It is usually used in tandem with other focusing technologies to help speed up focusing times.

Most modern phones use PDAF, but you might not see it labeled as such in spec sheets. This is because modern phones usually use newer forms of PDAF, such as All-Pixel Autofocus.

Thanks to PDAF, smartphones can focus so fast that most manufacturers don’t even mention focusing speeds anymore. It usually takes less than a second, and two seconds would be considered a long time, even in low-light conditions.
