
Google's Recorder app is like magic, but here's how it works

There is no doubt that Google is at the forefront of artificial intelligence (AI) and machine learning (ML). The evidence lies in a range of Google products, from industry-leading computational photography to the suggestions that appear as we write emails. AI and ML are clearly at the core of Google’s efforts.

The Pixel 4’s Recorder app is yet another example of Google’s ML prowess. The company released the smart audio recorder alongside the Pixel 4, and it uses on-device machine learning to transcribe recordings automatically. The app arrived on older Pixel devices a couple of months later. In a blog post, Google has now detailed how the Recorder app works.

Transcribing

The app generates real-time transcriptions of audio recordings. The transcribed text is also searchable, allowing you to quickly find a specific word in a conversation without listening to the whole recording.

To do this, Google relied on improvements to its on-device speech recognition model. The model lets the Recorder app transcribe lengthy recordings, up to a few hours long. Each word is mapped to its timestamp in the recording, so tapping a word in the transcription starts audio playback from that point. This mapping is also what lets you search for a word and jump to the exact moment it was spoken.
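To make the word-to-timestamp idea concrete, here is a minimal Python sketch. It is not Google's implementation: the `TimedWord` type, the function names, and the sample transcript are all hypothetical, and it only assumes that each transcribed word carries a start offset in milliseconds.

```python
from bisect import bisect_right
from dataclasses import dataclass


@dataclass
class TimedWord:
    """Hypothetical record: one transcribed word plus its start offset."""
    text: str
    start_ms: int


def find_word(transcript, query):
    """Return the start offsets (ms) of every occurrence of the query word."""
    needle = query.lower()
    return [w.start_ms for w in transcript
            if w.text.lower().strip(".,!?") == needle]


def word_at(transcript, position_ms):
    """Return the word being spoken at a given playback position."""
    starts = [w.start_ms for w in transcript]
    index = bisect_right(starts, position_ms) - 1
    return transcript[max(index, 0)]


# Made-up sample data, sorted by start time.
transcript = [
    TimedWord("Meet", 0),
    TimedWord("me", 400),
    TimedWord("at", 650),
    TimedWord("the", 800),
    TimedWord("station", 1000),
]

print(find_word(transcript, "station"))  # [1000]
print(word_at(transcript, 700).text)     # at
```

Searching returns offsets a player could seek to, while the reverse lookup shows how a playback position could highlight the current word.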

Visualizing sounds

Further, Google explains that it uses convolutional neural networks to associate different sounds with different colors. This is the same on-device machine learning model Google uses for Android 10’s Live Caption feature.

The model identifies different sounds like a dog barking or a musical instrument playing. It then assigns a color to that sound in the audio waveform. This helps users recognize sounds visually. So the next time a dog is barking in your recording, you can easily skip over it without having to scrub through the audio file.

Recorder checks for different types of sound profiles, such as speech and music, using a 960-millisecond analysis window that advances in 50-millisecond steps. The company says this process “makes it possible to pinpoint exact start and end times in a manner that is less prone to mistakes than analyzing consecutive large 960ms window slices on their own.”
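The overlapping-window scheme can be sketched in a few lines of Python. Only the 960 ms window and 50 ms step come from Google's description. The `toy_classify` function is a stand-in for the neural network, and the color palette and function names are assumptions made for illustration.

```python
from itertools import groupby

WINDOW_MS = 960  # length of each analysis window (from the article)
HOP_MS = 50      # step between consecutive window starts (from the article)

# Hypothetical palette; the app's real colors are not specified here.
COLORS = {"speech": "blue", "music": "orange"}


def window_starts(duration_ms, window_ms=WINDOW_MS, hop_ms=HOP_MS):
    """Start offsets of every full window that fits in the recording."""
    if duration_ms < window_ms:
        return []
    return list(range(0, duration_ms - window_ms + 1, hop_ms))


def change_points(starts, classify):
    """Collapse per-window labels into (start_ms, label, color) runs."""
    labelled = [(start, classify(start)) for start in starts]
    runs = []
    for label, group in groupby(labelled, key=lambda pair: pair[1]):
        first_start = next(group)[0]
        runs.append((first_start, label, COLORS.get(label, "grey")))
    return runs


def toy_classify(start_ms):
    """Stand-in for the model: pretends music begins 500 ms in."""
    return "speech" if start_ms < 500 else "music"


starts = window_starts(2000)
print(len(starts))                          # 21
print(change_points(starts, toy_classify))
# [(0, 'speech', 'blue'), (500, 'music', 'orange')]
```

Because the windows start every 50 ms rather than every 960 ms, a change of label can be located to within one 50 ms step, which is the advantage the quoted passage describes.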

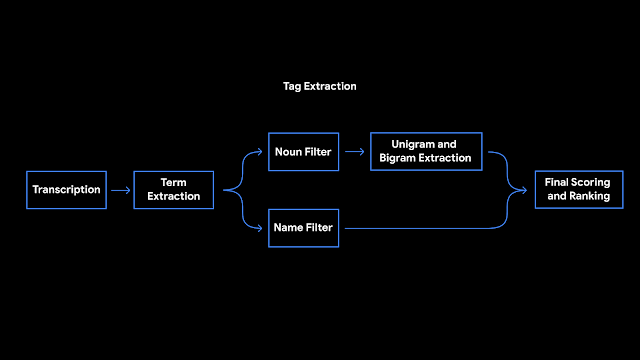

Suggesting titles and tags

Once a recording has ended, the app suggests tags and titles for it. To do this, Recorder counts how often each term occurs and notes its grammatical role in the sentence. Terms identified as entities are capitalized. An on-device algorithm then tags nouns and proper nouns, which users tend to remember easily. After this, the terms go through a language model for scoring and ranking. The final selections are what you see as title or tag suggestions.
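As a rough illustration of counting and ranking terms, the Python sketch below swaps in much simpler techniques than the ones Google describes. A small hypothetical stopword list stands in for part-of-speech tagging, mid-sentence capitalization stands in for entity detection, and a fixed boost stands in for the language-model scoring. All names and weights are assumptions.

```python
import re
from collections import Counter

# Hypothetical stopword list, a crude substitute for a part-of-speech tagger.
STOPWORDS = {"the", "a", "an", "and", "to", "of", "in", "we", "is",
             "at", "on", "for", "will", "with"}


def suggest_terms(transcript, top_n=3, proper_noun_boost=2.0):
    """Rank candidate title/tag terms by boosted frequency."""
    scores = Counter()
    proper = set()
    for sentence in re.split(r"[.!?]+", transcript):
        words = re.findall(r"[A-Za-z']+", sentence)
        for position, word in enumerate(words):
            key = word.lower()
            if key in STOPWORDS:
                continue
            # Capitalized away from the sentence start: treat as a proper noun.
            if position > 0 and word[0].isupper():
                proper.add(key)
                scores[key] += proper_noun_boost
            else:
                scores[key] += 1.0
    # Proper nouns are shown capitalized, echoing the capitalization of entities.
    return [key.capitalize() if key in proper else key
            for key, _ in scores.most_common(top_n)]


# Made-up sample transcript.
sample = ("We will meet in Berlin. The budget review is on Monday. "
          "Berlin budget needs approval.")
print(suggest_terms(sample))
```

In this sample, "Berlin" ranks first because it appears twice and receives the proper-noun boost once. A real system would rely on a tagger and a language model rather than these heuristics.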

Phew! That’s a lot of behind-the-scenes work. Clearly, making a smart recording app is no joke. Google also seems to have put a lot of thought into user privacy by keeping these processes restricted to your device. The app can’t differentiate between speakers yet, but maybe Google can add that in the future to make the app even better.

Are you using the new Google Recorder app? Let us know your experience in the comments section below.
