Affiliate links on Android Authority may earn us a commission.

Here's how Android 10's Live Caption actually works

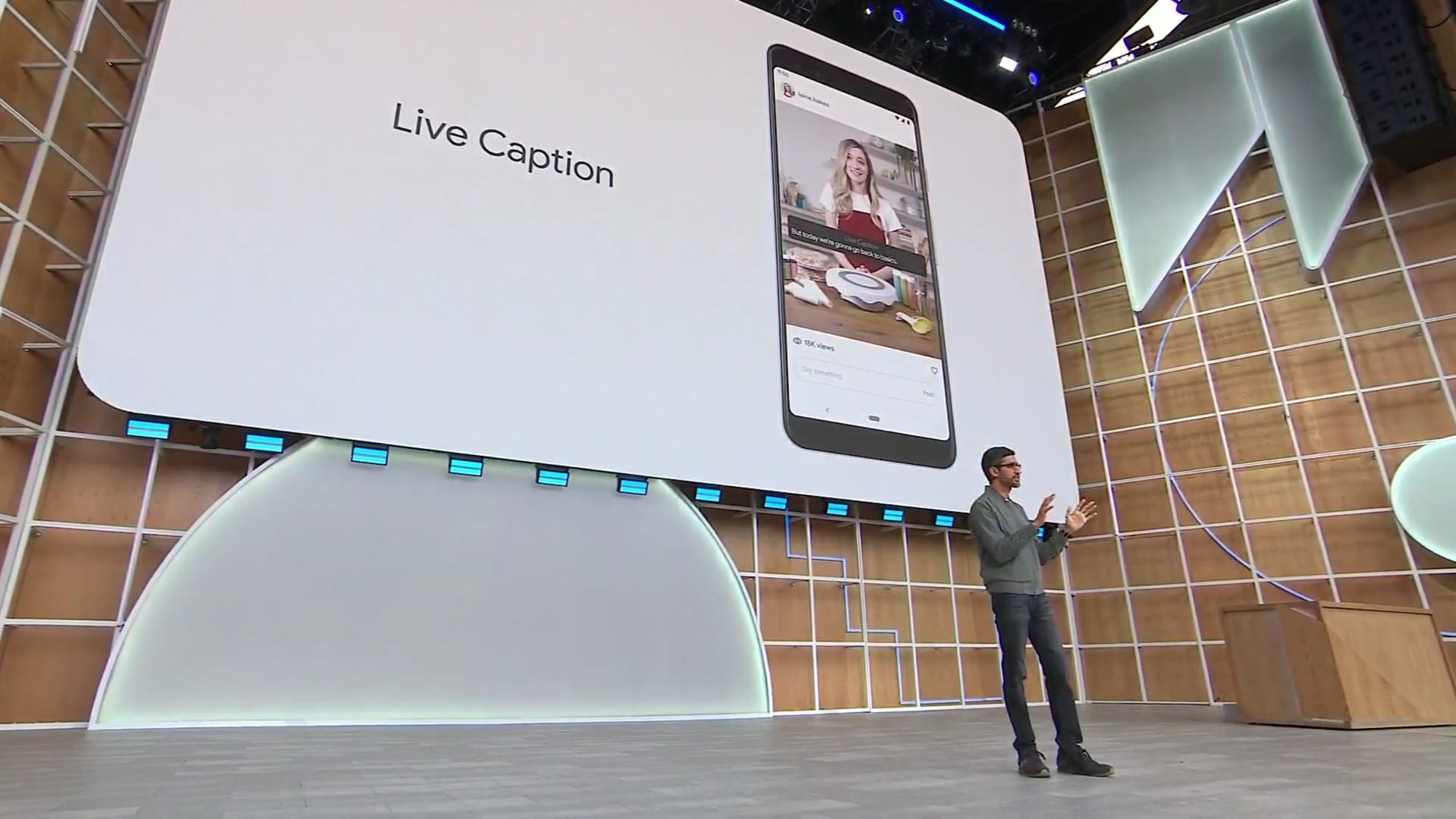

Live Caption is one of the coolest Android features yet, using on-device machine learning to generate captions for local videos and web clips.

Google has published a blog post detailing how this nifty feature works. For starters, Live Caption actually relies on three on-device machine learning models.

There’s a recurrent neural network sequence transduction (RNN-T) model for speech recognition itself, and Google is also using a second recurrent neural network that predicts punctuation from the recognized text.

The third on-device machine learning model is a convolutional neural network (CNN) that classifies sound events, such as birds chirping, people clapping, and music. Google says this model is derived from its work on the Live Transcribe accessibility app, which can transcribe both speech and sound events.

Reducing the impact of Live Caption

The company says it’s taken a number of measures to reduce Live Caption’s battery consumption and performance demands. For one, the full automatic speech recognition (ASR) engine only runs when speech is actually detected, as opposed to constantly running in the background.

“For example, when music is detected and speech is not present in the audio stream, the [MUSIC] label will appear on screen, and the ASR model will be unloaded. The ASR model is only loaded back into memory when speech is present in the audio stream again,” Google explains in its blog post.
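The load/unload behavior Google describes can be sketched as a small gate in front of the speech model. This is a minimal Python sketch, not Google's code: `SpeechRecognizer`, `CaptionGate`, and the `"speech"`/`"music"` labels are hypothetical, and the sound-event classifier's output is assumed to arrive as a set of label strings for each audio chunk.

```python
from dataclasses import dataclass, field
from typing import Set


class SpeechRecognizer:
    """Hypothetical stand-in for the large on-device ASR model."""

    def __init__(self) -> None:
        self.loaded = False
        self.load_count = 0  # how many times the model was (re)loaded into memory

    def load(self) -> None:
        self.loaded = True
        self.load_count += 1

    def unload(self) -> None:
        self.loaded = False

    def transcribe(self, audio: str) -> str:
        # Placeholder: a real ASR model would decode audio samples here;
        # in this sketch each "audio" chunk is already its own transcript.
        if not self.loaded:
            raise RuntimeError("ASR model must be loaded before transcribing")
        return audio


@dataclass
class CaptionGate:
    """Run the ASR only while the sound-event classifier reports speech."""

    asr: SpeechRecognizer = field(default_factory=SpeechRecognizer)

    def process(self, events: Set[str], audio: str) -> str:
        if "speech" in events:
            if not self.asr.loaded:
                self.asr.load()  # speech is back: load the ASR model again
            return self.asr.transcribe(audio)
        if self.asr.loaded:
            self.asr.unload()  # no speech: free the ASR model's memory
        if "music" in events:
            return "[MUSIC]"  # show the sound-event label instead of text
        return ""
```

Feeding it a speech chunk, then a music-only chunk, then speech again produces `"hello"`, `"[MUSIC]"`, and the new transcript, with the model loaded twice in total. The design choice is simply that the cheap classifier always runs while the expensive recognizer only occupies memory when there is speech to decode.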

Google has also used techniques such as neural connection pruning, which cuts down the size of the speech model. The company says this reduces power consumption by 50%, allowing Live Caption to run continuously.
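Google doesn't spell out its pruning criterion here. A common variant is magnitude pruning, which removes the connections whose weights are closest to zero. The sketch below assumes that criterion and a plain list-of-lists weight matrix; `magnitude_prune` and `density` are hypothetical helpers, and the sketch does not reproduce Google's 50% figure.

```python
from typing import List

Matrix = List[List[float]]


def magnitude_prune(weights: Matrix, sparsity: float) -> Matrix:
    """Return a copy of `weights` with the smallest-magnitude fraction set to zero."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    # Rank every connection by the absolute value of its weight.
    ranked = sorted(
        (abs(w), r, c) for r, row in enumerate(weights) for c, w in enumerate(row)
    )
    k = int(len(ranked) * sparsity)  # number of connections to cut
    pruned = [list(row) for row in weights]  # leave the input untouched
    for _, r, c in ranked[:k]:
        pruned[r][c] = 0.0
    return pruned


def density(weights: Matrix) -> float:
    """Fraction of connections that are still non-zero."""
    total = sum(len(row) for row in weights)
    return sum(1 for row in weights for w in row if w != 0.0) / total
```

For example, `magnitude_prune([[0.9, -0.05, 0.4], [-0.7, 0.01, -0.2]], 0.5)` returns `[[0.9, 0.0, 0.4], [-0.7, 0.0, 0.0]]`, cutting the three weakest connections. Zeroed weights only save memory and compute if the runtime stores or executes the layer in a sparse format; this sketch just shows which connections would be removed.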

Google explains that the speech recognition results are updated a few times each second as the caption is formed, but punctuation prediction is handled differently. To reduce resource demands, the search giant says it delivers punctuation prediction “on the tail of the text from the most recently recognized sentence.”
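One way to read "on the tail of the text" is: punctuate only the sentence still being recognized, reuse the previous result when that sentence hasn't changed since the last update, and freeze sentences once they're finished. Below is a minimal sketch under those assumptions; `TailPunctuator`, the `sentence_done` flag, and `toy_model` are hypothetical, and the toy model merely capitalizes the text and adds a full stop in place of a real neural network.

```python
from typing import Callable, List


def toy_model(text: str) -> str:
    # Hypothetical stand-in for the punctuation network.
    return (text[:1].upper() + text[1:] + ".") if text else ""


class TailPunctuator:
    """Re-run punctuation only on the sentence that is still being recognized."""

    def __init__(self, model: Callable[[str], str]) -> None:
        self.model = model
        self.head: List[str] = []  # finished sentences, punctuated once and frozen
        self.tail_raw = ""  # latest raw ASR text of the current sentence
        self.tail_punct = ""  # punctuated form of tail_raw
        self.model_calls = 0  # how often the (expensive) model actually ran

    def update(self, tail: str, sentence_done: bool = False) -> str:
        """Feed the newest ASR hypothesis for the current sentence."""
        if tail != self.tail_raw:
            # The tail changed, so punctuate the tail only.
            self.model_calls += 1
            self.tail_raw, self.tail_punct = tail, self.model(tail)
        # Otherwise the previous punctuated tail is reused as-is.
        parts = self.head + ([self.tail_punct] if self.tail_punct else [])
        caption = " ".join(parts)
        if sentence_done:
            # Freeze the finished sentence; later updates never touch it again.
            self.head.append(self.tail_punct)
            self.tail_raw, self.tail_punct = "", ""
        return caption
```

Calling `update("hello there")` twice runs the model once and returns `"Hello there."` both times; after the sentence is marked done, `update("how are you")` returns `"Hello there friend. How are you."` (assuming the finished sentence was `"hello there friend"`) without re-punctuating the earlier sentence.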

Live Caption is now available on the Google Pixel 4 series, and Google says it’ll be available “soon” on the Pixel 3 series and other devices. The company says it’s also working on support for other languages and better support for multi-speaker content.
