
What is deepfake? Should you be worried?

Deepfake content is sowing confusion among people who grew up with the idea that seeing is believing. Photos and videos, once considered undeniable evidence, are now being questioned by the masses. That is to be expected once you find out the amazingly realistic video of Barack Obama calling Donald Trump a “total and complete dip****” is nothing but a deepfake creation.

The tech involved is raising eyebrows and generating questions, which is why we are here to give you the full rundown on deepfakes: what they are, how they work, and whether there is really a reason to worry.

What is deepfake?

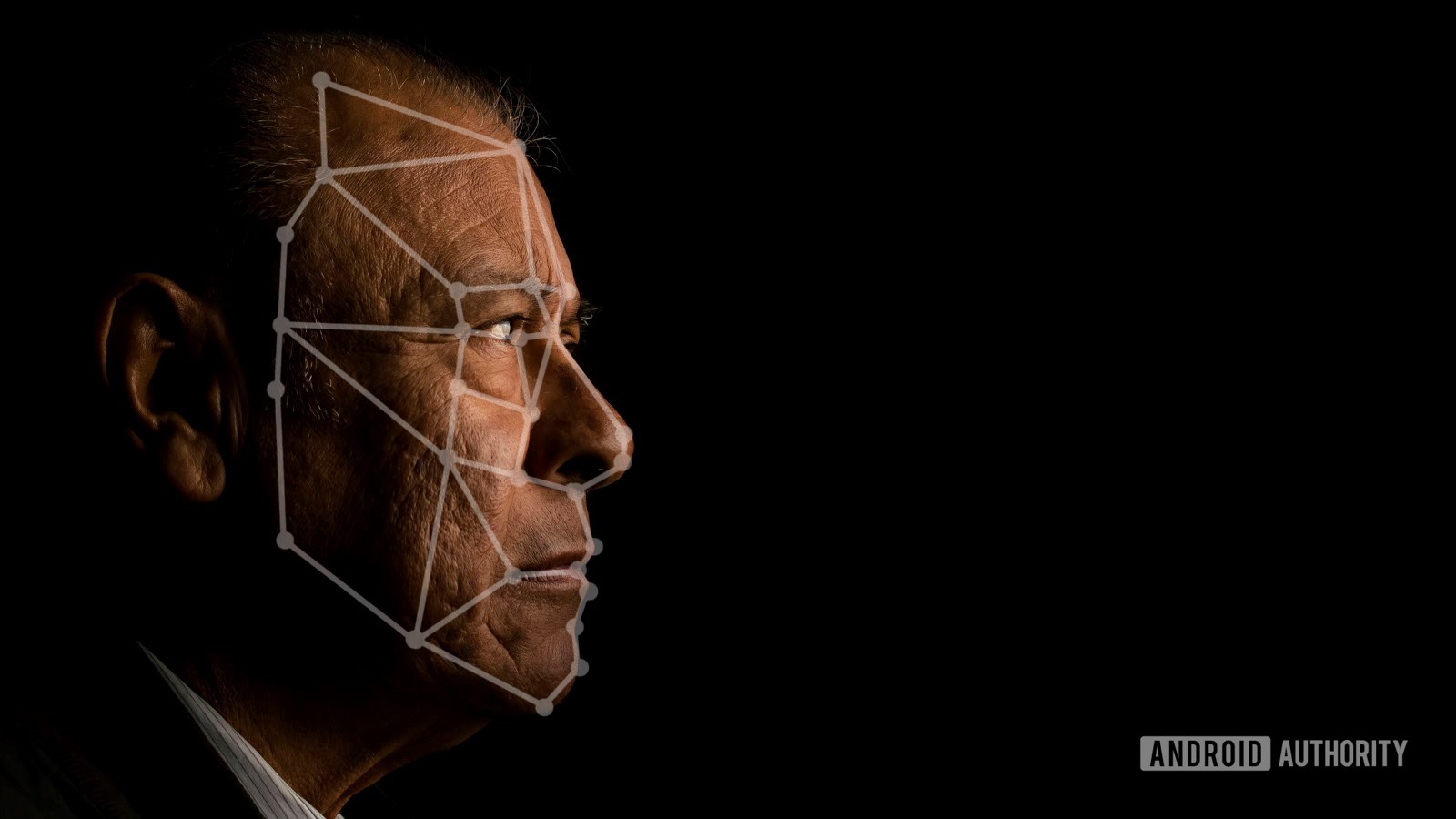

Deepfake is an AI (artificial intelligence) technique that uses machine learning to create or manipulate content. It is often used to create montages or superimpose one person’s face onto another’s, but its capabilities extend far beyond that.

This technology has plenty of other applications. These include manipulating or creating sound, movement, landscapes, animal content, and more.

How does deepfake work?

Deepfake content is created through a machine learning technique known as a GAN (generative adversarial network). A GAN uses two neural networks, a generator and a discriminator, that constantly compete against each other.

The generator tries to create a realistic image, while the discriminator tries to determine whether that image is real or fake. If the generator fools the discriminator, the discriminator uses that feedback to become a better judge. Likewise, if the discriminator catches a fake, the generator uses that feedback to produce a more convincing one. This cycle can continue until an image, video, or audio clip is no longer noticeably fake to the human eye.
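To make that tug-of-war a bit more concrete, here is a minimal toy sketch in plain Python. It is not how real deepfake software is built: actual tools train deep neural networks on images, while this example assumes the "content" is a single number drawn from around 4.0. The names `Generator`, `Discriminator`, and `train`, along with the learning rate and data values, are made up for illustration. The generator is just `a*z + b`, the discriminator is a one-input logistic scorer, and the two take turns updating, which is the same alternating idea described above.

```python
import math
import random


def sigmoid(s):
    """Numerically stable logistic function, returns a value between 0 and 1."""
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)


class Discriminator:
    """Toy judge: D(x) = sigmoid(w*x + c). Closer to 1 means 'looks real'."""

    def __init__(self):
        self.w, self.c = 0.0, 0.0

    def score(self, x):
        return sigmoid(self.w * x + self.c)

    def step(self, real, fake, lr):
        # Gradient step on the loss -log D(real) - log(1 - D(fake)).
        g_real = self.score(real) - 1.0
        g_fake = self.score(fake)
        self.w -= lr * (g_real * real + g_fake * fake)
        self.c -= lr * (g_real + g_fake)


class Generator:
    """Toy forger: turns random noise z into a fake sample G(z) = a*z + b."""

    def __init__(self):
        self.a, self.b = 1.0, 0.0

    def sample(self, z):
        return self.a * z + self.b

    def step(self, z, disc, lr):
        # Gradient step on the loss -log D(G(z)): try to look more "real".
        d_fake = disc.score(self.sample(z))
        grad_x = (d_fake - 1.0) * disc.w  # d(loss) / d(fake sample)
        self.a -= lr * grad_x * z
        self.b -= lr * grad_x


def train(steps=2000, lr=0.05, real_mean=4.0, real_std=0.5, seed=0):
    """Alternate one discriminator update and one generator update per step."""
    rng = random.Random(seed)
    gen, disc = Generator(), Discriminator()
    for _ in range(steps):
        real = rng.gauss(real_mean, real_std)       # a genuine sample
        fake = gen.sample(rng.gauss(0.0, 1.0))      # the generator's forgery
        disc.step(real, fake, lr)                   # the judge learns
        gen.step(rng.gauss(0.0, 1.0), disc, lr)     # the forger learns
    return gen, disc


if __name__ == "__main__":
    gen, disc = train()
    print("generator offset b:", gen.b)
    print("discriminator score for a fresh fake:", disc.score(gen.sample(0.0)))
```

Early in training the generator's output drifts toward the real data, because the discriminator's feedback tells it which direction looks "more real." Simple setups like this one tend to keep circling around the target rather than settling on it, which is one reason real GANs are notoriously fiddly to train.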


The origins of deepfake

The first deepfake videos were obviously porn! More specifically, it was common to see celebrity faces superimposed over those of porn actresses. Nicolas Cage memes were also popular, among other fun inventions.

“The first deepfake videos were obviously porn!” (Edgar Cervantes)

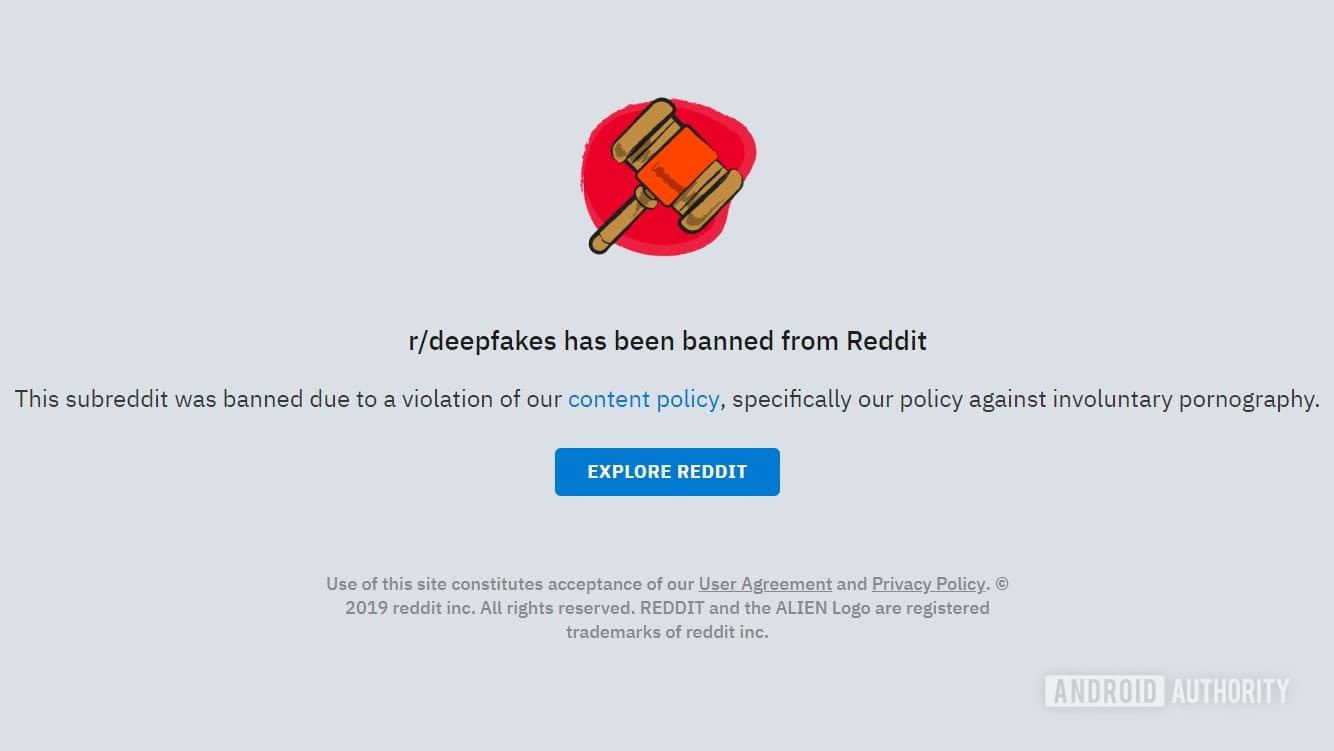

The word deepfake became synonymous with this technique in 2017, thanks to a Reddit user who went by the name of “deepfakes.” Others joined the user at the now-banned r/deepfakes subreddit, where they shared their creations with the world.

The real creator of generative adversarial networks is Ian Goodfellow. Along with his colleagues, he introduced the concept at the University of Montreal in 2014. He then moved on to work for Google and later joined Apple.


The dangers

Content manipulation used to require great skill. You needed a powerful computer and a really good reason (or just too much free time) to make fake content. That is no longer the case. Deepfake creation software like FakeApp is free, easy to find, and doesn’t require much computing power. And because it does all of the work on its own, you don’t need to be a skilled editor or coder to make insanely realistic deepfake media.


This is why the general public, celebrities, political entities, and governments worry about the deepfake movement. In the wrong hands, deepfake creation can be used for falsifying much more than silly Nicolas Cage memes. Imagine someone creating fake news or incriminating video evidence. You can add fake and revenge porn to the problem. Things can get messy real quick.

Another reason to worry about deepfake content is that public figures could use it to deny things they really did. Because deepfake videos seem so real, anyone could claim a genuine clip is a deepfake.


Finding a solution

While deepfake videos look very close to real, a trained eye can still spot one by paying close attention. The concern is that we may not be able to tell the difference at some point in the future.

Twitter, Pornhub, Reddit, Facebook, YouTube, and others have pledged to get rid of such content and have been working on it. On the more official side, DARPA (Defense Advanced Research Projects Agency) is working with research institutions and the University of Colorado to create a way to spot deepfakes. Of course, Google’s parent company, Alphabet, is also in on the action, working on software that can help identify deepfake content efficiently. Alphabet calls this service Assembler.

Furthermore, governments are worried about deepfakes. Fake videos and images may cause a commotion among citizens, especially in a time of pandemics, world tensions, and very conflicting political ideas.


The state of California has joined the efforts against deepfake content with a couple of laws. Governor Newsom signed bills AB-730 and AB-602. AB-730 protects politicians from fake videos that may misrepresent them. AB-602 protects people whose image is used in pornographic videos without their consent.

Until we have more refined software that can spot irregularities in such videos, the best we can do is be more observant and less gullible. After all, a true detection solution is not easy to make. Facebook recently held the Deepfake Detection Challenge. The best-scoring software was only able to achieve about 65% accuracy.

More recently, Facebook announced it can now detect deepfakes and recognize where they came from. This seems to be a big stride towards combating deepfakes. At least the bad ones, because there are some pretty fun ones too! Check them out in the next section.

Popular deepfake content

Jordan Peele joined BuzzFeed to put together this video, which is meant to raise awareness.

Online manipulation expert Claire Wardle shows us how realistic these videos can look by appearing as Adele for the first 30 seconds of the video. This video from The New York Times gives us great insight into the topic.

WatchMojo has curated a list of some of the most popular videos around. It is a fun video with plenty of great examples.

Do you like Rick and Morty? Apparently, John F. Kennedy thinks you have to be a real smart fellow to understand the cartoon. Whether you side with him or not, you can bet the real president wasn’t around to watch the modern, raunchy, adult cartoon.

This one is hilarious, but also awkward. It’s just so realistic, yet it makes no sense, all at the same time.

Love him or hate him, we can’t deny Trump makes for some fun moments. Some good deepfakes feature the former US president, but this one is definitely one of the funniest. It comes from YouTuber Ctrl Shift Face.

The new Wonder Woman played by Gal Gadot is great, but some of us old-timers miss the original one. In this deepfake, YouTuber Deepfaker gives the new Wonder Woman Lynda Carter’s face. It’s actually pretty awesome!
