What goes into making a great smartphone camera?

Our smartphones are little marvels of photography. They are so advanced they even challenge dedicated camera systems. Surely, many of you have wondered how these tiny cameras got so good.

Companies spend billions in R&D so you can take an Instagram-worthy photo of your meal. It’s all very complicated, and most people don’t understand a bunch of the terms and concepts involved.

We are here to clear the waters and help you understand what makes those beautiful pictures possible. A lot goes into making a great smartphone camera, so let’s take you through every component.

“Companies are spending billions in R&D so that you can take an Instagram-worthy photo of your meal.” Edgar Cervantes

Sensor

Smartphone cameras have come a long way, but the industry will always struggle with image sensors. Larger sensors outperform smaller ones (of the same quality). Size matters, and there is no way around it.

This is a challenge for smartphone manufacturers. They can’t exactly pack a full-frame sensor into a small, thin handset. Considering a larger sensor also needs a larger lens, smartphone makers usually stick with 1/2.3-inch to 1/1.7-inch sensors.

To put these numbers into perspective, the HUAWEI P30 Pro has a 1/1.7-inch sensor. The Google Pixel 3 XL is known for its amazing camera too, and it has a 1/2.55-inch sensor. Both are dwarfed by the full-frame sensors in some DSLR cameras, which measure about 36 x 24mm.

When space is a limitation, manufacturers need to create better quality sensors and alter a few things. Having a smaller sensor is a drawback, but companies can do certain things to improve image quality.

One popular method is to create sensors with larger pixels, which capture more light. Pixel size is measured in µm (micrometers) and it usually ranges between 1.2µm and 2.0µm in the smartphone world.
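To get a feel for how much pixel size matters, here is a rough sketch. It assumes the light a pixel gathers scales with its surface area (pitch squared) and ignores real-world factors such as microlenses and wiring overhead. The function name is ours, purely for illustration.

```python
def relative_light(pixel_pitch_um: float, reference_pitch_um: float) -> float:
    """Ratio of light-collecting area between two square pixels.

    Assumption: gathered light is proportional to pixel area only.
    """
    return (pixel_pitch_um / reference_pitch_um) ** 2


# Compare the two ends of the typical smartphone range.
print(round(relative_light(2.0, 1.2), 2))  # 2.78
```

Under that simplification, a 2.0µm pixel collects almost three times the light of a 1.2µm one.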

Another interesting method HUAWEI introduced with the P30 Pro was to use a RYB (red-yellow-blue) sensor, as opposed to the traditional RGB (red-green-blue) configuration. Switching to yellow-capturing photosites allows more light to be captured. You can learn more about that in our dedicated article.

As a user, you will notice that a better sensor delivers less noise and grain, better low-light performance, enhanced colors, improved dynamic range, and sharper images.

Glass/lenses

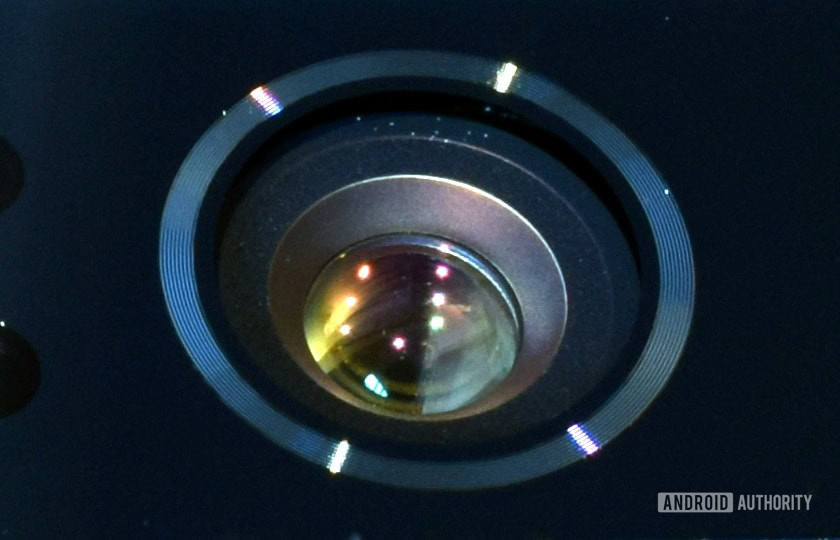

Lenses are usually ignored when talking about smartphone photography. This is odd, considering they are one of the most important subjects in regular photography. A well-designed, transparent, and clean lens will provide better image quality.

Lenses also determine aperture, which is a significant factor to take into consideration. We all love to hear about wide-aperture lenses, but these come with a risk: the wider the aperture, the harder it is to keep optical aberrations under control. Camera lenses are built from multiple “correcting groups” designed to focus the light properly and reduce aberrations. Cheaper lenses tend to feature fewer groups and are therefore more prone to issues. Lens materials also play an important part here, with higher quality glass and multiple coatings offering better correction and less distortion.

It’s hard to tell how good or bad smartphone lenses are, because manufacturers usually don’t talk about them. There are some brand names we can trust in the smartphone industry, though. Sony and Nokia work with ZEISS, and HUAWEI works with Leica. These brands are renowned for offering quality lenses.

Multiple cameras

Smartphones used to have a single camera, but adding more has become common. Many phones have two or three cameras nowadays. Then we have the crazy ones, like the Nokia 9 PureView and its five shooters.

Manufacturers put multiple cameras on a phone for several reasons, but the main one is that it makes the photo experience more flexible. Take the HUAWEI P30 Pro; it has a main camera for general purposes, a wide-angle camera, and the famous 125mm periscope zoom lens. This makes it possible to use each camera for specific circumstances.

Multi-camera setups also play a big role in computational photography. For example, the Nokia 9 PureView has three monochrome sensors, two RGB sensors, and a ToF (time of flight) camera. All these cameras work together in every shot to capture the most detail, color, light, and depth information. In fact, every shot coming from this smartphone is an HDR photo.

If you can’t have a larger sensor or more advanced glass, you might as well have a bunch of them. This is how manufacturers make up for the photography limitations presented by smartphones.

Image stabilization

Smartphone cameras use two types of image stabilization: optical image stabilization (OIS) and electronic image stabilization (EIS). Depending on the phone, you may have none, one, or both of these features.

Image stabilization technologies are meant to reduce shake and provide a smoother, sharper shot. Ideally you would want to have the option of using both, because OIS is better for photos and EIS is focused on video. If you must pick one of them, the best option is OIS.

OIS

OIS compensates for small movements of the camera during exposure. In general terms it uses a floating lens, gyroscopes, and small motors. The elements are controlled by a microcontroller which moves the lens very slightly to counteract the shaking of the camera or phone — if the phone moves to the right, the lens moves left.

This is the best option because all stabilization is done mechanically, not through software. This means no quality is lost in the process.

EIS

Electronic image stabilization works through software. Essentially, EIS breaks the video into chunks and compares each one to the previous frames. It then determines whether movement in the frame was natural or unwanted shake, and corrects it.

EIS usually degrades quality, as it needs space from the content’s edges to apply corrections. It’s improved in the last few years, though. Smartphone EIS usually takes advantage of the gyroscope and accelerometer, making it more precise and reducing quality loss. As per usual nowadays, software is killing it.
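The reason EIS needs space around the edges can be shown with a minimal crop-and-shift sketch. This is a simplified illustration, not how any particular phone implements it: we assume the shake offset (in pixels) is already known, for example from the gyroscope, and the `stabilize` and `window` helpers are hypothetical names.

```python
def stabilize(frame, shift_x, shift_y, margin):
    """Crop a frame so the content stays put despite camera shake.

    frame: 2D list of pixel values.
    shift_x, shift_y: how far the content moved inside the frame (pixels).
    margin: border reserved on each side for corrections.
    """
    height, width = len(frame), len(frame[0])
    # Move the crop window along with the content, clamped to the frame.
    x0 = max(0, min(2 * margin, margin + shift_x))
    y0 = max(0, min(2 * margin, margin + shift_y))
    out_w, out_h = width - 2 * margin, height - 2 * margin
    return [row[x0:x0 + out_w] for row in frame[y0:y0 + out_h]]


def window(world, ox, oy, size):
    """Simulate a camera looking at part of a larger scene."""
    return [row[ox:ox + size] for row in world[oy:oy + size]]


world = [[10 * r + c for c in range(10)] for r in range(10)]
steady = window(world, 2, 2, 6)
shaken = window(world, 3, 2, 6)  # camera jolted one pixel to the right

# The content now sits one pixel further left, so shift_x is -1.
print(stabilize(steady, 0, 0, 1) == stabilize(shaken, -1, 0, 1))  # True
```

Note that the 6 x 6 frames come out as 4 x 4 after stabilization. That lost border is the quality cost described above.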

Pixel binning

You have probably heard this term before and have no idea what it means. The point is it reduces noise and helps in low-light situations.

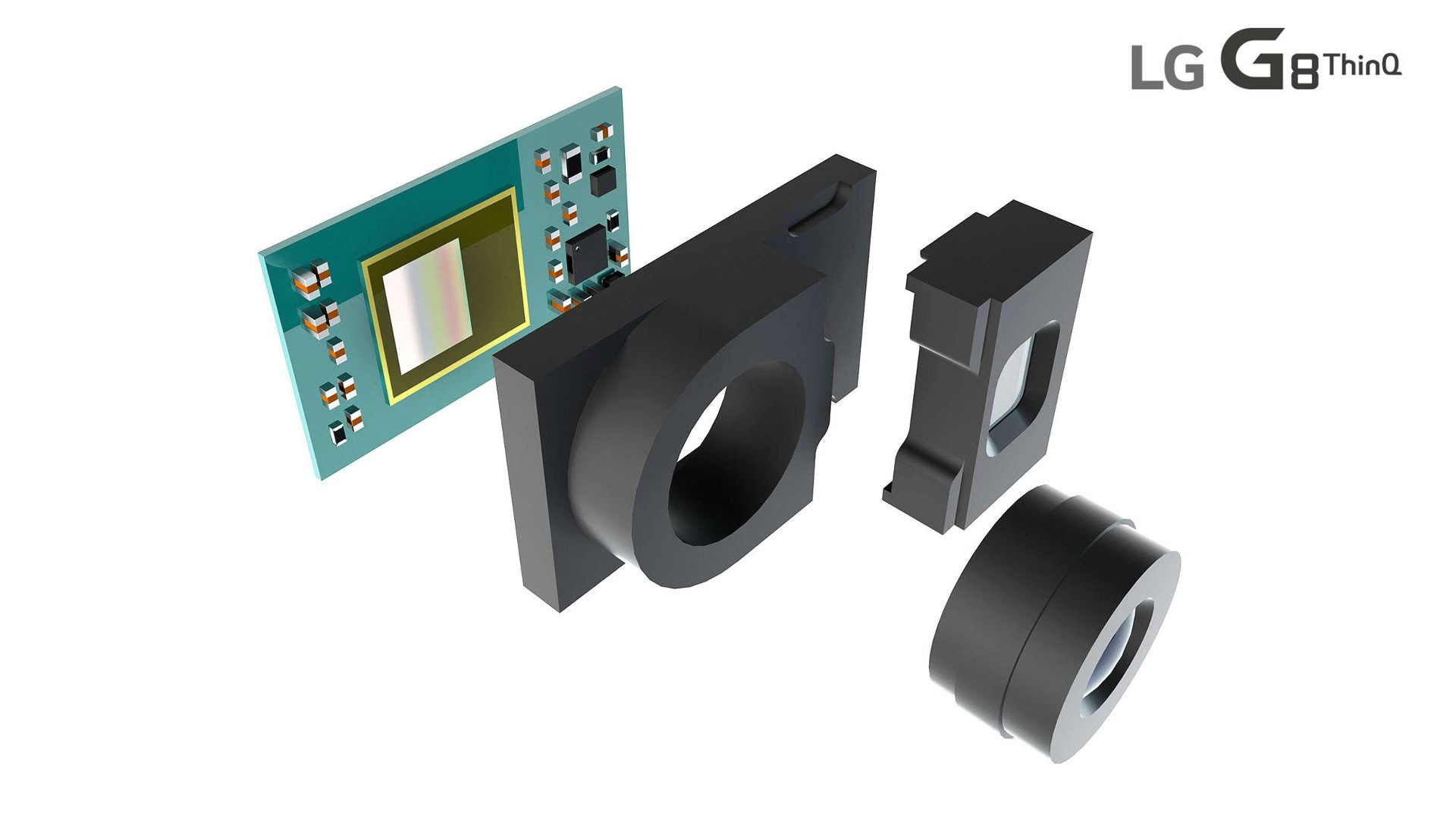

Pixel-binning is a process that combines data from four pixels into one. Using this technique, a camera sensor with 0.9 micron pixels can produce results equivalent to 1.8 micron pixels.

The biggest downside is that resolution is also divided by four when taking a pixel-binned shot. That means a binned shot on a 48MP camera is actually 12MP.
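Here is a small sketch of the idea on a single-channel grid of values. It averages each 2 x 2 block, which is one simple way to combine four pixels; real sensors may sum charge or work per color, so treat this as an illustration only. Both function names are ours.

```python
def bin_2x2(pixels):
    """Combine each 2x2 block of a single-channel image by averaging."""
    h, w = len(pixels), len(pixels[0])
    return [
        [
            (pixels[y][x] + pixels[y][x + 1]
             + pixels[y + 1][x] + pixels[y + 1][x + 1]) / 4
            for x in range(0, w - 1, 2)
        ]
        for y in range(0, h - 1, 2)
    ]


def binned_specs(megapixels, pitch_um):
    """Resolution drops by four, effective pixel pitch doubles."""
    return megapixels / 4, pitch_um * 2


raw = [
    [10, 12, 50, 54],
    [14, 16, 52, 56],
]
print(bin_2x2(raw))           # [[13.0, 53.0]]
print(binned_specs(48, 0.9))  # (12.0, 1.8)
```

Eight noisy readings become two smoother ones, which is the trade of resolution for cleaner low-light results.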

Pixel binning is generally made possible thanks to the use of a quad-Bayer filter on camera sensors. A Bayer filter is a color filter used in most digital camera sensors, sitting atop the pixels so the sensor can capture an image in red, green, and blue.

Your standard Bayer filter is made up of 50 percent green filters, 25 percent red filters, and 25 percent blue filters. According to photography resource Cambridge in Colour, this arrangement is meant to imitate the human eye, which is most sensitive to green light. Once this image is captured, it’s interpolated and processed to produce a final, full color image.
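The 50/25/25 split falls out of the repeating 2 x 2 tile. The sketch below assumes the common RGGB ordering of that tile (other orderings exist) and simply counts the filters.

```python
from collections import Counter


def bayer_pattern(height, width):
    """Color letter per photosite for an RGGB Bayer mosaic (assumed layout)."""
    tile = [["R", "G"], ["G", "B"]]
    return [[tile[y % 2][x % 2] for x in range(width)] for y in range(height)]


mosaic = bayer_pattern(4, 4)
for row in mosaic:
    print(" ".join(row))

counts = Counter(color for row in mosaic for color in row)
total = sum(counts.values())
shares = {color: counts[color] / total for color in "RGB"}
print(shares)  # {'R': 0.25, 'G': 0.5, 'B': 0.25}
```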

Not many phones use pixel binning, but it’s a nice treat. Some of them include the LG G8 ThinQ, Xiaomi Redmi Note 7 series, Xiaomi Mi 9, HONOR View 20, HUAWEI Nova 4, vivo V15 Pro, and the ZTE Blade V10.

Autofocus

Smartphone cameras generally use three types of autofocus systems: contrast-detect, phase-detect, and dual-pixel. We will tell you about them in order, from worst to best.

Contrast-detect autofocus

This is the oldest of the three, and works by measuring contrast between areas. The idea is that a focused area will have a higher contrast, as edges will be sharper. When an area reaches a certain contrast, the camera will consider it in focus. It is an old, slow technique, as it requires moving focus elements until the camera finds the right contrast.
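A toy version of that search looks like this. The lens and scene are simulated: `simulated_capture` is a made-up stand-in that blurs a hard edge more the further the lens sits from the in-focus position, and the contrast score is just the sum of squared differences between neighboring pixels. Real cameras use more refined metrics and stop early once contrast starts to fall.

```python
def contrast(row):
    """Sum of squared differences between neighboring pixels."""
    return sum((b - a) ** 2 for a, b in zip(row, row[1:]))


def box_blur(row, radius):
    """Average each pixel with its neighbors within `radius`."""
    if radius == 0:
        return list(row)
    out = []
    for i in range(len(row)):
        lo, hi = max(0, i - radius), min(len(row), i + radius + 1)
        out.append(sum(row[lo:hi]) / (hi - lo))
    return out


def simulated_capture(lens_position, in_focus_at=5):
    """Hypothetical camera: blur grows with distance from focus."""
    edge = [0] * 8 + [100] * 8
    return box_blur(edge, abs(lens_position - in_focus_at))


def contrast_detect_autofocus(capture, positions):
    """Try every lens position and keep the one with the highest contrast."""
    best_position, best_score = None, float("-inf")
    for position in positions:
        score = contrast(capture(position))
        if score > best_score:
            best_position, best_score = position, score
    return best_position


print(contrast_detect_autofocus(simulated_capture, range(11)))  # 5
```

Every candidate position costs a lens move and a fresh capture, which is why this method is slow.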

Phase-detect autofocus

“Phase” means that light rays originating from a specific point hit opposing sides of a lens with equal intensity – in other words they are “in phase.” Phase-detect autofocus uses photodiodes across the sensor to measure differences in phase. It then moves the focusing element in the lens to bring the image into focus. It is really fast and accurate, but falls behind dual-pixel autofocus because it uses dedicated photodiodes instead of using a large number of pixels.

Dual-pixel autofocus

This is by far the best autofocus technology available for smartphones. Dual-pixel autofocus is like phase-detect, but it uses a greater number of focus points across the sensor. Instead of relying on dedicated focus pixels, it splits every pixel into two photodiodes that can compare subtle phase differences in order to calculate where to move the lens. Because the sample size is much higher, the camera can bring the image into focus quicker.
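Both phase-detect and dual-pixel systems boil down to comparing two views of the same scene and measuring how far apart they are. The sketch below is a simplified illustration using one row of made-up readings from the "left" and "right" photodiodes; it finds the shift that lines them up best. Turning that shift into an actual lens movement depends on calibration we don't model here.

```python
def phase_offset(left, right, max_shift):
    """Shift (in samples) that best aligns `right` with `left`.

    A result of 0 means the two views already match (in focus).
    """
    best_shift, best_error = 0, float("inf")
    for shift in range(-max_shift, max_shift + 1):
        pairs = [
            (left[i], right[i + shift])
            for i in range(len(left))
            if 0 <= i + shift < len(right)
        ]
        # Mean absolute difference over the overlapping samples.
        error = sum(abs(a - b) for a, b in pairs) / len(pairs)
        if error < best_error:
            best_shift, best_error = shift, error
    return best_shift


left = [0, 0, 10, 80, 10, 0, 0, 0, 0, 0]
right = [0, 0, 0, 0, 10, 80, 10, 0, 0, 0]
print(phase_offset(left, right, max_shift=4))  # 2
print(phase_offset(left, left, max_shift=4))   # 0
```

Unlike contrast detection, a single comparison tells the camera both how far and in which direction it is off, so there is no need to hunt back and forth.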

Some believe a faster autofocus doesn’t matter much, but it makes a huge difference when taking an action shot, for example. Even fractions of a second are valuable during fleeting moments. Nobody likes a blurry, half-focused image.

Megapixels

Is a higher megapixel count better? The answer is, it depends. It depends on your own needs and other factors.

When having more MP is better

More megapixels means more definition. While it won’t always necessarily make your photo better, it will give it more detail. This is a nice treat for those who like to crop, as a higher-megapixel image will have more pixels to work with, and therefore, more pixels to spare.

More pixels can also mean better print quality if you ever decide to make hard copies of your images. It will only make a difference if your prints are large enough, though. The Nyquist Theorem teaches us that an image will look substantially better if we record it at twice the maximum dimensions of our intended medium. With that in mind, a five by seven inch photo in print quality (300dpi) would need to be shot at 3,000 x 4,200 pixels for best results, or about 12MP.
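The arithmetic behind that figure is easy to check. This small helper (our own naming) multiplies the print size by the print resolution and then by the 2x oversampling factor mentioned above.

```python
def required_pixels(width_in, height_in, dpi=300, oversample=2):
    """Pixel dimensions and megapixels needed for a print.

    oversample=2 follows the "record at twice the size" rule of thumb.
    """
    px_w = width_in * dpi * oversample
    px_h = height_in * dpi * oversample
    return px_w, px_h, px_w * px_h / 1_000_000


print(required_pixels(5, 7))  # (3000, 4200, 12.6)
```

You can plug in other print sizes to see how quickly the requirement grows.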

When having fewer MP is better

Printing smartphone photos is a rare and dying habit, so having more printing power won’t make a difference to most of us. What it will do is make image files larger, which will occupy your precious storage space. Not to mention that editing them on low-powered devices might make for a sluggish experience.

It is also important to keep in mind having more pixels in a tiny space will make pixels smaller. Smaller pixels can take in less light and produce more noise. Smartphone manufacturers seem to have found a balance between size and quality, keeping sensors at about 12MP and enlarging pixels.

There are always exceptions, though. A clear example is the HONOR View 20, which has a 48MP sensor, but it is also a larger 1/2-inch sensor and the camera uses pixel binning. In this case the manufacturer had a reason to use a higher megapixel count, and set up the device’s hardware accordingly.
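To see how megapixel count and pixel size pull against each other, here is a rough estimate of pixel pitch on a fixed sensor area. The 6.4 x 4.8mm dimensions are an assumption on our part (a nominal figure commonly quoted for the 1/2-inch type), and the calculation pretends square pixels cover the entire surface.

```python
import math


def pixel_pitch_um(sensor_width_mm, sensor_height_mm, megapixels):
    """Approximate pitch of square pixels tiling the whole sensor area."""
    area_um2 = (sensor_width_mm * 1000) * (sensor_height_mm * 1000)
    return math.sqrt(area_um2 / (megapixels * 1_000_000))


# Same assumed sensor area, two different pixel counts.
for mp in (12, 48):
    print(mp, round(pixel_pitch_um(6.4, 4.8, mp), 2))
# 12 1.6
# 48 0.8
```

Quadrupling the pixel count on the same area halves the pitch, which is where pixel binning comes in to claw back the larger effective pixel.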

Software

Some physical limitations we simply can’t improve on — at least not by much. Smartphone camera hardware is reaching a plateau and eventually manufacturers won’t be able to sell a camera that is between two and five percent better. Until some breakthrough imaging technology replaces the current one, this has become a coding battle.

Software steps in to the rescue wherever hardware can’t deliver. With computational photography, phones know what you are shooting, where you are shooting, and at what time you are shooting. This technique can analyze a frame and make decisions for you, such as making a sky more blue, adapting white balance in the dark, and enhancing colors when needed.

Software also makes complex features possible, like portrait mode, HDR, and night mode. All these processes used to require special equipment, time, knowledge, and effort. Now software takes a lot of the grunt work from the shooter. Thanks to phones with multiple cameras, software can also take multiple images and merge them to produce a single, improved photo.

Phones may one day beat traditional cameras, and it’s all thanks to software. We are only scratching the surface here, but you can let our very own David Imel give you his thoughts on computational photography and how it will revolutionize everything.

So, what should you look for?

That was a lot of information to take in, so here’s a brief summary of what you should look for when shopping for a good smartphone camera.

- A larger sensor is always best. 1/1.7 inches is about as big as most smartphone camera sensors go. There are bigger, but they are rare.

- Look for more megapixels if you absolutely have to print (or really need larger images). Otherwise, prioritize larger pixels, or techniques like pixel binning. Pixel size is measured in µm (micrometers), and anything over 1.2µm should be good. A good smartphone should have at least 12MP, which is plenty for online use and small prints.

- Lenses are very important. Though the information is not always readily available, try to verify your phone has quality glass. Some manufacturers partner with renowned brands like Leica or ZEISS.

- Good software is key. Research software enhancements. All manufacturers approach software differently, which produces varied results. Samsung is known for high contrast and saturated colors. Google’s Pixel devices also have great software that produces high dynamic range, natural colors, and crisp images.

- Dual-pixel autofocus is the best in smartphones. Phase-detect autofocus is also very good, but it will be a tiny bit slower.

To think all of these components can fit in such a small space. Smartphone cameras are true marvels of technology. Now take all this information and find out where your next camera phone stands in terms of smartphone photography.
