Affiliate links on Android Authority may earn us a commission.

Google I/O 2019 keynote: Everything you need to know!

Google I/O 2019 is upon us, and one of its most important events is also the first one: the keynote. This year’s keynote has a lot of promise, with expected topics ranging from a new phone to the next Android Q beta and a bunch of other stuff. We’ll be watching live via the video above and updating this article as the keynote progresses.

In addition, we expect to see some stuff about apps and games along with Google Home products. We also have Eric Zeman, David Imel, and Justin Duino on the floor to bring even more Google I/O 2019 coverage over the next several days.

The live stream

You can watch the live stream with the video above when the event starts at 1PM ET. We expect the link to keep working after the event is over as well. Below, we’ll update the article as more information becomes available.

Google I/O 2019 opening

We open Google I/O 2019 with a montage featuring gaming, virtual reality, augmented reality, and some Star Trek and Knight Rider. Sundar Pichai hits the stage and begins the presentation by talking about what Google has been doing over the last few weeks, and he makes a quip about an upcoming Liverpool soccer match. He talks about this year’s I/O app, which uses augmented reality to help visitors get around the venue. This augmented reality navigation is also a new feature in Google Maps.

Pichai talks briefly about all of Google’s useful services, including Google Photos, Google Maps, and Google Assistant. He then talks about products that billions of people use all around the world, with an emphasis on Google Search and Google News. The Full Coverage feature in Google News is also heading to Google Search, including a full timeline of events and news from a variety of sources. Google is also indexing podcasts in Google Search, and you can listen to them directly from the search results.


Aparna Chennapragada, Google Search, Camera, and augmented reality

Read our coverage of augmented reality models coming to Google Search!

Aparna enters the stage to talk about augmented reality and the camera in Google Search. The first new Search feature lets you view 3D models directly in Google Search results and use augmented reality to place those objects in your camera view. You can even do some neat stuff like look up shoes and see how they go with your outfit. The 3D models also appear to scale to real-world size alongside whatever else is in the camera view. The demo put a great white shark on stage, and it’s very impressive.

Google is marrying augmented reality to both camera and Search over the next year.

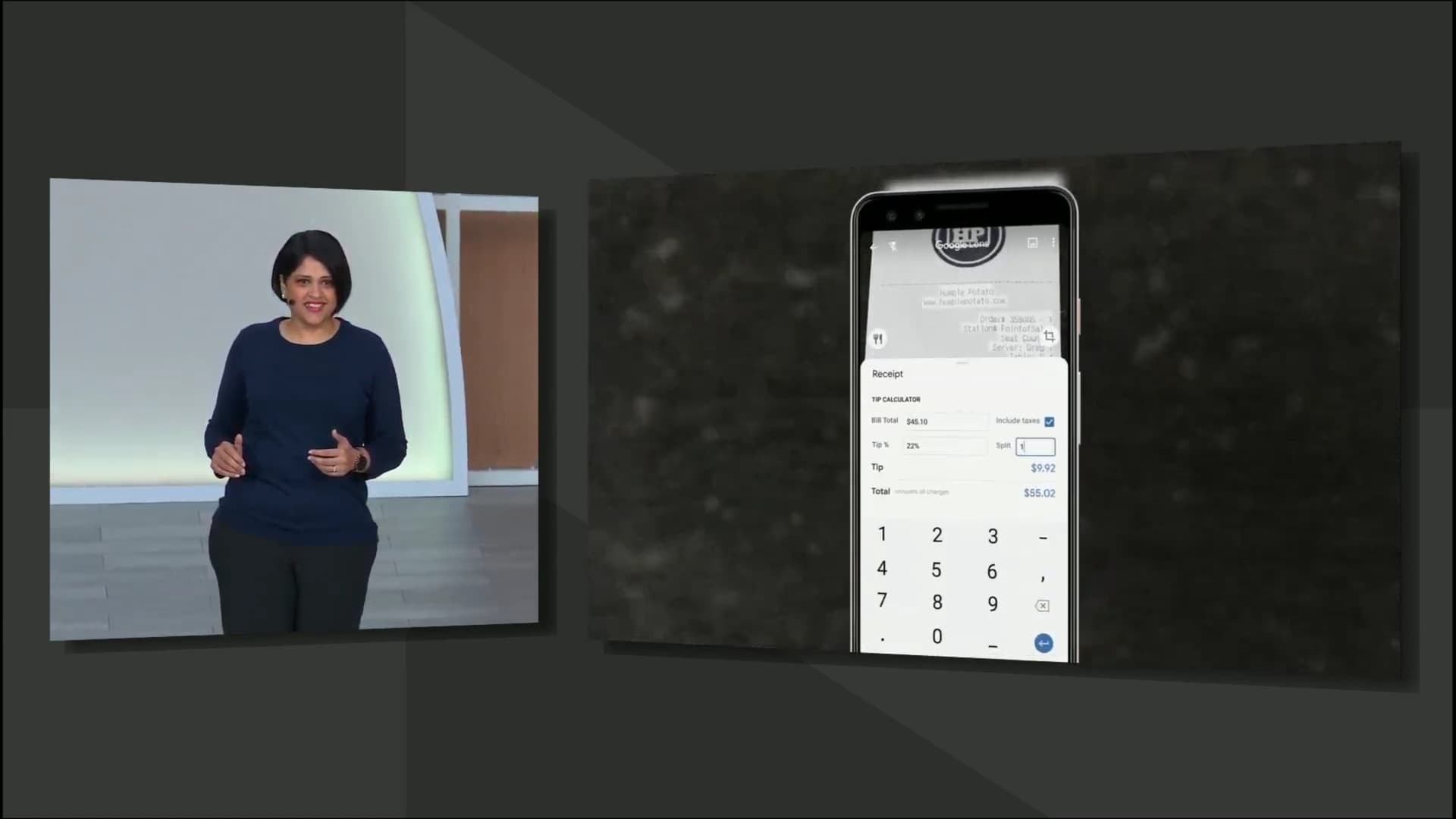

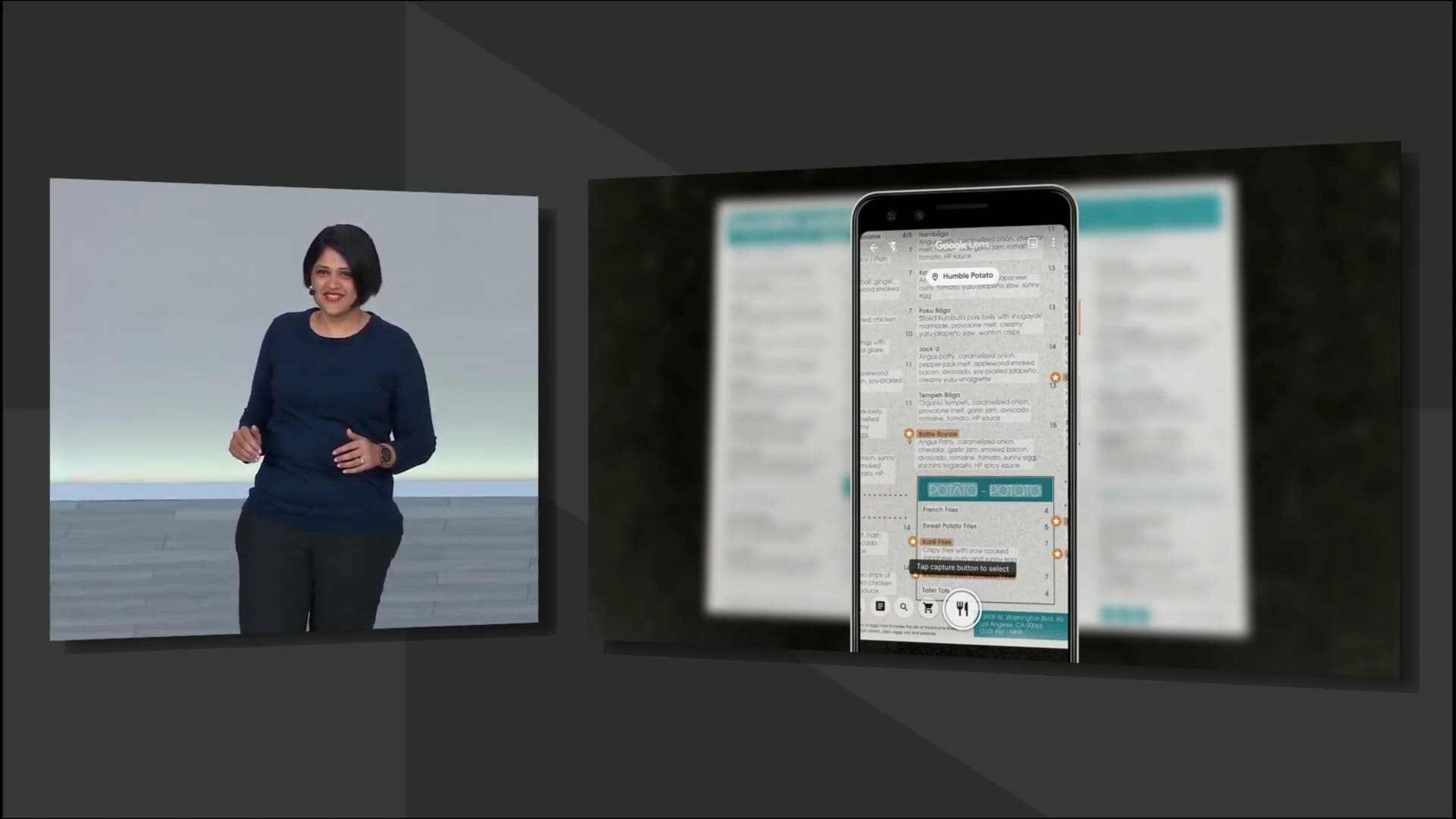

Aparna moves on to Google Lens, available on most newer Android phones these days. It’s built into Google Photos, Assistant, and Camera. Over one billion people have used Lens already. Lens can now work natively with the camera and, using data from Google Maps, highlight things like the popular dishes on a restaurant menu without the user doing anything. Lens can also calculate the tip and split the total on a restaurant receipt in real time from the camera app with little user input. Google is partnering with many companies to improve these visual experiences.

Finally, Google is integrating Google Translate and the camera into the Google Search bar to read signs out loud to you in your native language, or translate them in real time like you can already do in the Google Translate app. Aparna throws it to a video clip of an Indian woman who never received a formal education using the app to navigate everyday life. This new feature works on phones that cost as little as $35 and takes up very little space, so it can reach as many places as possible.

Pichai retakes the stage

Sundar takes the stage again and starts talking about using Google Duplex and Google Search to make reservations. You can ask Assistant to make a reservation for you and, well, it does. The demo on stage was very impressive. This works with Calendar, Gmail, Assistant, and more. The new feature is called Duplex on the web, and Google will have more information about it later this year.

Sundar also announced that Google’s voice models shrank from 100GB to 0.5GB, making them small enough to store directly on the phone. This should help make Assistant faster. Pichai throws it to Scott Huffman for more.

Scott Huffman, Google Assistant, and voice models

Scott comes out and talks about making Google Assistant faster than ever. Another Googler, Maggie, then rattles off a couple dozen commands in a row, and Assistant handles them all with aplomb to show off how much faster it can get. She then demos Google Assistant working without the hotword: she uses her voice to reply to a text, find a photo of an animal at Yellowstone, and send that picture in her reply. She proceeds to use Assistant to find a flight time and send that information by text as well. Everything was done by voice, with no touch input whatsoever. It’s very impressive to watch Assistant tell when Maggie was dictating and when she was giving Google a command.

Google Assistant is about to get a lot faster, easier to use, and more powerful.

Scott also announced Picks for you, a new Google Home feature that personalizes your results based on things Assistant has helped you with before. This includes things like directions, recipes, and other areas where your results may differ because of your personal preferences. A related feature, Personal References, lets you ask Google what the weather is like at your mom’s house, and Google will know which place you mean, the traffic between you and that place, and the weather there. Google Assistant will just get it.

Finally, Scott touches on improvements to Google products in the car, including easy commands for music, Maps, and more. Oh, and Google Home devices can now stop alarms when you simply say “stop.” Scott concludes his segment with a fun little montage of people using Google Assistant.

Sundar returns again!

Sundar returns and reiterates Google’s goal of building a more helpful Google for everyone. He also talks about machine learning and AI, with a focus on keeping them from inheriting human biases. Google is working on a research technique called TCAV (Testing with Concept Activation Vectors) that shows which concepts an AI model relies on to make its determinations. Google wants to use this to remove bias and help people while using AI technology.

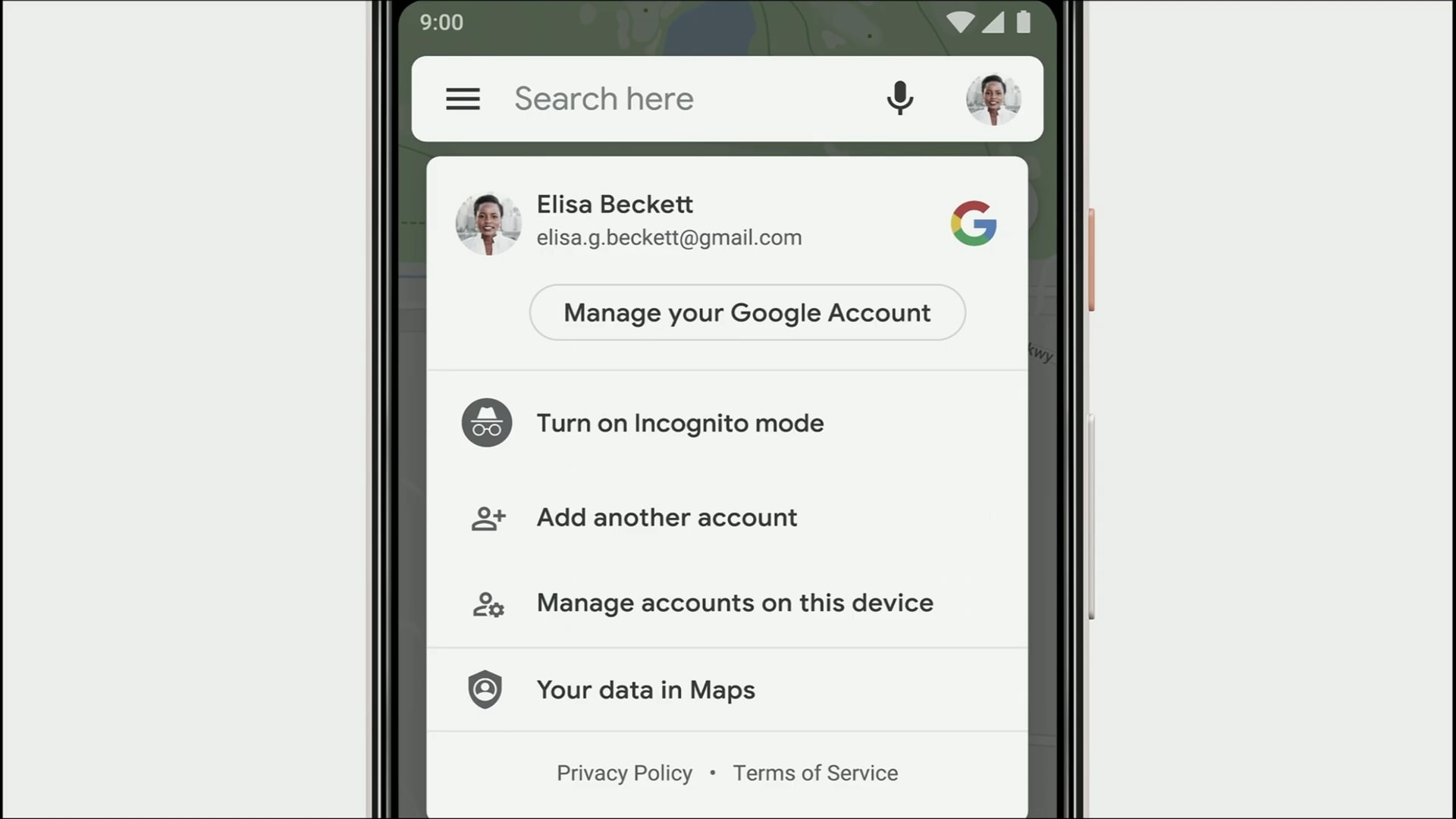

Pichai moves on to user security with a timeline of Google’s privacy and security features, including Incognito Mode and many other enhancements over the years. Your security settings will soon be even easier to access through Google Chrome, via your profile picture in the top right corner. A new feature launching today (May 7th, 2019) will let you automatically delete old data that Google has collected, on a rolling basis. In addition, Incognito Mode will soon come to Maps so you can search for stuff without it being saved to your account. Privacy Key is another feature Google launched today.

Google continues to improve its security and privacy features with easier access.

Federated Learning is another new thing Google has been doing. It trains models on your device using what you do, uploads only the resulting model updates (not your raw data) to Google servers, combines them with updates from everyone else, and then sends the improved model back down for smarter products. Pichai used Gboard as an example. Google is also focusing on accessibility to help people with disabilities. Pichai talks about Live Transcribe and other accessibility apps Google has released over the last few months.

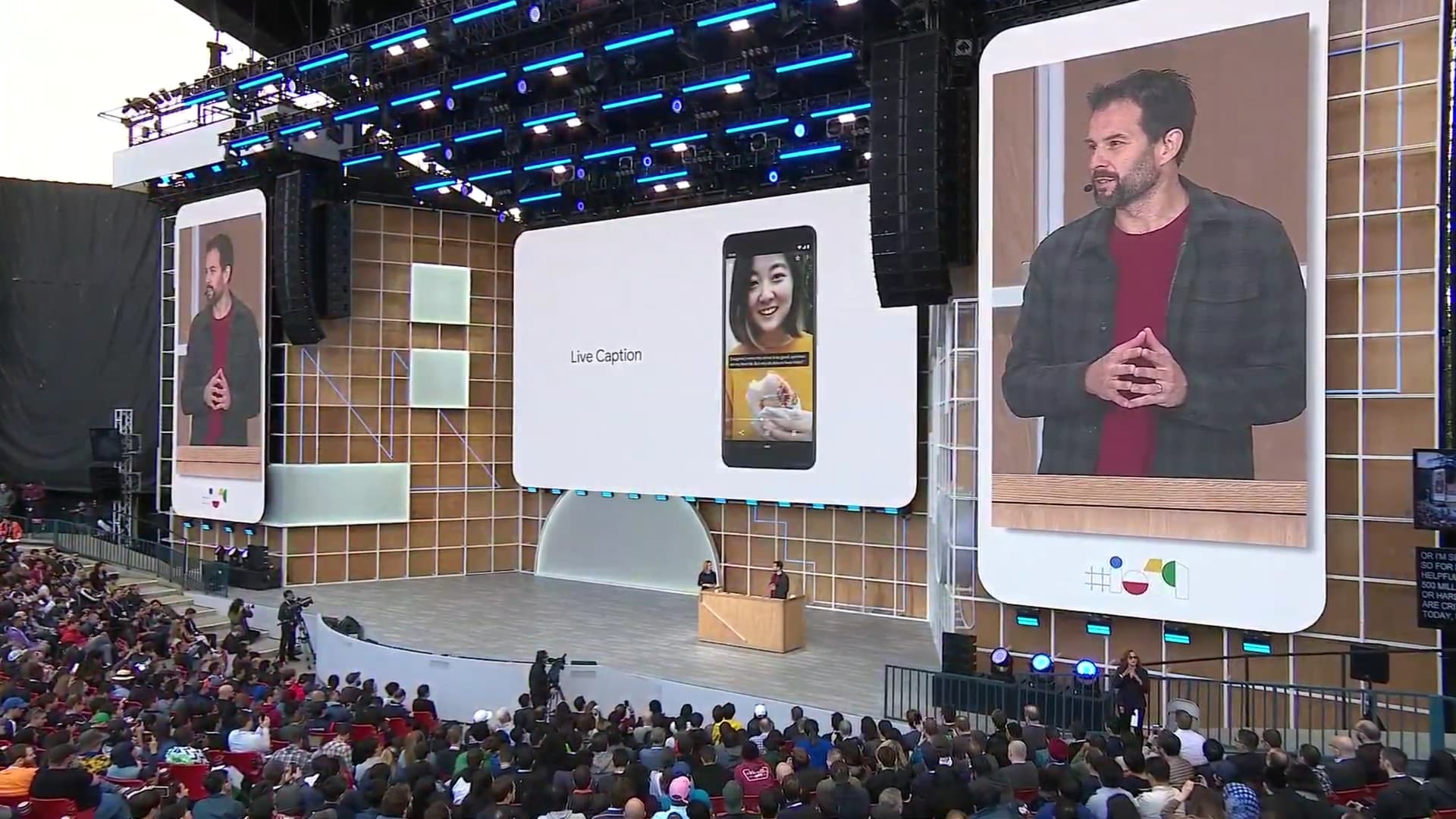

Live Caption is another new feature that captions audio and video on your phone for people who are deaf or hard of hearing. Google also wants to combine this technology with Duplex, Smart Reply, and Smart Compose in a new feature called Live Relay, which helps people who are deaf or can’t speak make phone calls more easily. Sundar ends this part of the presentation with Project Euphonia, showing how Google creates speech models for people who have trouble speaking due to deafness, stroke, or other conditions.

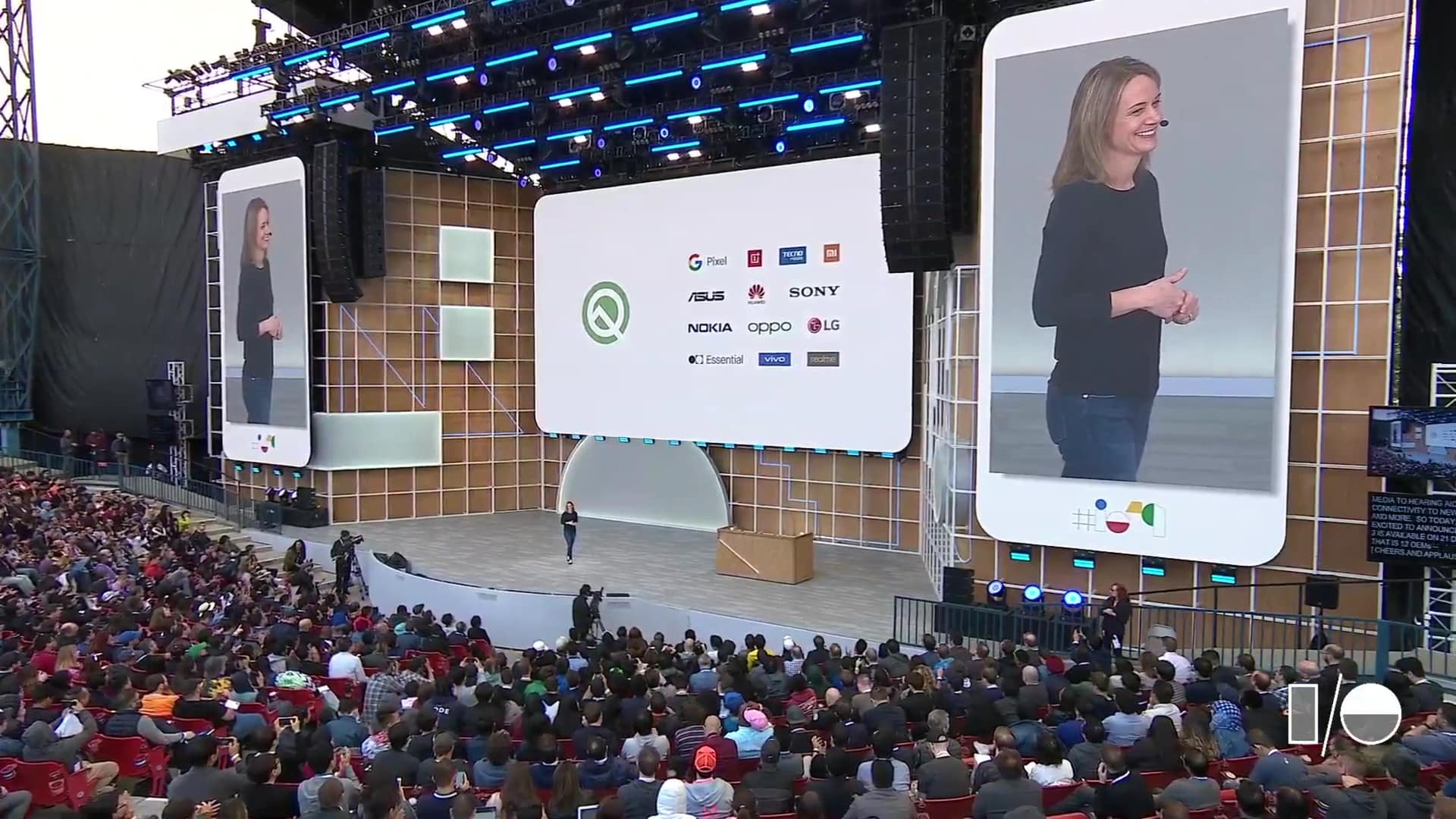

Stephanie Cuthbertson, Android, mobile OS innovation

Stephanie starts her presentation by saying that there are over 2.5 billion active Android devices right now. She then talks about foldable phones. Android Q will support foldables natively to help OEMs make better ones. That includes app continuity, a feature seen on the Samsung Galaxy Fold. Android Q will also natively support 5G. Stephanie brings Tristan to the stage to talk about Live Caption on Android Q. He demos Live Caption and it works pretty well, and he did it in airplane mode to show it works offline. The whole live speech model runs on the device in just 80MB, down from 2GB.

Stephanie talks about Smart Reply, an older feature with some new tricks in Android Q. It now works in all messaging apps on Android, and it suggests actions as well as replies to save you more time. A full, real dark mode is also coming to Android Q, finally! It’s a true black theme for you OLED folks out there, at least according to the screenshots. However, the central focus of Android Q is security and privacy. Stephanie humblebrags that Android scored top marks in 26 out of 30 tests for malware protection and security.

Android Q features a whole settings menu for privacy, which makes these things much easier to control on your phone. Android Q also alerts you when apps use your location and shows how you’re sharing location data. Another new feature is the ability to apply security updates without rebooting the device. It should work just like updating an app. That is very exciting for phones from OEMs that don’t send security updates often.

Stephanie pivots to distractions. A new feature in Android Q is Focus Mode: you can disable distracting apps, and they won’t so much as send a notification. Focus Mode will also come to Android Pie this autumn. In addition, Android Q has native family controls, including Family Link, which lets you set daily screen time limits, approve app installs, and even set bedtimes. The Android Q beta is available on 21 devices, including all of the Pixels.

Rick Osterloh, AI, software, and hardware

Rick enters the stage to talk about some more developer-friendly topics. He starts his presentation with a video clip about Google Home. Rick talks about bringing all of the Home products together under the Nest name, which includes all of Nest’s current products along with a bunch of Home stuff. Rick announces the Nest Hub as well as the Nest Hub Max. The Nest Hub Max’s camera can be used as a security camera, like a Nest Cam, and it also works with Google Duo.

The camera also has a green indicator light, as well as a switch on the back of the device that completely turns off the camera for your security and privacy. We’ll have more details in our hands-on. The Nest Hub Max also uses machine learning to recognize hand gestures for additional controls, much like the LG G8. It arrives this summer. The Nest Hub will also be available in 12 new regions. Check out our hands-on with the Nest Hub Max!

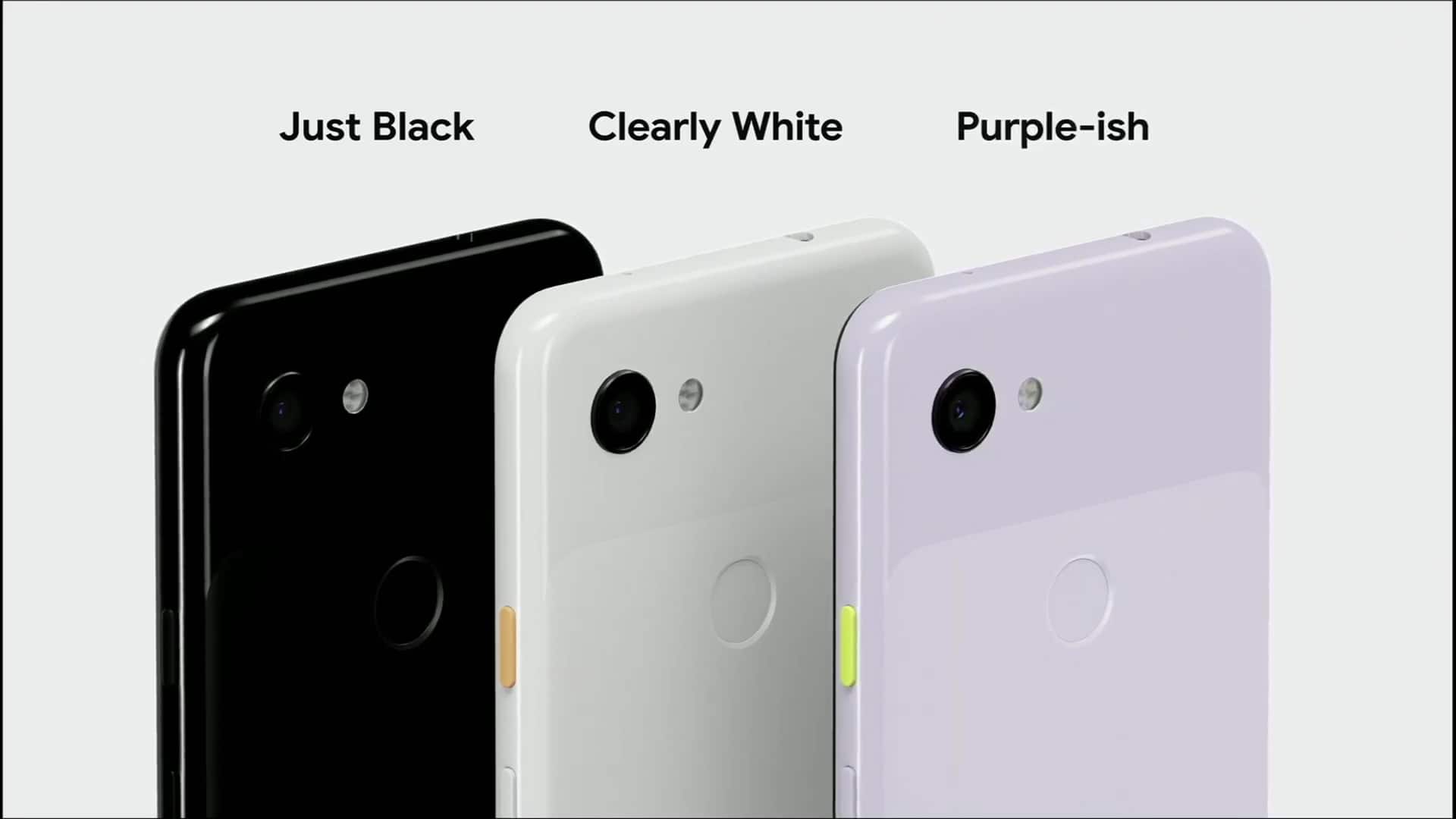

Sabrina Ellis, Pixel 3A and 3A XL

Rick throws it to Sabrina Ellis to talk about the two new Pixel devices. The Pixel 3A and 3A XL start at just $399. They come in three colors, including a new one, Purple-ish. These devices also come with a 3.5mm headphone jack. Sabrina quips that the new Pixel 3A can deliver high-quality photos without the expensive hardware. It’s basically just like the more expensive Pixels, just with a less powerful processor.

Starting today, Pixel devices will be able to use the new AR mode in Google Maps. Sabrina pivots and talks about all of the Android Pie, Android Q, and Pixel features that the Pixel 3A and 3A XL also have. They will be available in a variety of countries, and they will not be Verizon exclusives in the U.S. They are available starting today. However, they lack the free original-quality storage upgrade for Google Photos, so users are restricted to the high-quality option that everyone else gets.

Jeff Dean, AI (again)

Jeff takes the stage to talk about AI. This part of the keynote is a bit more technical, covering the language fluency of computers. This includes Bidirectional Encoder Representations from Transformers (BERT), a model that helps computers understand words in context. Google trains the model using a fun, high-tech version of Mad Libs, in which it guesses hidden words from the surrounding text. Jeff then talks about TensorFlow and updates to the platform over the last year. He throws it to Lily Peng for more information.

Lily Peng, medical technology

Lily Peng takes the stage to talk about Google’s machine learning models for medical uses, including areas like vision, diabetes, and oncology. She goes on to talk about using machine learning to read CT scans for better detection of malignant lesions in lung cancer, with surprisingly good success. It’s still in the early stages, though. She throws it back to Jeff.

Back to Jeff for more AI and machine learning

Jeff returns to the stage to talk about research, engineering, and building the ecosystem. He talks about flood forecasting models and how they’ll help people in India avoid flooding this year. It’s a beautiful marriage of thousands of satellite photos, machine learning, neural networks, and physics. Google wants to improve these models even more so that everybody knows when a place is going to flood.

Google has partnered with 20 organizations to work on some of the world’s biggest problems. That includes antimicrobial imaging, speeding up emergency response times, and high-resolution monitoring networks for improving air quality. Those organizations will also get free funding. Jeff then closes out the Google I/O 2019 keynote with some inspiring words about the next decade.

Wrap up

What was your favorite part of the Google I/O 2019 keynote? Tell us in the comments! Also check out our podcast on the topic, available in your favorite podcatcher!