Google’s impressive Live Caption will add subtitles to any audio on your phone

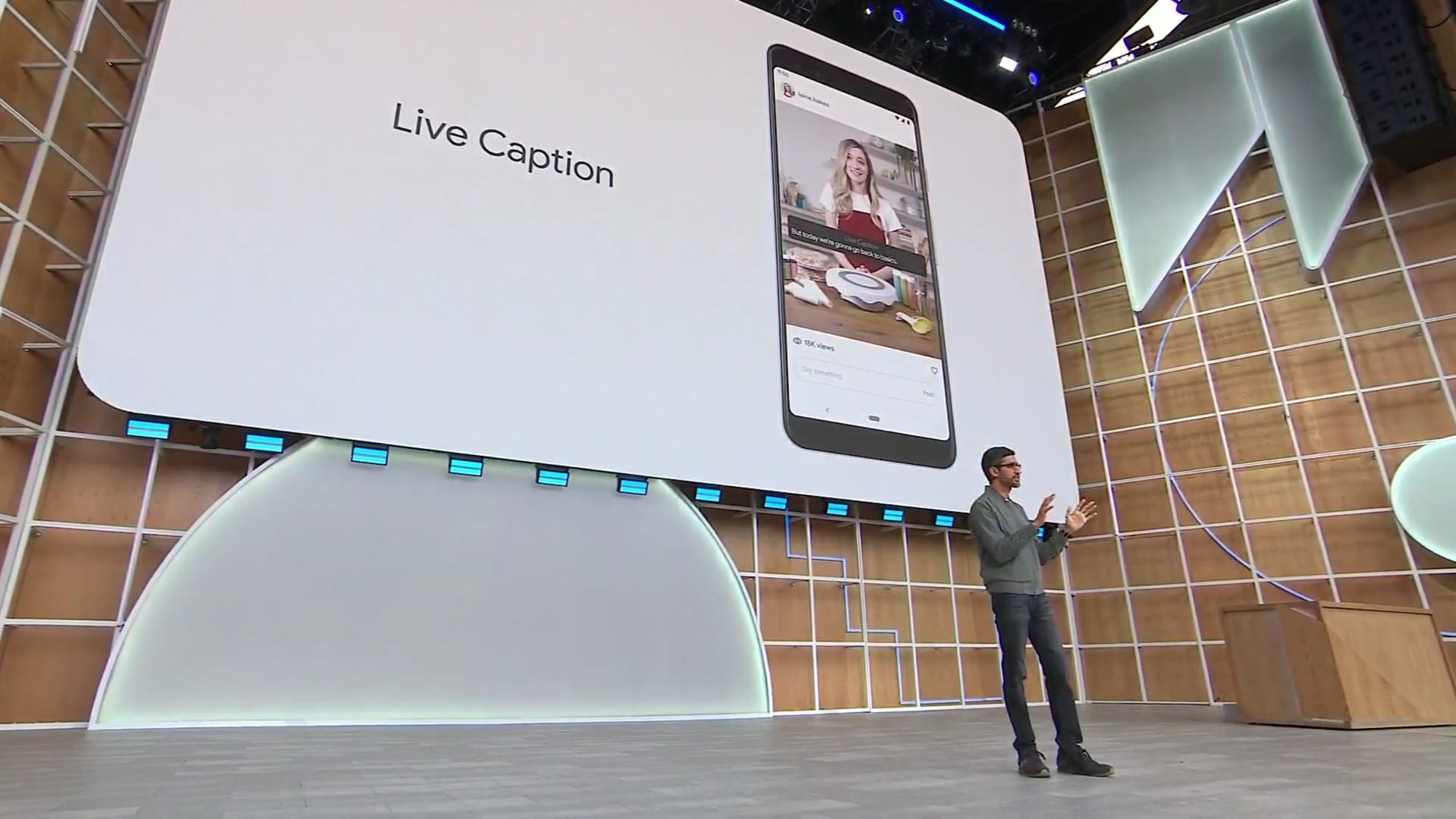

One of the big themes of the Google I/O 2019 opening keynote was inclusivity. A new feature in Android Q aims to improve inclusivity for people who are deaf or hard of hearing by offering instant captions for just about any audio or video played on a phone.

Called Live Caption, the feature employs AI to transcribe speech played back on a smartphone into fast, accurate captions. The beauty of it is that the feature works with any app, regardless of whether it plays audio or video, and regardless of whether the content is streamed from a server, played from local storage, or spoken live by a person.

Live Caption works with podcasts, videos, and other audio, as well as video chat apps like Duo. The demo we saw on stage at the Google I/O keynote looked smooth and impressive, though real-world results may vary.

Live Caption will be accessible with one tap: users will be able to activate it by tapping a new icon that appears when they change the system volume. Everything is processed locally, meaning you won’t need to worry about third parties listening in on your conversations.

Captions are shown in a black window overlaid on top of the normal interface. The captions are not saved for later, so you will only see them when the corresponding audio is played.

While deaf people may benefit the most from this new feature, Live Caption has the potential to be useful for lots of other users in a variety of situations. It even works when the volume is turned down to zero, allowing users to consume content without disturbing anyone around them.

Live Caption is a new accessibility feature baked into Android Q. You’ll need to enable it in the settings before using it, and it’s not yet clear whether all OEMs will include the feature on their Android Q devices.

Live Relay

While the ability to watch videos on mute is pretty cool, it’s also trivial in comparison to the life-changing effect live captioning technology could have for some people. Google showed how Live Caption, coupled with the Smart Reply and Smart Compose features it debuted last year, can help people who can’t speak have conversations. The tech, called Live Relay, turns the other person’s speech into written text that deaf users can read and respond to. The user’s reply is then turned into a synthesized voice and relayed to the person at the other end of the line.

Project Euphonia

Taking things a step further, Google’s researchers are also looking for ways to train speech recognition models to understand non-standard speech, such as that of people who stutter, have had strokes, or have other impairments. The long-term goal is to make computers understand the millions of people who have speech impairments or can’t speak at all.

Google warned that there’s still a lot of work to be done in this quest to make technology work for literally everyone. CEO Sundar Pichai invited people with speech impairments to contribute speech samples that will help the company build more inclusive recognition technologies.

Stay tuned for more from Google I/O.
