Apple visionOS: Everything you need to know about Apple's OS for AR-VR headsets

The Apple Vision Pro is one of the most feature-rich AR-VR headsets aimed at consumers. It’s a standalone headset powered by the same M2 SoC that Apple uses in its MacBook Air and Mac Mini, coupled with a dedicated R1 chip that processes input from its many cameras and sensors. The hardware is impressive, and the software matches it. Meet visionOS, Apple’s dedicated OS for AR-VR headsets that helps the Vision Pro become the best version of itself.

What is visionOS?

visionOS is, according to Apple, the world’s first spatial operating system, one that blends digital content with physical space. It powers the Apple Vision Pro and everything it can do, just as iOS powers the experiences on the iPhone.

Apple claims it designed visionOS from the ground up to support the low-latency requirements of spatial computing. It also draws on decades of experience and innovation from developing macOS, iOS, and iPadOS. The result is a dedicated OS for the headset, built to take advantage of the space around the user.

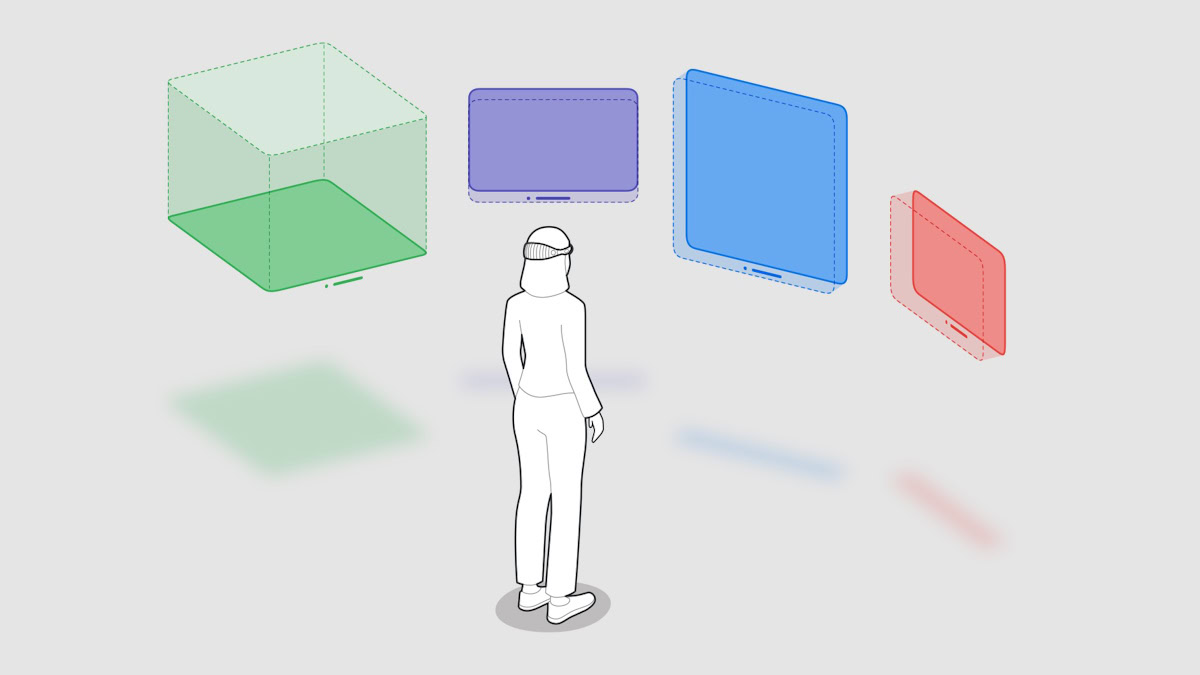

The three primary building blocks for spatial computing on visionOS are Windows, Volumes, and Spaces.

Windows are traditional app windows that hold familiar 2D controls and content. Volumes add depth, bringing 3D to the app itself (and not just its content) and providing experiences that can be viewed from any angle. Spaces are the environments in which apps exist.

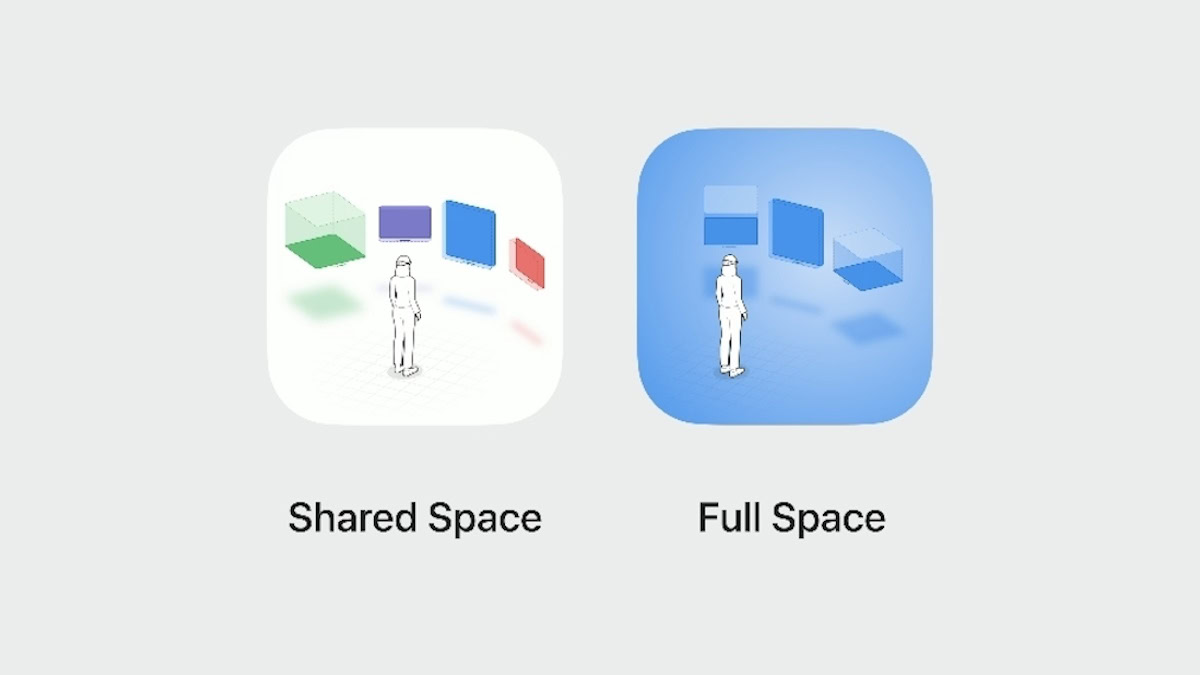

visionOS has two spaces for apps to exist in: a Shared Space (Augmented Reality with scope for multitasking) and a Full Space (Virtual Reality with absolute focus on a single app).

Shared Space is the default configuration for apps to launch in, but if needed, the app can open a dedicated Full Space for absolutely immersive content.
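
For developers, these building blocks correspond to scene types in SwiftUI. The following is a minimal sketch under stated assumptions, not Apple’s sample code: it assumes the visionOS SDK, and the app name, view names, and scene identifiers (`SpacesSketchApp`, `ContentView`, `"main"`, `"volume"`, `"immersive"`) are hypothetical placeholders chosen for illustration.

```swift
import SwiftUI

// Hypothetical app illustrating Windows, Volumes, and Spaces.
@main
struct SpacesSketchApp: App {
    var body: some Scene {
        // Window: a conventional 2D window, shown in the Shared Space by default.
        WindowGroup(id: "main") {
            ContentView()
        }

        // Volume: a window with depth that can be viewed from any angle.
        WindowGroup(id: "volume") {
            Text("3D content would go here")
        }
        .windowStyle(.volumetric)
        .defaultSize(width: 0.5, height: 0.5, depth: 0.5, in: .meters)

        // Full Space: a dedicated immersive scene that the app opens on request.
        ImmersiveSpace(id: "immersive") {
            Text("Immersive content would go here")
        }
    }
}

struct ContentView: View {
    // System-provided action for opening an ImmersiveSpace scene.
    @Environment(\.openImmersiveSpace) private var openImmersiveSpace

    var body: some View {
        Button("Enter Full Space") {
            Task {
                // The call reports whether the space opened; ignored in this sketch.
                _ = await openImmersiveSpace(id: "immersive")
            }
        }
    }
}
```

In this sketch the app launches into the Shared Space with its main window, and it moves into a Full Space only when the user taps the button, which mirrors the default-then-opt-in behavior described above.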

visionOS Features

The biggest challenge for visionOS was reimagining the user experience in an environment entirely outside Apple’s control: the user’s physical space. The interface could no longer be confined to a flat screen and had to work within the room around the user.

Apple has managed to pull this off. visionOS features a brand-new three-dimensional interface, unlike what we are used to seeing on our phones and laptops. Digital content looks and feels present in a user’s physical world.

The UX elements of visionOS respond dynamically to natural light. They also cast shadows, giving the user a sense of scale and distance. You can see the UI floating in front of you and judge how far away it is. This is important, as you need to interact with the digital elements of the UX without any haptic feedback.

Imagine how the user experience would be if you tried to touch your phone screen but could never hit the display and just continued to press your finger forward indefinitely through the display. Apple needed to avoid that, and it has managed to do so convincingly.

Shadows help depict distance and scale while the headset recognizes your fingers, their position, and speed of movement, avoiding situations where your fingers pass through the UI floating in front of you.

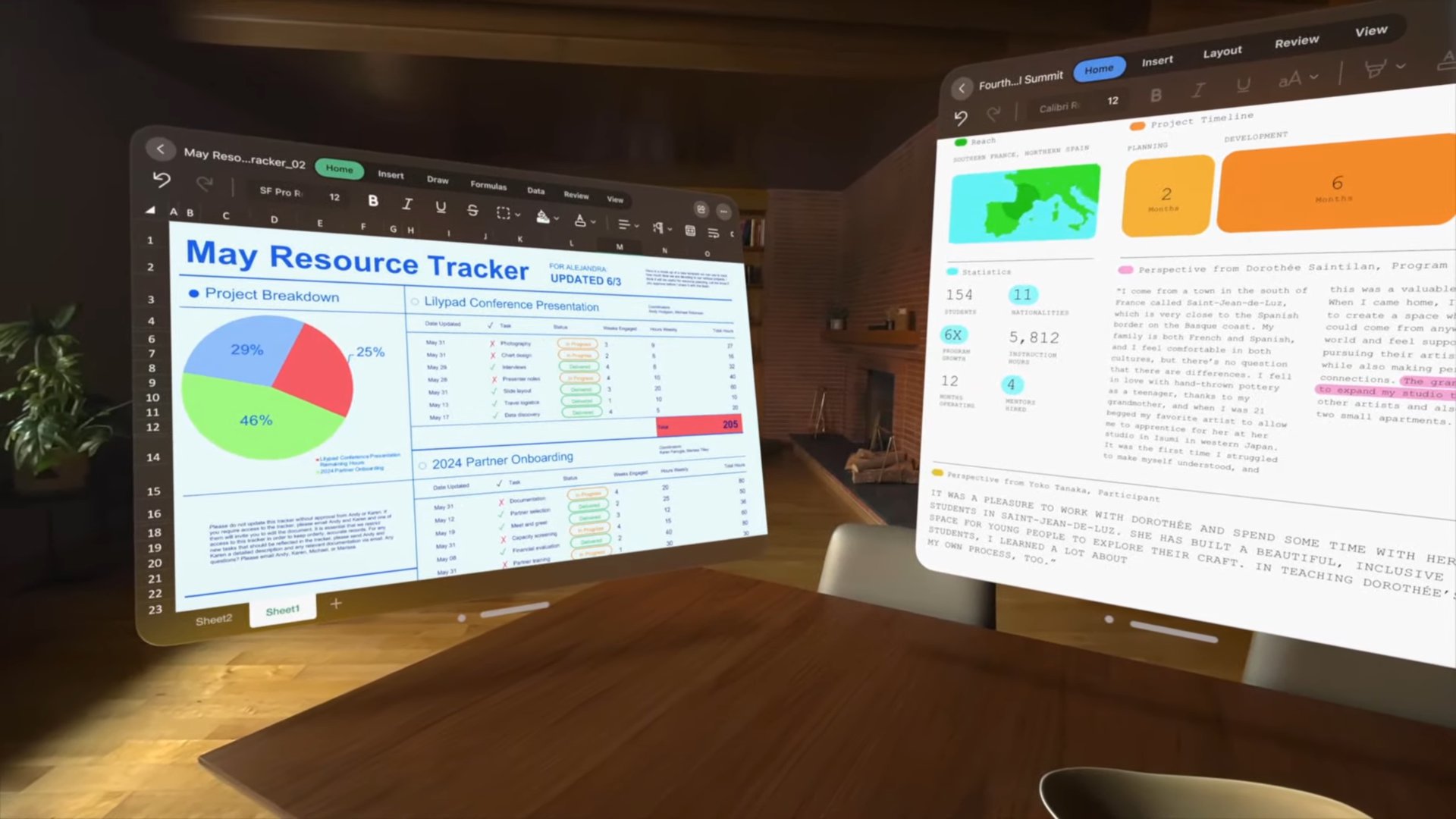

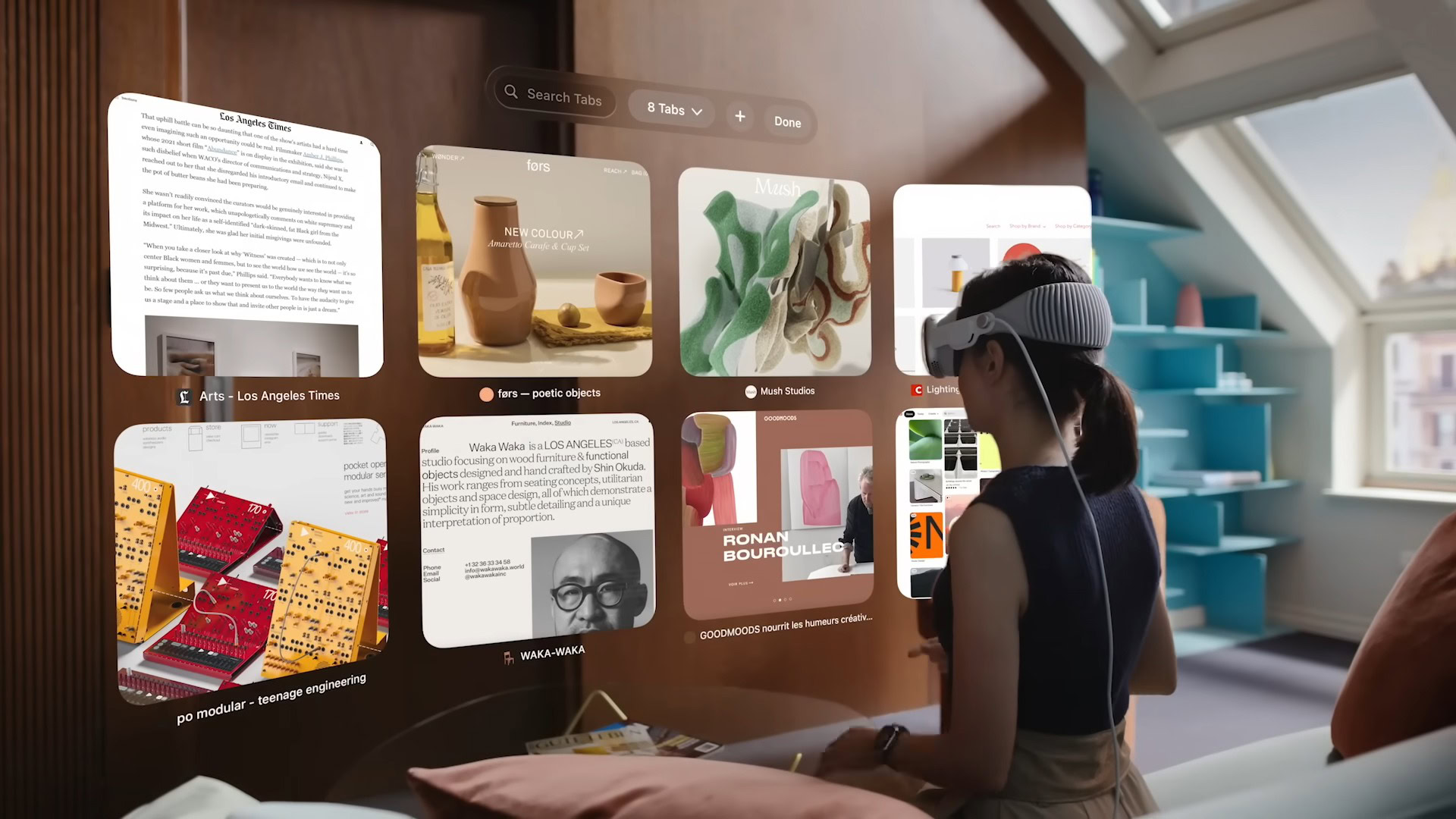

With the loss of physicality, visionOS gains an infinite canvas for your apps. You can place apps side by side at any scale, as far as you and your neck are willing to turn. There’s seemingly infinite screen real estate at play here, opening up new multitasking methods.

The Apple Vision Pro with visionOS supports Magic Keyboard and Magic Trackpad, so users can set up their ideal workspace and take advantage of the immense multitasking potential.

visionOS Controls

The Apple Vision Pro headset does not use dedicated controllers. That poses a unique challenge for visionOS, as controllers would have made input far simpler to design. So how do you navigate around visionOS?

Apple solves this by opting for an entirely new input system controlled by a combination of a person’s eyes, hands, and voice rather than by clunky handheld controllers that need plugging in or charging.

To point at something, you simply look at it: cameras inside the Apple Vision Pro track where your eyes are looking. This makes navigating the UI highly intuitive, as you do nothing more than look around. The UI elements react to your gaze, with icons enlarging and highlighting and text fields becoming selected where your gaze stops.

Don’t want to select something? Just look away. It doesn’t get simpler than this, and visionOS on the Apple Vision Pro makes it look deceptively easy. Furthermore, Apple promises that eye-tracking information is shared neither with the company itself nor with third-party apps or websites.

Sight alone solves only half the cursor problem: positioning. But what about clicks? How do you actually select something? On visionOS, you simply tap your fingers together.

The Apple Vision Pro has several cameras to track your hand and fingers for gestures. You don’t need to position your hand anywhere in particular, as you can operate the headset while resting your arms and shoulders in their natural state. To scroll, just flick your wrist up or down as needed.

Want to type out text? Don’t. visionOS allows you to dictate words by voice as soon as you enter a text field, so you don’t have to use a virtual keyboard if you don’t want to.

Combining eyes, hands, and voice, visionOS allows you to navigate the entire UX and ecosystem without feeling confused or out of place.

visionOS Apps

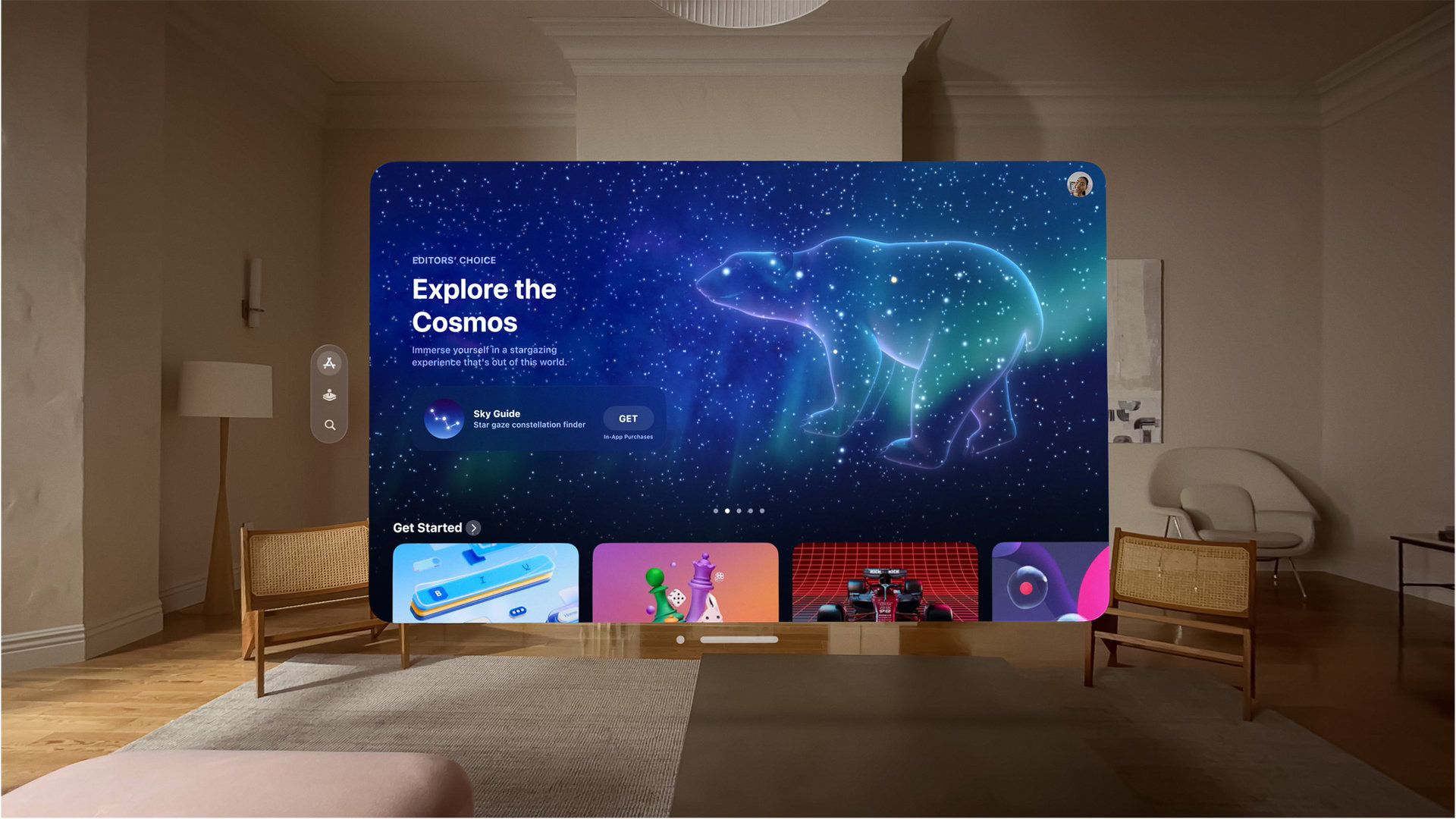

visionOS is a new operating system for a new product category, so it naturally comes with its own apps and App Store.

Apple has created a special version of the App Store for the headset, featuring apps from third-party developers who will be designing new experiences that take advantage of everything the Vision Pro and future Apple headsets have to offer.

Admittedly, since the tech is so nascent, the App Store will initially have few third-party apps built specifically for the headset. To compensate, the visionOS App Store will also showcase hundreds of thousands of iPhone and iPad apps that run well and automatically work with the new input system for Vision Pro.

For the Vision Pro’s launch, you can expect a set of Apple’s first-party apps on visionOS, along with tens or perhaps hundreds of native apps from high-profile third-party developers who have jumped onto the Apple Vision Pro bandwagon. In the first few months after release, you can expect the native app catalog to grow steadily as more developers get their hands on a Vision Pro headset to develop and test their apps.

Are you an app developer and want to develop for visionOS? Apple has some handy documentation and an SDK to help you get started.

visionOS Connectivity and Ecosystem

Apple’s visionOS forms part of the Apple software ecosystem, and if there’s one thing Apple does right, it is ecosystem play. So if you have other Apple devices you use regularly, you can be sure that the Apple Vision Pro with visionOS will fit right into your life.

Apple has detailed only a couple of connectivity and ecosystem features for visionOS so far. One, visionOS works seamlessly with Bluetooth accessories like the Magic Keyboard and the Magic Trackpad.

Two, MacBook users simply have to put on the Vision Pro and look at their Mac to expand its screen beyond the laptop’s physical display. With this, you can combine spatial computing and gaze input on the Vision Pro with your Mac workflow, alongside the mouse and keyboard input support from the laptop.

Looking ahead, and this is speculation on our part, we expect more Apple products to play well with Vision Pro and visionOS. You could theoretically expand the displays of the iPhone, iPad, and Apple Watch in the same way as the MacBook’s, or use any of these products for input, since visionOS is a complete OS on its own and the Vision Pro is a complete computer by itself.

You could perhaps use an Apple Watch for haptic feedback or an Apple Pencil to draw in the air in AR space. There’s a lot of potential here, and we bet we will see more as the technology matures.

FAQs

Is visionOS open source?

No, visionOS is not open source. Since this is an Apple product, it is very unlikely that this will change in the future.

Does visionOS need an iPhone or Mac to work?

No. visionOS runs standalone on the Apple Vision Pro headset and does not require an active connection to an iPhone or MacBook for regular use. However, you will need an iPhone for the initial setup of the Apple Vision Pro.

Can you sideload apps on visionOS?

Apple has not commented on the ability to sideload apps on visionOS. Developers will likely be able to sideload apps for testing on the Apple Vision Pro. However, it is extremely unlikely that Apple will allow regular consumers to sideload apps onto visionOS on their Vision Pro headset.