YouTube to block disturbing adult-themed videos designed to trick young children

YouTube is readying a number of policy and behind-the-scenes changes to stop the spread of disturbing videos aimed at young children. Under the new rules, any videos reported by users as showing “age-inappropriate” content will be hidden from under-18s and from viewers who haven’t signed in.

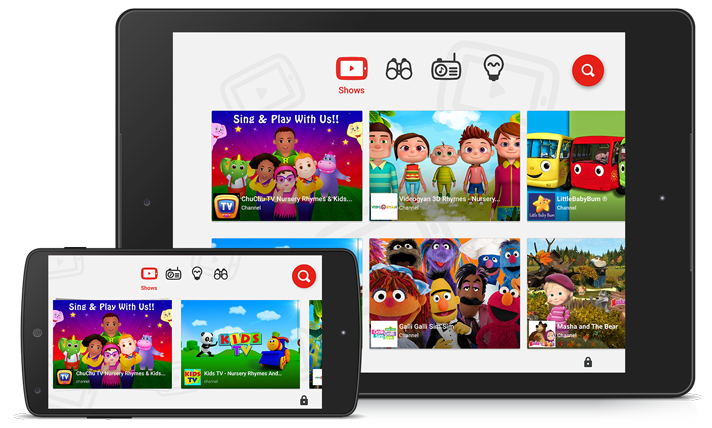

While the new policies will still rely on YouTube’s algorithms to do much of the heavy lifting, YouTube has stressed that any content directly flagged by users will also be assessed by a dedicated team. This will also impact the YouTube Kids app, as any videos found to violate the new rules will be automatically blocked from the child-friendly service.

The YouTube team announced the changes in the wake of a Medium blog post by writer James Bridle. The post highlighted unsettling videos aimed at youngsters that show characters like Peppa Pig, Frozen’s Elsa, and Marvel superheroes acting out violent, sometimes sexually suggestive themes.

“Someone or something or some combination of people and things is using YouTube to systematically frighten, traumatise, and abuse children, automatically and at scale,” Bridle wrote.

The post has been broadly cited in news reports and shared across social media, and is an essential read for any parents with young children who regularly watch YouTube. Despite the increased public pressure, YouTube claimed that its policy changes have been in the works for several months.

“Earlier this year, we updated our policies to make content featuring inappropriate use of family entertainment characters ineligible for monetisation,” said YouTube’s director of policy, Juniper Downs.

“We’re in the process of implementing a new policy that age restricts this content in the YouTube main app when flagged. Age-restricted content is automatically not allowed in YouTube Kids. The YouTube team is made up of parents who are committed to improving our apps and getting this right.”

While the policy changes seem like a step in the right direction, it’ll be interesting to see how effective they are, given that they rely heavily on users reporting the offending videos.

What do you think of the changes? Is YouTube doing enough to combat these strange videos? Let us know in the comments.
