8K TV explained: The script on television's next big upgrade

I wandered past hundreds of TVs earlier this year during CES, and came away impressed with what we, the TV-owning and -viewing public, have to look forward to. Let me tell you, it ain’t 8K.

While higher-resolution television sets are certainly on their way, technologies beyond the pixel count will have a broader impact on picture quality and the overall experience of kicking back to watch a game or movie. Most importantly, the 8K story needs a few more edits before it’s ready for viewing.

What is 8K TV?

The TV industry is chock-full of alphabet soup, with meaty morsels like 1080p, Ultra HD, and 8K floating around. For those who aren’t tech savvy, these terms can make your head spin once a salesperson starts rattling through them. Here’s a primer.

When DVDs first arrived about 20 years ago, most content and TV sets were capable of producing 480p resolution. The “480” here refers to the number of pixels from the top of the screen to the bottom. The term most commonly used to describe 480p is SD or Standard Definition.

| Resolution | Measurements (in pixels) | Pixel count |
|---|---|---|
| 480p (SD) | 640 x 480 | 307,200 |
| 720p (HD) | 1,280 x 720 | 921,600 |
| 1080p (Full HD) | 1,920 x 1,080 | 2,073,600 |
| 4K (Ultra HD) | 3,840 x 2,160 | 8,294,400 |
| 8K (Ultra HD) | 7,680 x 4,320 | 33,177,600 |

Then 720p arrived, not long after DVDs took off. With 1,280 by 720 pixels, 720p was the first High Definition or HD standard.

Full HD, or 1080p, quickly replaced 720p as the resolution used for television sets. Full HD includes 1,920 pixels from side to side and 1,080 up and down, or 2,073,600 total pixels. By way of comparison, 720p HD has just 921,600 total pixels, or fewer than half as many as Full HD. The majority of Blu-ray discs sold between 2006 and 2015 were Full HD.

The next jump was from Full HD to Ultra HD, or what is often called 4K. Ultra HD resolution contains 3,840 horizontal and 2,160 vertical pixels. Why 4K? Because 3.8K would be annoying, and movie cameras at the time shot images 4,096 pixels wide. The industry blended the names of the two for sanity’s sake. Since Ultra HD / 4K doubles the number of pixels both horizontally and vertically, it contains four times as many pixels as Full HD / 1080p, at an amazing 8,294,400.

This is where we are today. Most television sets larger than 40 inches are sold with 4K resolution. Inexpensive TV sets, or sets with screens smaller than 40 inches, are typically kept at 1080p. Only the smallest and cheapest TVs still ship at 720p. Ultra HD Blu-ray discs on sale today offer movies in 4K resolution to match the majority of TVs.

Leaping to 8K represents another quadrupling of the total number of pixels.

An 8K screen has 7,680 pixels across and 4,320 pixels up and down, making for a staggering total of 33,177,600 pixels. That’s 16 times the amount of information of a 1080p screen and four times the data of a 4K screen. It’s a lot of pixels.
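If you’d like to check the math yourself, here is a minimal Python sketch that multiplies out each resolution and compares the totals. The pixel dimensions come straight from the figures above; the function and variable names are just illustrative.

```python
# Pixel dimensions as quoted in this article: (width, height).
RESOLUTIONS = {
    "480p": (640, 480),
    "720p": (1280, 720),
    "1080p": (1920, 1080),
    "4K": (3840, 2160),
    "8K": (7680, 4320),
}


def pixel_count(name):
    """Total number of pixels for a named resolution."""
    width, height = RESOLUTIONS[name]
    return width * height


def pixel_ratio(bigger, smaller):
    """How many times more pixels `bigger` has than `smaller`."""
    return pixel_count(bigger) / pixel_count(smaller)


if __name__ == "__main__":
    for name in RESOLUTIONS:
        print(f"{name:>5}: {pixel_count(name):,} pixels")
    print("8K vs 4K:   ", pixel_ratio("8K", "4K"))
    print("8K vs 1080p:", pixel_ratio("8K", "1080p"))
```

Doubling both the width and the height is what produces each fourfold jump, which is why two doublings in a row (1080p to 4K to 8K) land at 16 times the pixels.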

Can we see those 33 million pixels?

It depends on how close you sit to your TV. The human eye can only perceive so much detail, and after a while, you reach a point of diminishing returns.

Let’s look at some numbers based on a 65-inch TV. At 480p, you can see all the available detail on the screen from as far away as 19 feet. The distance drops to 13 feet from a 720p TV and 8 feet from a 1080p TV. This means people who sit 8 feet from their 1080p HDTV (or closer!) can see all the detail created by the TV’s 2,073,600 pixels.

If you upgrade to 4K, then you’d have to sit 4 feet from the set (or closer!) to perceive all the available detail on the screen.

For 8K, shuffle 2 feet or closer to see all the detail. The numbers don’t change all that much if you go with a bigger screen. A 100-inch TV, for example, would still require you to sit 6 feet or closer to see all the detail at 4K, and 3 feet or closer to see all the detail at 8K resolution.
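For the curious, distances like these can be approximated with a bit of trigonometry. The sketch below is not necessarily how the figures above were derived. It assumes a 16:9 screen and a viewer with roughly 20/20 vision, which is commonly taken to mean the eye can resolve detail about one arcminute (1/60 of a degree) across. Both of those are my assumptions, and the function name is made up for illustration.

```python
import math

# Assumption: the eye resolves detail down to about 1 arcminute (20/20 vision).
ARCMINUTE_RAD = math.radians(1 / 60)


def max_useful_distance_ft(diagonal_in, vertical_pixels, aspect=(16, 9)):
    """Farthest distance, in feet, at which one pixel still spans 1 arcminute.

    Sit farther back than this and neighboring pixels blur together,
    so the extra resolution is no longer visible.
    """
    aspect_w, aspect_h = aspect
    # Screen height from the diagonal, using the aspect-ratio triangle.
    height_in = diagonal_in * aspect_h / math.hypot(aspect_w, aspect_h)
    pixel_pitch_in = height_in / vertical_pixels
    distance_in = pixel_pitch_in / math.tan(ARCMINUTE_RAD)
    return distance_in / 12


if __name__ == "__main__":
    for label, rows in [("480p", 480), ("720p", 720), ("1080p", 1080),
                        ("4K", 2160), ("8K", 4320)]:
        print(f'65" {label:>5}: {max_useful_distance_ft(65, rows):.1f} ft')
```

Under those assumptions the results land close to the distances quoted above, and they show why screen size helps less than you’d hope: the distance scales only in proportion to the diagonal, while each step up in resolution cuts it in half.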

Most people can't see the difference between 1080p and 4K, let alone between 4K and 8K.

I don’t know about you, but I like to watch TV from my couch a comfortable distance from the TV. Sitting on the floor with my face pressed against the screen? Not so much.

The bottom line here is that from a normal viewing distance most people can barely tell the visual difference in resolution between 1080p and 4K, to say nothing of the difference between 4K and 8K.

Is 8K content available?

The answer is pretty much no. Hell, there’s hardly enough 4K content available. It will be years before 8K content is plentiful enough to warrant seeking out an 8K TV. Here’s why.

Three core requirements must be met to get 8K content to your eyeballs. First, the original movie, show, or game itself needs to be recorded in 8K; second, that content must be transmitted or transported in 8K; finally, it must be replayed in 8K on a capable set.

Few cameras are able to capture 8K content.

The majority of cable and broadcast television content today is shown in Full HD / 1080p resolution. Some 4K TVs will upconvert that signal from Full HD to Ultra HD to improve the experience, but the source signal is still just Full HD. The upconverting process on modern TVs is fairly good and can make 1080p content look sharp on a 4K TV set. Some TV makers point to upconverting as a stop-gap as 4K content plays catch-up.

Upscaling will apply to 8K, too. Sony’s 8K TV set can upscale 720p signals to 8K using raw processing power and machine learning. Sony claims its set can make most any source content look good when upscaled to 8K. Whether or not it can has yet to be proven. This is something all 8K TV sets will need to be good at from the get-go.

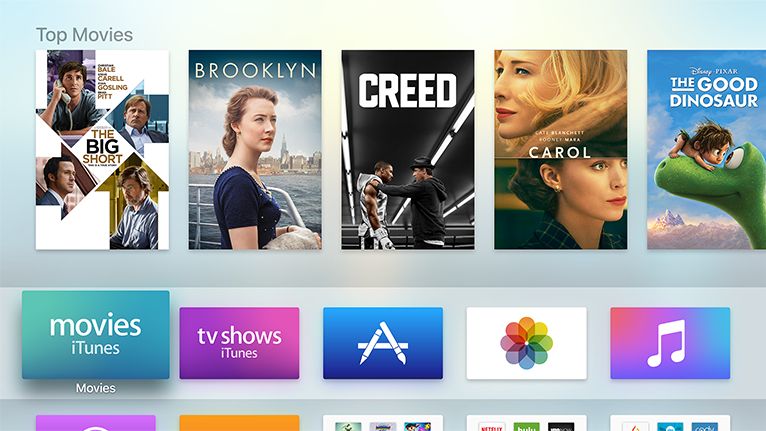

A quick check of cable providers in the U.S., including Comcast/Xfinity, AT&T/Spectrum, and Verizon FiOS, shows that each offers minimal 4K content. If you read the fine print, you’ll learn that 4K content is limited to programming via Netflix, YouTube UHD, and select sporting/live events. That’s it, at least from your TV service provider. A handful of online streaming video services do have movies and shows in 4K, including Apple and Google.

Today, very few cameras are able to capture 8K content. Red, the company behind the Hydrogen One phone, and several other camera makers including ASTROdesign, Hitachi, and Panasonic do have a few on the market, but they cost tens of thousands of dollars. These are strictly for movie and TV studios. Even with source 8K content, however, you’d run into real roadblocks transmitting it.

The primary issue is size. An 8K camera captures a 33MP picture for every frame, and records at 60 frames per second. That’s a lot of data. Consider the size of a movie file. A Full HD movie generally falls between 3GB and 6GB, depending on the running time. A 4K movie has about four times the visual information of a Full HD movie, and an 8K movie would have four times the visual information of a 4K flick. An 8K movie file won’t necessarily be 16 times the size of a Full HD movie file, but it will be significantly bigger.
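To put “a lot of data” in rough numbers, here is a back-of-the-envelope Python sketch of the raw, uncompressed bit rate coming off the sensor. The 60 frames per second figure is from above. The 10 bits per color channel, three color channels, and the absence of any compression or chroma subsampling are my assumptions for illustration. Real broadcasts and streams are heavily compressed, so delivered bit rates are far lower than this.

```python
def raw_bitrate_gbps(width, height, fps=60, bits_per_channel=10, channels=3):
    """Uncompressed video bit rate in gigabits per second (1 Gbit = 1e9 bits).

    Assumes every pixel stores `channels` color values of `bits_per_channel`
    bits each, with no compression and no chroma subsampling.
    """
    bits_per_frame = width * height * channels * bits_per_channel
    return bits_per_frame * fps / 1e9


if __name__ == "__main__":
    for label, (w, h) in {"1080p": (1920, 1080),
                          "4K": (3840, 2160),
                          "8K": (7680, 4320)}.items():
        print(f"{label:>5}: {raw_bitrate_gbps(w, h):.2f} Gbit/s uncompressed")
```

The raw stream does scale by the full factor of 16 between 1080p and 8K. Finished movie files grow more slowly than that because video compression gets more efficient as resolution rises, which is why an 8K file won’t necessarily be 16 times the size.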

Most U.S. households don’t have the broadband speed or capacity to support the necessary bit rate for streaming 8K, and there are currently no 8K cable boxes.

How much do 8K TVs cost?

Too much.

Samsung made an 8K TV set available to U.S. consumers in late 2018. The 65-inch Samsung Q900 8K TV set starts at $5,000. The 85-inch model costs $15,000.

More sets are on the way from the likes of LG and Sony, but prices haven’t been announced and the TVs won’t arrive until later this year. Don’t expect them to be cheap.

The Samsung is more or less the only legit option right now and isn’t what I’d call affordable for most people.

HDR is where it’s at

Up at the top of this article I mentioned that plenty of TVs at CES impressed me. They were all 4K HDR TVs. The human eye may not be able to resolve 33 million pixels at 10 feet, but it can see the difference in color accuracy and contrast that HDR brings to the table.

HDR stands for high dynamic range and refers to the delta between the blackest blacks and the brightest whites produced by a display. The higher the contrast ratio, the more detail is defined in very dark and very bright regions of the picture.

Brightness is measured in nits. Standard, non-HDR TVs generally produce between 300 and 500 nits of brightness. HDR TVs produce a minimum of 1,000 nits, and high-end HDR TVs can manage up to 2,000 nits. Pure black is 0.0 nits and is something only LED and OLED TVs can achieve. Contrast is often expressed as a ratio, such as 1,000:1. The higher the ratio, the better the contrast.

Contrast is only half the HDR picture. The other is color. In order to earn the HDR rating, a TV set has to reproduce 10-bit color. This is huge. Most TVs are capable of 8-bit color, which supports up to 16.8 million color variations. Advancing to 10-bit quadruples the number of shades available for each of the red, green, and blue channels, which multiplies the total by 64, to more than one billion color variations. That’s quite a jump. This makes for far smoother transitions between light and dark regions of the picture.
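The color math is easy to verify. In this short Python sketch, the assumption is the usual one: each pixel mixes three channels (red, green, and blue), and the bit depth applies to each channel separately. The function names are illustrative.

```python
def levels_per_channel(bits):
    """Number of distinct shades one color channel can show at a given bit depth."""
    return 2 ** bits


def total_colors(bits, channels=3):
    """Total color combinations across all channels (red, green, blue by default)."""
    return levels_per_channel(bits) ** channels


if __name__ == "__main__":
    for bits in (8, 10):
        print(f"{bits}-bit: {levels_per_channel(bits):,} shades per channel, "
              f"{total_colors(bits):,} colors in total")
    print("Growth in total colors:", total_colors(10) // total_colors(8))
```

Each channel goes from 256 shades to 1,024, which is four times as many. Because the three channels multiply together, the total palette grows by four cubed, from about 16.8 million colors to a little over a billion.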

In terms of marketing, you’ll probably see Dolby Vision or HDR10 on TV spec sheets. Where Dolby Vision is proprietary and dynamic, HDR10 is an open standard and static. (Yes, there’s already HDR10+, but we’ll ignore that for now.) For all intents and purposes, Dolby Vision and HDR10 lead to about the same experience for your eyes, even if they come at it from different angles.

All the best TVs I’ve seen were 4K HDR TVs.

What do I buy?

If you’re in the market for a TV set right now, get a 4K model. Don’t spend $5,000 or more on an 8K TV. There’s no content, the TV sets cost way too much, you can’t see the difference visually, and the transmission standards may change between now and when 8K TVs truly take off.

Electronics retailers have a wide range of 4K TVs on their shelves and a surprising number of them cost less than $500. Adding HDR to the mix bumps up the price less than you’d think.

Find a 4K TV that is the size you want and fits your budget. It will be good for years to come.
