
Smartphones — not computers — are pushing the silicon industry forward

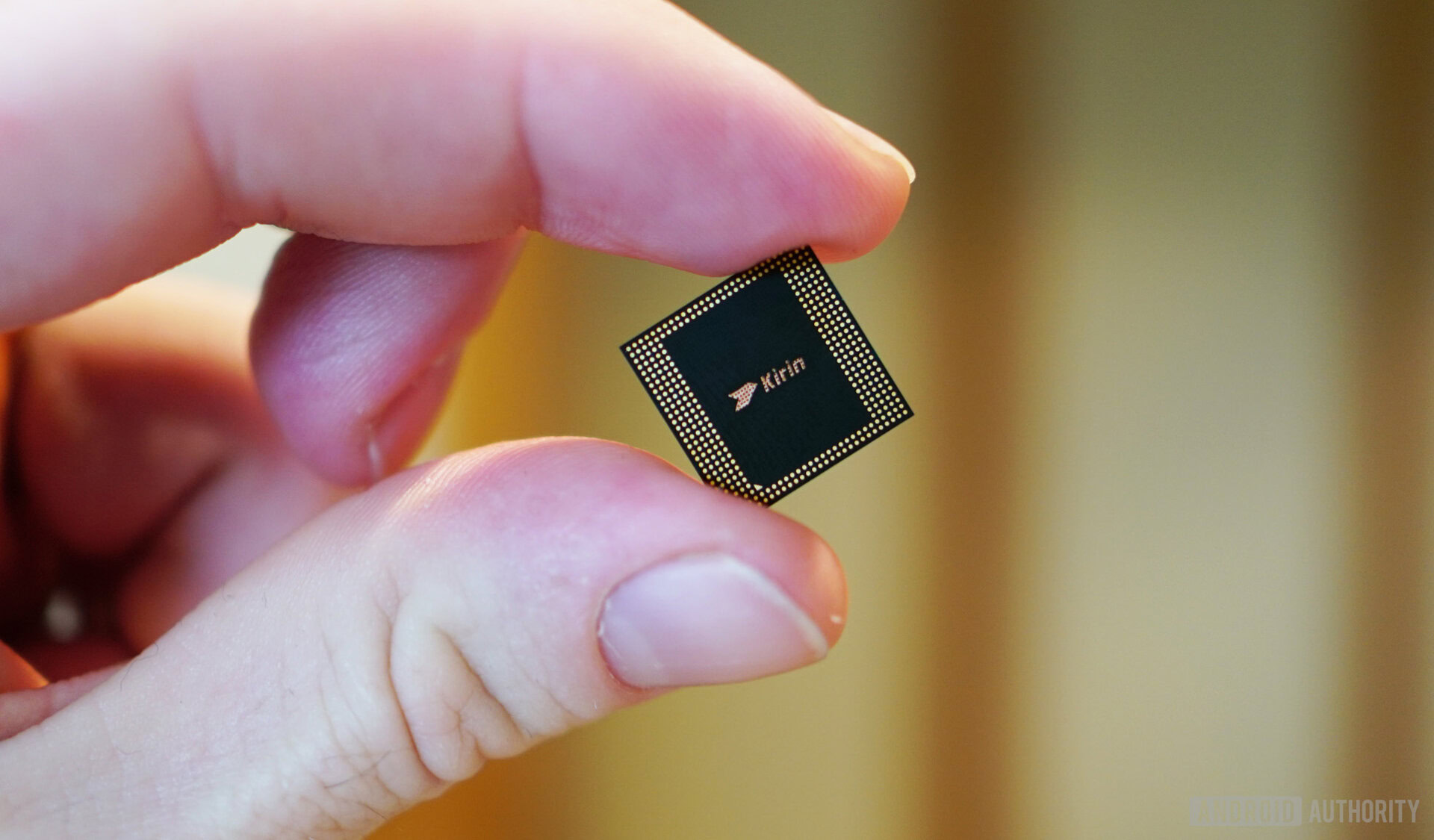

Mobile application processors achieved another major milestone this year. Both Apple and HUAWEI have their first 7nm products officially out in the open, and Qualcomm’s set to follow before the year’s end. Smartphone-class chips have been pushing the envelope for the past few years, beating legacy semiconductor companies like AMD and Intel to smaller, cutting-edge process nodes.

The mobile industry has undoubtedly been the driving force behind ubiquitous computing too, producing chips with ever-faster processors and integrated modems that are poised to challenge legacy companies in the low-end laptop space. Not only that, but the market has been quick to build cutting-edge machine learning hardware right into silicon, next to traditional CPU and GPU components.

Mobile chips have shot to the forefront of the silicon industry and there’s plenty more potential still left in the tank. Smaller process nodes, deeply integrated artificial intelligence, and major leaps in processing power are just some of what’s coming.

Fitting more into a single chip

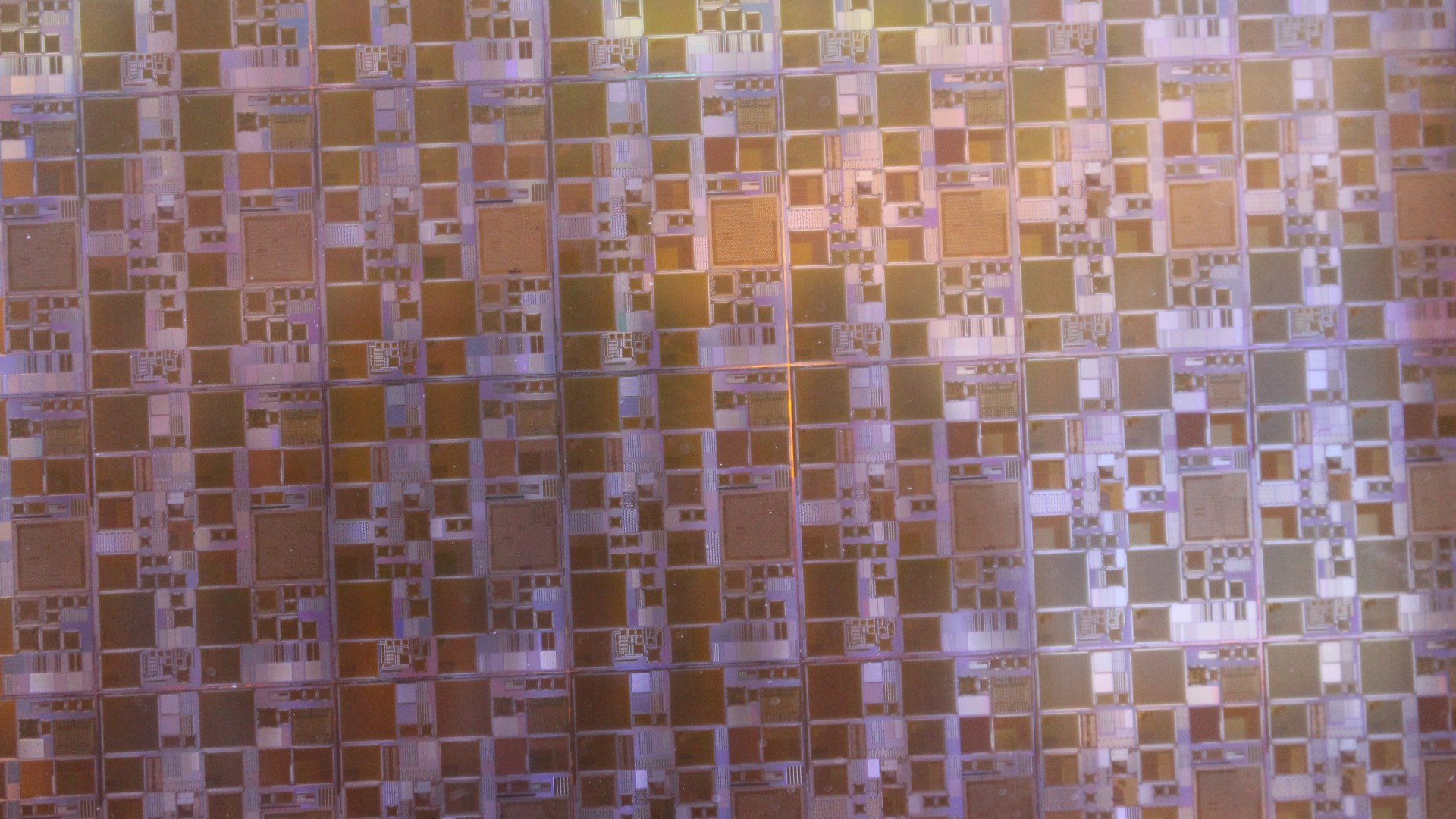

The heavily integrated system-on-a-chip (SoC) is the linchpin that makes smartphones possible. Combining processing and modem hardware into a single chip helped make early smartphones both cost- and power-efficient. The idea has since been pushed further: heterogeneous computing hands complex workloads to the most suitable components. Today’s cutting-edge smartphone processors contain not only CPUs, GPUs, and modems, but also image, video, display, and digital signal processors, all in a single package.

The idea is simple enough: include separate hardware blocks better suited to specific tasks. This not only boosts performance but also improves energy efficiency. Speaking at Google I/O 2018, John Hennessy talked about the benefits of a Domain Specific Architecture approach to computing and how to tackle the new challenges this way of thinking presents. Neural networking, or dedicated AI hardware, is the latest component to join the party, and it’s already having a big impact across a range of industry segments.
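To make the heterogeneous-computing idea concrete, here is a minimal Python sketch of a scheduler that sends each task to whichever hardware block is estimated to handle it most efficiently. The block names, task kinds, `Task`, `dispatch`, and every energy figure are hypothetical assumptions for illustration; real SoC schedulers are far more involved and also weigh latency, thermals, and current load.

```python
from dataclasses import dataclass

# Hypothetical relative energy cost per unit of work for each hardware block.
# Illustrative assumptions only, not measurements of any real SoC.
ENERGY_COST = {
    "cpu": {"general": 1.0, "image": 4.0, "neural": 8.0},
    "gpu": {"general": 3.0, "image": 1.5, "neural": 2.0},
    "isp": {"image": 0.5},    # image signal processor: camera work only
    "npu": {"neural": 0.25},  # neural processing unit: ML inference only
}


@dataclass
class Task:
    name: str
    kind: str    # "general", "image", or "neural"
    work: float  # abstract units of work


def dispatch(task: Task) -> tuple[str, float]:
    """Return the block with the lowest estimated energy for this task, plus that cost."""
    candidates = {
        block: costs[task.kind] * task.work
        for block, costs in ENERGY_COST.items()
        if task.kind in costs  # skip blocks that can't run this kind of work
    }
    best = min(candidates, key=candidates.get)
    return best, candidates[best]


if __name__ == "__main__":
    tasks = [
        Task("UI layout", "general", 3),
        Task("HDR photo", "image", 4),
        Task("face unlock", "neural", 10),
    ]
    for t in tasks:
        block, cost = dispatch(t)
        print(f"{t.name}: {block} (estimated cost {cost})")
```

In this toy model the CPU still wins general-purpose work, while camera and neural workloads land on the ISP and NPU, which is the same division of labor the SoC designs above aim for.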

Silicon density has reached the point where fitting multiple components onto a single small chip isn’t a problem. Highly heterogeneous and parallel computing is already here. The next bottlenecks are improving memory and interconnect bandwidths, refining the best architectures for the right workloads, and further improving power efficiency.

4G data, neural network-based security, and multi-day battery life present consumers with new value propositions over traditional PCs.

Leading in this way gives smartphone chips an opportunity to disrupt some traditional markets. NVIDIA’s Tegra has moved into gaming with the Nintendo Switch, and some 4G LTE-equipped laptops and 2-in-1s now use mobile chipsets in place of standard PC chipsets.

Arm predicts enough growth in the performance of its CPU architecture over the next couple of years to make it a viable competitor in the laptop space. Windows 10 on Arm still needs work to flesh out native software support and enterprise solutions, but it’s advancing enough for Qualcomm to invest in its first dedicated connected PC chip, the Snapdragon 850. The inclusion of 4G and 5G modems, neural network-based facial recognition for security, and multi-day battery life presents consumers with new and interesting value propositions over traditional PCs.

Specialized yet highly integrated computing isn’t a trend reserved for smartphones and 2-in-1s, though. The explosion in Bitcoin mining brought huge growth in highly specialized number-crunching ASICs. The autonomous vehicle space continues to draw CPU, graphics, and neural networking capabilities together into single chips in a bid to meet lofty performance requirements. Google’s Cloud TPUs closely integrate different types of computing hardware. This is the defining trend in the broader computing industry right now.

Not stopping at 7nm

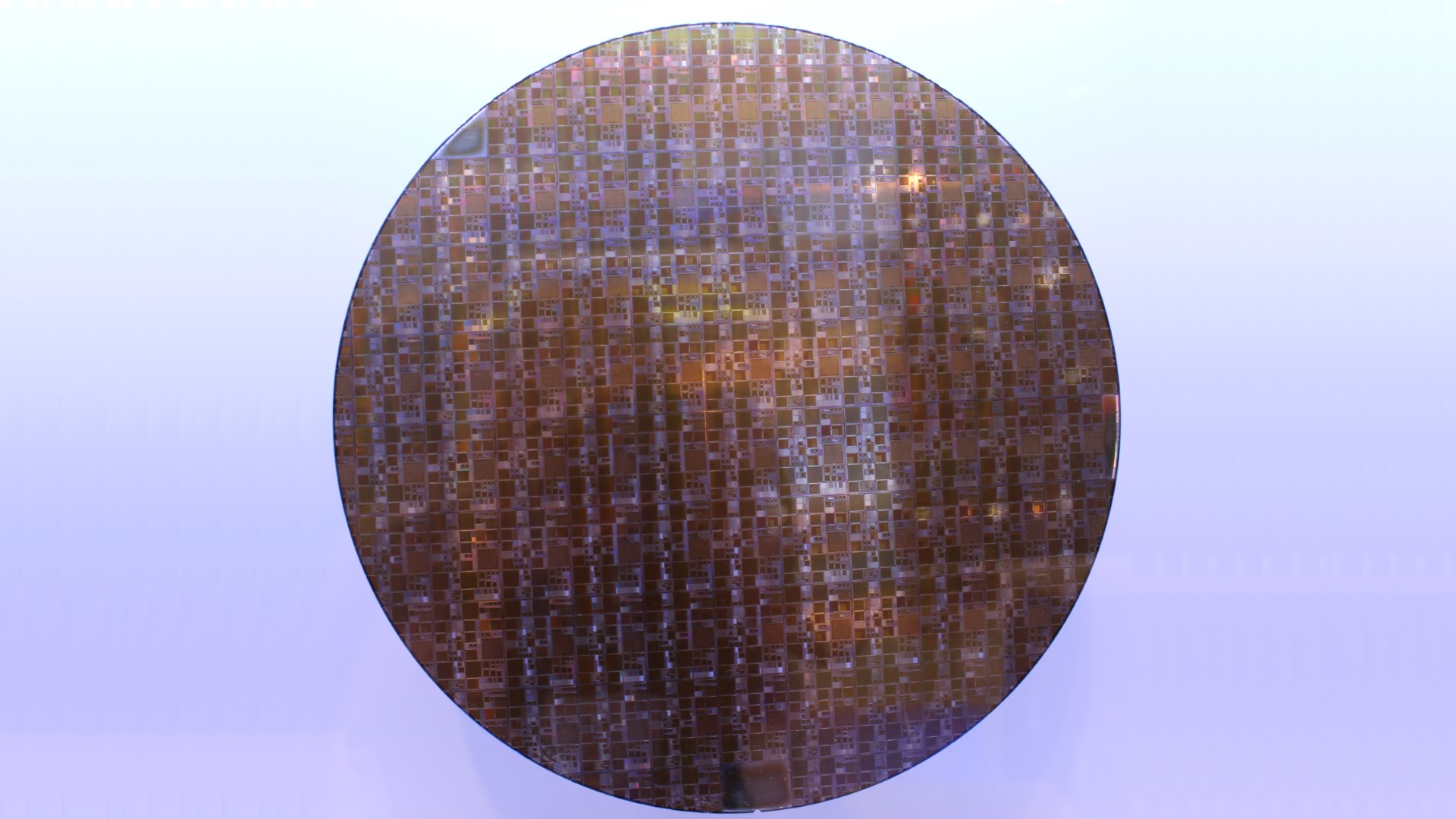

Mobile chipset designers and manufacturers have been keen to tout their latest achievements at 7nm, but this node marks a more important transition in the industry. The 7nm generation is where manufacturers begin phasing out the 193nm immersion lithography used over several previous generations in favor of new, higher-precision Extreme Ultraviolet Lithography (EUV).
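Why a shorter wavelength matters can be seen from the Rayleigh criterion, the standard rule of thumb for the smallest feature (critical dimension, CD) a lithography tool can print in a single exposure. The values plugged in below are assumptions for illustration: a process factor k1 of 0.3, a numerical aperture (NA) of 1.35 for 193nm water-immersion scanners, and 0.33 for current EUV scanners, which use 13.5nm light.

$$
\mathrm{CD} = k_1 \frac{\lambda}{\mathrm{NA}}
$$

$$
\mathrm{CD}_{\text{193i}} \approx 0.3 \times \frac{193\,\text{nm}}{1.35} \approx 42.9\,\text{nm},
\qquad
\mathrm{CD}_{\text{EUV}} \approx 0.3 \times \frac{13.5\,\text{nm}}{0.33} \approx 12.3\,\text{nm}
$$

In practice, 193nm tools have only reached 7nm-class features by splitting a layer across several exposures (multiple patterning), which adds cost and process steps; EUV’s much shorter wavelength lets manufacturers cut back on that. Node names like “7nm” are marketing labels rather than the size of any single printed feature, so these figures shouldn’t be compared against the node name directly.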

EUV is a key technology as manufacturers plan for even more power-efficient 5nm nodes in the near future. Industry leaders TSMC and Samsung both also plan to scale down even further, to 3nm, in the coming years. Just as important are new transistor structures that go beyond FinFET, like Gate-All-Around; new materials, from high-k metal gates to germanium and graphene; and 3D-stacked memory for tighter integration with processing components and improved efficiency.

According to TSMC’s Mark Liu, “EUV shows that lithography is no longer the limiting factor in scaling.”

7nm is a major achievement, but foundries are already looking to 5nm and beyond.

The driving forces for 7nm chips and beyond are silicon density for increasingly integrated and complex chips and, perhaps most importantly, energy efficiency. More energy-efficient chips keep portable devices running longer and help make the most powerful cloud computers cost-effective. With neural network training hours coming at a considerable cost, lower electricity bills could save companies millions a year and help make powerful computing affordable to the businesses and researchers that need it.

SEMI President and CEO Ajit Manocha expects the chip industry to reach sales of $500 billion in 2019 and $1 trillion by 2030. Much of this will come from the growth of neural network computing, as well as high-end consumer SoCs for phones, laptops, and more. Cutting-edge process nodes aren’t the only thing driving this trend, as plenty of products are happy on 14nm and even 28nm, but they are an increasingly significant factor, driven by the hunt for improved efficiency.
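Taken at face value, those two SEMI figures imply a compound annual growth rate (CAGR) of roughly 6.5% over the 11 years from 2019 to 2030. This is simple arithmetic on the quoted projection, not a figure SEMI itself states:

$$
\text{CAGR} = \left(\frac{\$1\,\text{trillion}}{\$500\,\text{billion}}\right)^{1/(2030-2019)} - 1 = 2^{1/11} - 1 \approx 6.5\%
$$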

I hope you’re not sick of AI yet

The term AI is certainly overused in the chip and product markets these days, but the consensus is that the most recent advances in neural networking and machine learning will keep the technology around this time. Smartphones have been leading the advance, with architecture support for INT16 and INT8 math operations and cutting-edge neural networking hardware like the NPU inside HUAWEI’s Kirin or Google’s Pixel Visual Core inside the Pixel 2.
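INT8 support matters because neural network weights and activations, normally 32-bit floats, can often be mapped onto 8-bit integers with little loss in accuracy, letting the hardware use cheaper integer multiply-accumulate units. The Python sketch below shows symmetric per-tensor linear quantization, one common generic scheme; the function names are hypothetical, and this is not a description of how HUAWEI’s NPU or the Pixel Visual Core specifically handle it.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization of floats to integers in [-127, 127].

    Returns the integer values and the scale needed to map them back to floats.
    """
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0  # avoid dividing by zero for all-zero input
    # Round to the nearest integer step; the clamp guards against float rounding at the edges.
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return q, scale


def dequantize(q, scale):
    """Map quantized integers back to approximate float values."""
    return [x * scale for x in q]


def int8_dot(qa, scale_a, qb, scale_b):
    """Dot product using integer multiply-accumulate, rescaled once at the end."""
    acc = sum(a * b for a, b in zip(qa, qb))  # pure integer arithmetic
    return acc * scale_a * scale_b


if __name__ == "__main__":
    weights = [0.5, -1.0, 0.25, 0.0]
    activations = [0.25, 0.5, -0.75, 1.0]
    qw, sw = quantize_int8(weights)
    qx, sx = quantize_int8(activations)
    exact = sum(w * x for w, x in zip(weights, activations))
    print("float:", exact, "int8:", int8_dot(qw, sw, qx, sx))
```

The key design point is in `int8_dot`: all the per-element work happens on small integers, and the two float scale factors are applied only once at the end, which is what lets dedicated integer hardware do most of the heavy lifting.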

We’ve only begun to scratch the surface of what neural network hardware and software can do. Enhanced speech detection, face recognition security, and scene-based camera effects are all neat features, but we’re already seeing signs of even smarter machine learning techniques, both in the cloud and in consumer devices.

HUAWEI’s GPU Turbo technology, for instance, can manage smartphone power delivery and performance more efficiently once trained for a specific app. NVIDIA’s Deep Learning Super Sampling support in its latest RTX series of graphics cards is another impressive example where machine learning can replace existing computationally expensive algorithms with a higher-performing alternative. The graphics giant’s AI Up-Res and InPainting image reproduction tools are similarly impressive, as is its interpolated Slow-Mo effect.

Machine learning is breaking out of image and voice recognition into even more advanced use cases. Consumer processors, and not just smartphone chips, will want to support machine learning inference to benefit from these emerging technologies, while dedicated training chips spur demand on the business side of the industry.

With hundreds of millions of smartphones shipping each year, it perhaps isn’t surprising to see competition and innovation driving mobile SoC designs forward so aggressively. Few would have predicted, though, that relatively low-power mobile chips, rather than heavy-duty desktop-class products, would be notching up so many silicon industry firsts.

It’s an odd situation compared to just over a decade ago, but smartphone SoCs now lead much of the silicon industry. They are a good place to look if you want to see what’s coming next.
