
Google Tensor vs Snapdragon 888 series: How the Pixel 6 chip shapes up

Google’s Pixel 6 series launched back in late 2021 as the first phones powered by a semi-custom Google SoC, dubbed Tensor. The chipset raises some big questions. Can it catch Apple? Did it really use the latest and greatest technology around at the time?

Google could have bought chipsets from long-time partner Qualcomm or even purchased an Exynos model from its friends at Samsung. But that wouldn’t have been nearly as much fun. Instead, the company worked with Samsung to develop its own chipset using a combination of off-the-shelf components and a little of its in-house machine learning (ML) silicon.

The Tensor SoC is a little different from other top-end Android chipsets that were available in 2021 and especially 2022’s processors. We already have plenty of information to dive into an on-paper comparison with Qualcomm’s 2021 chipset (and Samsung’s 2021 SoC too), as well as some benchmark info. How does the Google Tensor fare against the Snapdragon 888 series? Let’s take a look at how they stack up.


Google Tensor vs Snapdragon 888 series vs Exynos 2100

Google has already launched the second-generation Tensor G2 processor, used inside the Pixel 7 series. This chipset straddles the line between 2022 and 2023’s silicon. However, the first-generation Tensor is designed to compete with 2021’s Qualcomm Snapdragon 888 series and Samsung Exynos 2100 flagship chipsets. So we’ll use these as the basis for our comparison.

| | Google Tensor (2021) | Google Tensor G2 (2022) | Snapdragon 888 (2021) | Exynos 2100 (2021) |
|---|---|---|---|---|
| CPU | 2x Arm Cortex-X1 (2.80GHz); 2x Arm Cortex-A76 (2.25GHz); 4x Arm Cortex-A55 (1.80GHz) | 2x Arm Cortex-X1 (2.85GHz); 2x Arm Cortex-A78 (2.35GHz); 4x Arm Cortex-A55 (1.80GHz) | 1x Arm Cortex-X1 (2.84GHz, 3GHz for Snapdragon 888 Plus); 3x Arm Cortex-A78 (2.4GHz); 4x Arm Cortex-A55 (1.8GHz) | 1x Arm Cortex-X1 (2.90GHz); 3x Arm Cortex-A78 (2.8GHz); 4x Arm Cortex-A55 (2.2GHz) |
| GPU | Arm Mali-G78 MP20 | Arm Mali-G710 MP7 | Adreno 660 | Arm Mali-G78 MP14 |
| RAM | LPDDR5 | LPDDR5 | LPDDR5 | LPDDR5 |
| ML | Tensor Processing Unit | Next-gen Tensor Processing Unit | Hexagon 780 DSP | Triple NPU + DSP |
| Media Decode | H.264, H.265, VP9, AV1 | H.264, H.265, VP9, AV1 | H.264, H.265, VP9 | H.264, H.265, VP9, AV1 |
| Modem | 4G LTE; 5G sub-6GHz and mmWave | 4G LTE; 5G sub-6GHz and mmWave | 4G LTE; 5G sub-6GHz and mmWave; 7.5Gbps download; 3Gbps upload (integrated Snapdragon X60) | 4G LTE; 5G sub-6GHz and mmWave; 7.35Gbps download; 3.6Gbps upload (integrated Exynos 5123) |
| Process | 5nm | 5nm | 5nm | 5nm |

As we’d expect given the nature of their relationship, Google’s Tensor SoC leans heavily on Samsung’s technology found in its Exynos 2100 processor. The modem, for one, is believed to be borrowed from the Exynos 2100. Meanwhile, the two chipsets share the same Mali-G78 GPU, albeit with the Google SoC offering a 20-core version and the Exynos topping out at 14 cores. The similarities are said to extend to AV1 media decoding hardware support.

On paper, we’d expect better graphical performance than the Exynos 2100, but the comparison with the Snapdragon 888 series is a different story. Still, that will be a relief for those hoping for proper flagship-tier performance from the Pixel 6. Beyond graphics, it seems like the chip’s Tensor Processing Unit (TPU) will offer even more competitive machine learning and AI capabilities.

The Google Tensor SoC seems to be competitive across CPU, GPU, modem, and other technologies.

Google’s 2+2+4 CPU setup is an odd design choice. It’s worth exploring in more detail, which we’ll get to, but the key point is that two powerhouse Cortex-X1 CPUs should give the Google Tensor SoC more grunt for single-threaded tasks, while the older Cortex-A76 cores may make the chip a weaker multitasker. It’s an interesting combination that harks back to Samsung’s ill-fated Mongoose CPU setups. There were also questions about this design’s power and thermal efficiency, which Google has attempted to answer.

On paper, the Google Tensor processor and the Pixel 6 series look to be very competitive with the Exynos 2100 and Snapdragon 888 series found through some of 2021’s best smartphones.

Understanding the Google Tensor CPU design

Let’s jump into the big question on every tech enthusiast’s lips: why would Google pick 2018’s Arm Cortex-A76 CPU for a cutting-edge SoC? The answer lies in an area, power, and thermal compromise. Either that or Google and Samsung simply didn’t have access to newer cores when work on Tensor began.

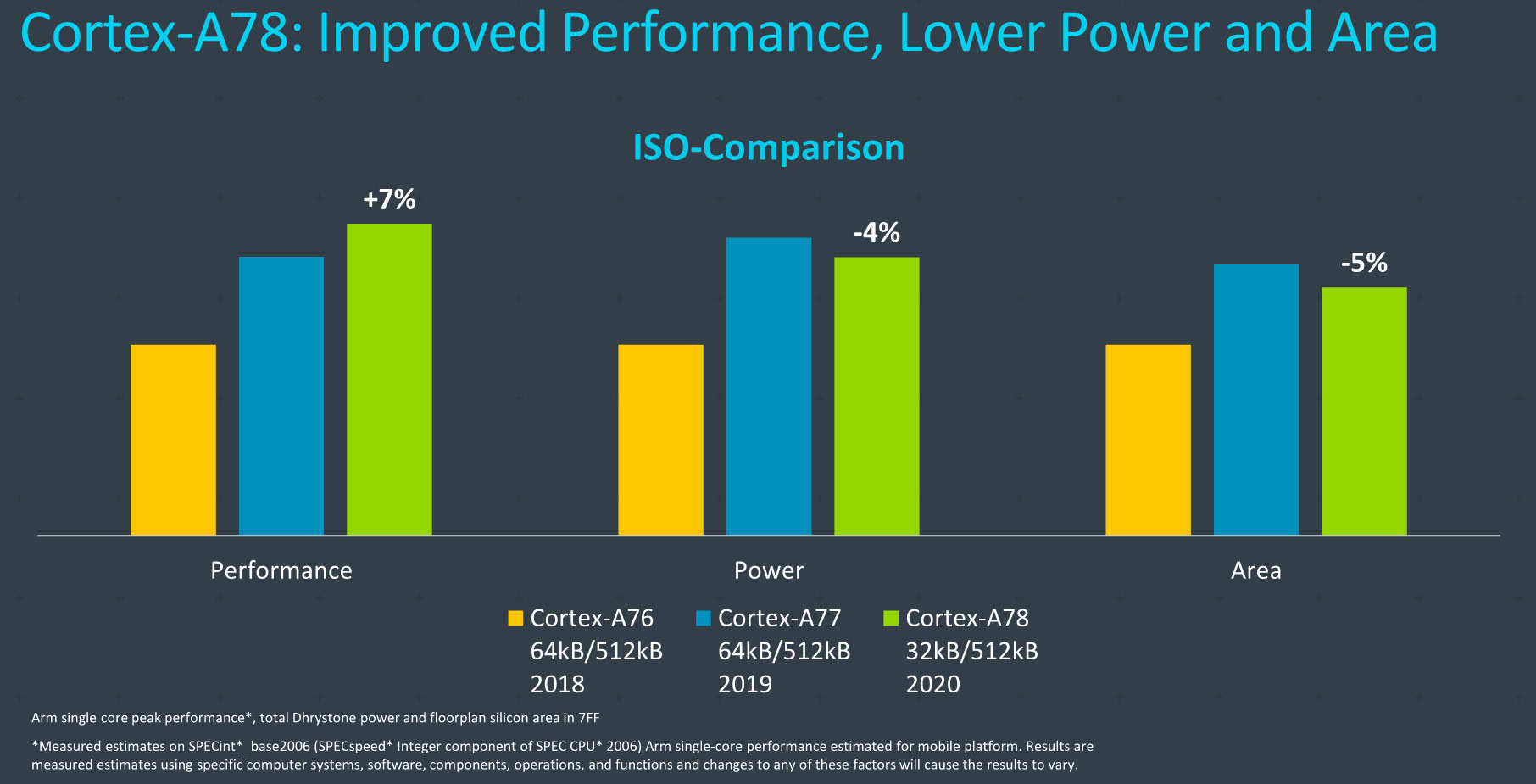

We dug up a slide (see above) from a previous Arm announcement that helps visualize the important arguments. Granted, the chart’s scale isn’t particularly accurate, but the takeaway is that the Cortex-A76 is both smaller and lower powered than the newer Cortex-A77 and A78 given the same clock speed and manufacturing process (ISO-Comparison). This example is on 7nm, but Samsung has been working with Arm on a 5nm Cortex-A76 for some time. If you want numbers, the Cortex-A77 is 17% larger than the A76, while the A78 is just 5% smaller than the A77. Similarly, Arm only managed to bring power consumption down by 4% between the A77 and A78, leaving the A76 as the smaller, lower power choice.

The trade-off is that the Cortex-A76 provides a lot less peak performance. Combing back through Arm’s numbers, the company managed a 20% micro-architectural gain from the A76 to the A77, and a further 7% on a like-for-like process with the move to the A78. As a result, multi-threaded tasks may run slower on the Pixel 6 than on its Snapdragon 888 rivals, although that of course depends a lot on the exact workload. With two Cortex-X1 cores for the heavy lifting, Google may feel confident that its chip has the right mix of peak power and efficiency.
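To put Arm’s generation-over-generation percentages on a common footing, here is a minimal Python sketch that compounds them into A78-versus-A76 ratios. It uses only the figures quoted above; the `compound` helper and the constant names are ours, purely for illustration, and the results inherit whatever caveats apply to Arm’s own like-for-like comparisons.

```python
# Generation-over-generation ratios taken from the Arm figures quoted in the text
# (same clock speed and manufacturing process).
A77_AREA_VS_A76 = 1.17  # A77 is 17% larger than the A76
A78_AREA_VS_A77 = 0.95  # A78 is 5% smaller than the A77
A77_PERF_VS_A76 = 1.20  # 20% micro-architectural gain, A76 -> A77
A78_PERF_VS_A77 = 1.07  # further 7% gain, A77 -> A78


def compound(*ratios: float) -> float:
    """Multiply successive generation-over-generation ratios together."""
    result = 1.0
    for ratio in ratios:
        result *= ratio
    return result


a78_area_vs_a76 = compound(A77_AREA_VS_A76, A78_AREA_VS_A77)
a78_perf_vs_a76 = compound(A77_PERF_VS_A76, A78_PERF_VS_A77)

print(f"A78 area vs A76: {a78_area_vs_a76:.2f}x")
print(f"A78 performance vs A76: {a78_perf_vs_a76:.2f}x")
```

By these numbers, the A78 works out roughly 11% larger than the A76 while being about 28% faster, which is the gap Google gave up on its two middle cores.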

This is the crucial point — the choice of the older Cortex-A76s is perhaps bound to Google’s desire for two high-performance Cortex-X1 CPU cores. There’s only so much area, power, and heat that can be expended on a mobile processor CPU design, and two Cortex-X1s push against these boundaries. But why would Google want two Cortex-X1 cores when Qualcomm and Samsung are happy and performing well with just one?

Well, Google Silicon vice-president and general manager Phil Carmack told Ars Technica that this arrangement was done with more efficient “medium” workloads in mind. Carmack cited the example of using the camera viewfinder.

“You might use the two X1s dialed down in frequency so they’re ultra-efficient, but they’re still at a workload that’s pretty heavy. A workload that you normally would have done with dual A76s, maxed out, is now barely tapping the gas with dual X1s,” the Google representative was quoted as saying. Carmack further asserted that one big core was great for single-threaded benchmarks, but that two big cores were the most efficient solution for high performance.
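Carmack’s argument can be illustrated with the textbook first-order model of dynamic power, P = C × V² × f. The Python sketch below is our own back-of-the-envelope illustration, not Google’s or Arm’s methodology: it assumes voltage scales linearly with frequency, ignores leakage, and uses hypothetical inputs for the big core’s per-clock speed-up and its switched capacitance relative to the smaller core.

```python
def dynamic_power(capacitance: float, frequency: float) -> float:
    """First-order dynamic power, P = C * V^2 * f, in normalised units."""
    voltage = frequency  # simplifying assumption: V scales linearly with f
    return capacitance * voltage ** 2 * frequency


def big_core_relative_power(per_clock_speedup: float, capacitance_ratio: float) -> float:
    """Power of a big core matching a small core's full-speed throughput,
    expressed relative to the small core running flat out."""
    small = dynamic_power(capacitance=1.0, frequency=1.0)
    # The big core does more work per clock, so it can run at a lower
    # frequency (and therefore lower voltage) for the same throughput.
    big = dynamic_power(capacitance=capacitance_ratio,
                        frequency=1.0 / per_clock_speedup)
    return big / small


# Hypothetical inputs chosen for illustration only, not measurements.
ratio = big_core_relative_power(per_clock_speedup=1.5, capacitance_ratio=2.0)
print(f"Big core power at matched throughput: {ratio:.2f}x the small core")
```

Under these assumptions, the bigger core finishes the same work for less power despite its larger size, because the frequency and voltage savings compound. Real silicon has voltage floors and leakage that this model leaves out, so treat it as an intuition aid rather than a prediction.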


Besides the raw single-threaded performance boost — the core is 23% faster than the A78 — the Cortex-X1 is an ML workhorse. Machine learning, as we know, is a big part of Google’s design goals for this semi-custom silicon. The Cortex-X1 provides 2x the machine learning number-crunching capabilities of the Cortex-A78 through the use of a larger cache and double the SIMD floating-point instruction bandwidth.

In other words, Google is trading down some general multi-core performance in exchange for two Cortex-X1s that augment its TPU ML capabilities, particularly in instances when it might not be worth spinning up the dedicated machine learning accelerator. The chipset is also believed to offer 8MB of system-level cache and 4MB of L3 cache, which should make a difference to performance as well.

Two powerhouse Cortex-X1 cores are a departure from Qualcomm's successful formula that comes with its own pros and cons.

Despite the use of Cortex-A76 cores, there’s still potentially a trade-off with power and heat. Testing suggests that a single Cortex-X1 core is quite power-hungry and can have trouble sustaining peak frequencies in today’s flagship phones. Some phones even avoid running tasks on the X1 to improve power consumption. Two cores onboard double the heat and power problem, so we should be cautious with suggestions that the Pixel 6 will blow past the competition simply because it has two powerhouse cores. Sustained performance and energy consumption will be key. Remember, Samsung’s Exynos chipsets powered by its heavy-hitting Mongoose cores suffered due to this very problem.

If you ask Google, extra responsiveness and more efficient medium workloads are the reason for adopting two Cortex-X1 cores. Clearly, the company is convinced it’s found the sweet spot on the performance/efficiency curve.

Google’s TPU differentiator

One of the few remaining unknowns about the Google Tensor SoC is its Tensor Processing Unit. We do know it’s primarily charged with running Google’s various machine learning tasks, from voice recognition to image processing, and even video decoding. This suggests a reasonably general-purpose inference and media component that’s hooked into the chip’s multimedia pipeline.


Qualcomm and Samsung have their own silicon parts dedicated to ML too, but what’s particularly interesting about the Snapdragon 888 is how diffuse these processing parts are. Qualcomm’s AI Engine is spread across its CPU, GPU, Hexagon DSP, Spectra ISP, and Sensing Hub. While this is good for efficiency, you won’t find a use case that runs all these components at once. So Qualcomm’s 26TOPS of system-wide AI performance isn’t used often, if ever. Instead, you’re more likely to see one or two components running at a time, such as the ISP and DSP for computer vision tasks.

Google states that its TPU and ML prowess will be the key differentiator.

Google’s TPU no doubt comprises various sub-blocks, particularly if it’s running video encoding and decoding too, but it seems as if the TPU will house the bulk of if not all the Pixel 6’s ML capabilities. If Google can leverage most of its TPU power all at once then it may well be able to leapfrog its competitors for some truly interesting use cases.

Speaking of use-cases, Google touts features like offline voice dictation, offline voice translation, face unblurring for photos, and 4K 60fps HDR video shooting using dedicated “HDR Net” hardware built into the Pixel 6’s chip.

Testing the Tensor chipset

Now that we’ve taken a look at how the Tensor compares to the Snapdragon 888 on paper, what do benchmarks tell us? Well, we ran several tests to get a better idea of where the Google chipset ranks, using Geekbench 5 for CPU testing, 3DMark Wild Life for the GPU, and our in-house Speed Test G for an overall picture.

You can check out our graphic below for a look at the results:

The Geekbench test and the CPU portion of Speed Test G show that the Tensor’s CPU is more in line with the Snapdragon 865 series than the Snapdragon 888 and Exynos 2100.

Google acknowledged at the time of the Pixel 6’s release that one big CPU core, as seen on SoCs like the Snapdragon 888 and Exynos 2100, was better for benchmarks. But the decision to use older CPU cores for the two medium cores had an effect on these benchmarks too, particularly in multi-core tests.

Meanwhile, the 3DMark test shows that the Google processor is handily ahead of the Snapdragon 888 and Exynos 2100. But the GPU leg of Speed Test G shows that Qualcomm and Samsung’s chipsets are ahead instead. So graphical superiority might come down to factors like the specific workload, app, or graphics API, as well as the ability to deliver sustained performance.

The Google Tensor trades blows with 2021's flagship silicon, but it understandably lags behind 2022 SoCs.

For what it’s worth, our reviewers thought the Pixel 6 phones delivered a smooth experience in everyday tasks and when playing games. But the benchmarks suggest that there’s still a gap of sorts to the Snapdragon 888 in some areas.

How does the Tensor fare against 2022’s flagship silicon though? Well, Geekbench CPU scores show that the Snapdragon 8 Gen 1 and Exynos 2200 have similar single-core and multi-core performance to the previous-generation SoCs. In other words, the new chips have a healthy lead over the Tensor when it comes to multi-core performance, but the gap narrows when looking at single-core speeds.

Switch to the 3DMark Wild Life benchmark and it’s clear that the Snapdragon 8 Gen 1’s Adreno GPU blitzes the Tensor’s Mali-G78 MP20 setup as well as Apple’s A15 Bionic. The Exynos 2200 also enjoys a healthy performance advantage over the Tensor in this benchmark, although the gap is nowhere near as large as the one between the Snapdragon 8 Gen 1 and Tensor, and the Exynos 2200 still trails Apple’s latest SoC.

What’s concerning is that our reviewers felt the Tensor-toting Pixel 6 series and Pixel 6a ran very hot. It’s unclear why this is the case, but we have seen several chipsets with a single Cortex-X CPU core running hot. So it wouldn’t be a surprise if Google’s decision to use two Cortex-X1 cores came with increased heating and issues with sustained performance.

Google Tensor vs Snapdragon 888: The verdict

With HUAWEI’s Kirin effectively out for the count, the Google Tensor SoC has thrown some much-needed fresh blood into the mobile chipset colosseum. On paper, the Google Tensor looks every bit as compelling as 2021’s Snapdragon 888 and Exynos 2100.

As we’ve expected all along though, the Google Tensor doesn’t quite leapfrog these processors, trading blows with the Snapdragon 888 in benchmarks and occasionally being more in line with the Snapdragon 865 range. Needless to say, it falls way behind 2022’s Snapdragon 8 Gen 1 and Exynos 2200 chipsets, particularly when it comes to GPU performance. However, Google is clearly pursuing its own novel approach to the mobile processing problem.

With two high-performance CPU cores and its in-house TPU machine learning solution, Google’s SoC is a little different from its rivals. That said, the real game-changer could be Google offering five years of security updates by moving to its own silicon.

What do you make of the Google Tensor vs Snapdragon 888 and Exynos 2100? Is the Pixel 6’s processor a true flagship contender?
