
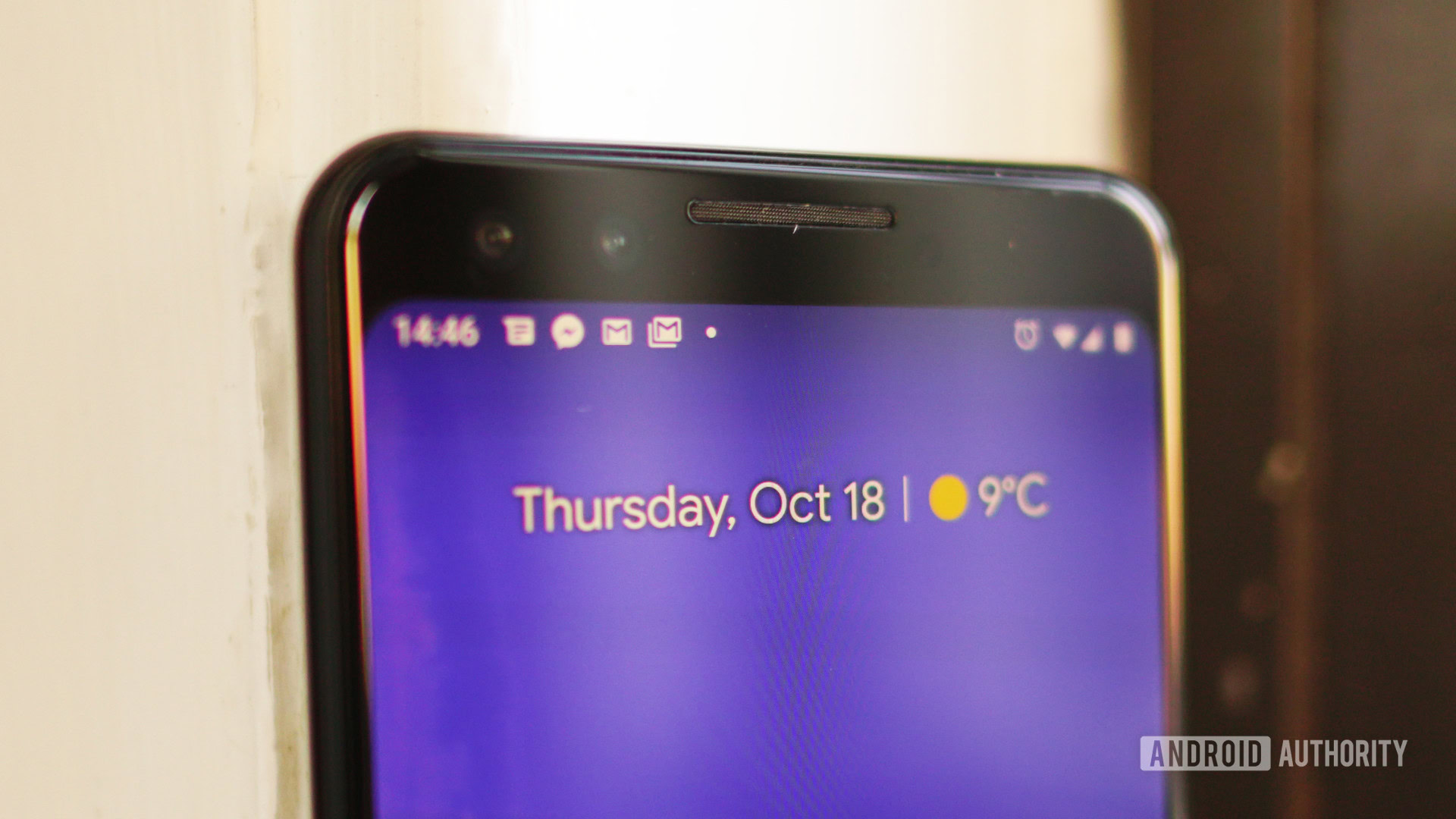

Here's how Google made portrait mode on the Pixel 3 even better

- Google has blogged about its recent improvements in AI and photography — specifically regarding portrait mode on the Pixel 3.

- The post discusses how Google has improved the way its neural networks measure depth.

- The result is an improved bokeh effect in its portrait mode shots.

On its AI blog, Google has detailed one of its major photography accomplishments on the Pixel 3. In the post, published yesterday, the company explains how it improved portrait mode between the Pixel 2 and the Pixel 3.

Portrait mode is a popular smartphone photography mode that blurs the background of a scene while maintaining focus on the foreground subject (what’s sometimes called the bokeh effect). The Pixel 3 and the Google Camera app take advantage of advances in neural networks, machine learning, and GPU hardware to help make this effect even better.

In portrait mode on the Pixel 2, the camera would capture two versions of a scene at slightly different angles. Between these two images, the foreground subject (a person, in most portraits) would appear to shift less than the background, an effect known as parallax. This discrepancy was used to estimate the depth of each part of the image, and thus which areas to blur.
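To make the parallax idea concrete, here is a minimal Python sketch. It is not Google's implementation: it assumes two toy one-dimensional scanlines, and the helper `best_shift` and all pixel values are hypothetical. It finds the horizontal shift that best aligns a patch between the two views, and treats patches that shift more as background.

```python
def best_shift(left_row, right_row, start, width, max_shift):
    """Return the horizontal shift (in pixels) that best aligns a patch of
    left_row with right_row, scored by the sum of absolute differences."""
    patch = left_row[start:start + width]
    best, best_cost = 0, float("inf")
    for shift in range(max_shift + 1):
        # Stop once the shifted patch would run past the end of the row.
        if start + shift + width > len(right_row):
            break
        candidate = right_row[start + shift:start + shift + width]
        cost = sum(abs(a - b) for a, b in zip(patch, candidate))
        if cost < best_cost:
            best, best_cost = shift, cost
    return best


# Toy scanlines: the "subject" patch (9, 8, 9) stays put between the views,
# while the "background" patch (3, 5, 3) moves two pixels to the right.
left = [0, 9, 8, 9, 0, 3, 5, 3, 0, 0, 0]
right = [0, 9, 8, 9, 0, 0, 0, 3, 5, 3, 0]

subject_shift = best_shift(left, right, start=1, width=3, max_shift=3)
background_shift = best_shift(left, right, start=5, width=3, max_shift=3)

# The patch with the larger shift is the one a portrait mode would blur.
blur_second_patch = background_shift > subject_shift
```

With only two nearby viewpoints, the shifts are tiny and easy to mismatch, which is one way to picture why this cue alone can fail.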

This provided strong results on the Pixel 2, but it wasn’t perfect. The two versions of the scene provided only a very small amount of depth information, so problems could occur. Most commonly, the Pixel 2 (and many phones like it) would fail to accurately separate foreground from background.

With the Google Pixel 3’s camera, Google added more depth cues to make this blur effect more accurate. As well as parallax, Google used sharpness as a depth indicator (objects far from the in-focus plane appear less sharp than those close to it) and real-world object identification. For example, the camera could recognize a person’s face in a scene and work out how near or far it was based on the number of pixels it occupies relative to objects around it. Clever.
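The two extra cues can be illustrated with simple hand-written formulas, although the Pixel 3 learns them inside a neural network rather than computing them this way. The sketch below is only an illustration under stated assumptions: `distance_from_face_width` uses a basic pinhole camera model with a hypothetical focal length (in pixels) and a hypothetical average face width, and `sharpness` scores a row of pixels by the squared differences between neighbors.

```python
def distance_from_face_width(face_width_px, focal_length_px=3000.0,
                             real_face_width_m=0.15):
    """Pinhole-model estimate of subject distance in meters.
    The default focal length and face width are made-up illustrative values."""
    if face_width_px <= 0:
        raise ValueError("face width must be positive")
    return focal_length_px * real_face_width_m / face_width_px


def sharpness(row):
    """Sum of squared differences between neighboring pixels.
    Higher values mean more fine detail, so the region is more in focus."""
    return sum((b - a) ** 2 for a, b in zip(row, row[1:]))


# A face spanning 900 pixels is estimated to be closer than one spanning 300.
near = distance_from_face_width(900)
far = distance_from_face_width(300)

# Hypothetical pixel rows: one with crisp edges, one smoothed by defocus.
in_focus = [0, 10, 0, 10, 0]
defocused = [3, 5, 4, 5, 3]
is_sharper = sharpness(in_focus) > sharpness(defocused)
```

A face that fills fewer pixels maps to a larger estimated distance, and the smoother row scores lower on sharpness.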

Google then trained its neural network on these additional cues to give it a better understanding (or rather, estimation) of depth in an image.

What does it all mean?

The result is better-looking portrait mode shots on the Pixel 3 than on previous Pixel cameras (and ostensibly those of many other Android phones), thanks to more accurate background blurring. And, yes, this should mean less hair being lost to background blur.

There’s also an interesting implication here for chips. A lot of processing power is required to create these photographs once they’re snapped (they’re based on full-resolution, multi-megapixel PDAF images); the Pixel 3 handles this pretty well thanks to its combination of TensorFlow Lite and the GPU.

In the future, though, better processing efficiency and dedicated neural chips will widen the possibilities not only for how quickly these shots will be delivered, but for what enhancements developers even choose to integrate.

To find out more about the Pixel 3 camera, hit the link, and give us your thoughts on it in the comments.
