Android 11 Developer Preview (Updated for DP3): What developers need to know

We live in strange times and it seems like much of the world has come to a standstill. Not Google though! The first Developer Preview for Android 11 dropped out of nowhere, and now we’re already at Developer Preview 3!

Make no mistake though: like its predecessors, this is a very early build and we will likely see many new features and UI tweaks before the final version, just as Android 10 changed a lot between beta 1 and the final release.

We also have no idea when Android 11 will exit beta, though Google has given us a target for “Platform Stability” (more on this in a moment). This is planned for next June and Google has even provided a development timeline this time around! So that gives us some clue, at least.

Overview of Android 11 Developer Preview

Google stresses that these Developer Previews are not aimed at consumers, and even developers might find the changes a little barebones. A detailed breakdown follows below, but much of it will matter only to select developers (a few features will be useful for call screening apps, for example).

The key takeaways that should be on every developer’s radar are:

- Bubbles are still coming

- Dedicated conversations section in the notification shade

- Copy and paste images between inline replies

- Dynamic meteredness API and bandwidth estimator API offer more information about 5G connections

- Scoped storage mandatory for apps targeting Android 11

- BiometricPrompt now supports authenticator types and levels of granularity

- “Breaking” changes in Android 11 have been made toggleable for easier testing and debugging

- ImageDecoder API now supports HEIF files

- Apps can send camera capture requests enabling bokeh mode

- Low-latency video-decoding

- DP 2 brings a 5G state API so you can check whether a user is connected to a 5G network

- You can now also get information about the location of the hinge on foldable devices

- In DP 3, ADB incremental lets you install large APKs up to 10x faster

- New wireless debugging with no cable necessary for setup

Even these features are somewhat niche and only likely to apply to a select few developers, for now at least.

Still, the sooner we can start playing around with new APIs and preparing for new rules and restrictions, the less of a headache we’ll have in the long run. So thanks Google!

With that in mind, you’ll find a more detailed breakdown of the Android 11 Developer Preview below, updated for DP3!

Note: This post will be updated regularly as Google rolls out new betas.

Detailed changes

Android 11’s focus (at the moment) appears to be preparing for forthcoming infrastructure, software innovations, and hardware trends. That means preparing for 5G, foldable devices, and machine learning. And like Android 10, there will also be an increased focus on privacy and security.

That latter point means there are more new features designed to help users control app behavior and restrict access to sensitive data. It’s all good stuff, but for devs it can mean reworking file systems and permissions.

5G

Android 11 brings updates to the current connectivity APIs. The bandwidth estimator API, for example, can now check downstream/upstream bandwidth without polling the network, which could be useful for managing downloads and updating progress bars. The dynamic meteredness API, meanwhile, will let developers check whether a connection is unmetered. This means we can offer higher resolution streaming where appropriate, while staying mindful of users’ data bills on metered connections.
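As a rough sketch of how these two signals might feed a streaming decision, the plain-Java helper below takes the metered state and a downstream bandwidth estimate as ordinary parameters. On a device, those values would typically be read from `NetworkCapabilities` (for example via `hasCapability(NET_CAPABILITY_NOT_METERED)` and `getLinkDownstreamBandwidthKbps()`). The class name, quality tiers, and thresholds are illustrative assumptions, not anything Google prescribes.

```java
final class StreamQualityPicker {
    /** Hypothetical quality tiers; a real app would define its own. */
    enum Quality { LOW, MEDIUM, HIGH }

    // Assumed thresholds in kbps, chosen only for illustration.
    static final int MEDIUM_MIN_KBPS = 2_500;
    static final int HIGH_MIN_KBPS = 15_000;

    /**
     * Picks a tier from the two inputs the article describes:
     * whether the connection is unmetered, and the estimated
     * downstream bandwidth in kbps.
     */
    static Quality pick(boolean unmetered, int downstreamKbps) {
        // Too slow for anything better, regardless of billing.
        if (downstreamKbps < MEDIUM_MIN_KBPS) {
            return Quality.LOW;
        }
        // On a metered link, cap at MEDIUM to limit data use.
        if (!unmetered) {
            return Quality.MEDIUM;
        }
        // Unmetered: go HIGH only if the estimate supports it.
        return downstreamKbps >= HIGH_MIN_KBPS ? Quality.HIGH : Quality.MEDIUM;
    }
}
```

Keeping the decision in a pure function like this means it can be unit tested without a device, with the Android-specific lookups kept at the call site.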

As of developer preview 2, we are now also getting a 5G state API, to let us know whether a user is on a 5G network or not.

Screen Types

One of the most useful updates from a UI perspective is a new API to be used in conjunction with the existing display cutout APIs. It is designed to support waterfall screen edges (as seen on some Samsung devices) by exposing them as insets, so apps can handle interactions near the edge and prevent accidental swipes and taps. Seeing as the new S20 devices largely do away with the waterfall effect, this feature may be a case of too little too late, though it’s worth noting that devices like the HUAWEI Mate X include a curved edge by necessity.

As of developer preview 2, there’s now also a new API for identifying where the hinge is located. This is useful for taking advantage of specific hardware, and avoiding jank!

Notifications

Bubbles never quite made the leap to Android 10. They’re available in this developer preview, however, and will allow users to interact with messaging apps via floating chat heads (à la Facebook Messenger). Devs can play around with this by using the Bubbles API.

The notification shade now has a dedicated “conversations section,” and inline replies now support copying and pasting images from the clipboard. In this developer preview, image copy is only supported in Chrome, while image paste is only supported through the Gboard clipboard.

Neural Networks API 1.3

The Neural Networks API allows computationally intensive ML operations to be run directly on Android devices. The latest update will add several new operations and controls: expanded quantization support, a memory domain API, and a quality of service API. For those that want to find out more, Google handily supplied some NDK sample code.

Three more updates for the Neural Networks API were introduced in the second preview. A hard-swish op is an efficient function for faster training and higher accuracy. Control ops meanwhile support more advanced machine learning models. And asynchronous command queue APIs will help to minimize overhead.

Privacy

Privacy is the big one, and Google is once again placing a lot of focus on this area.

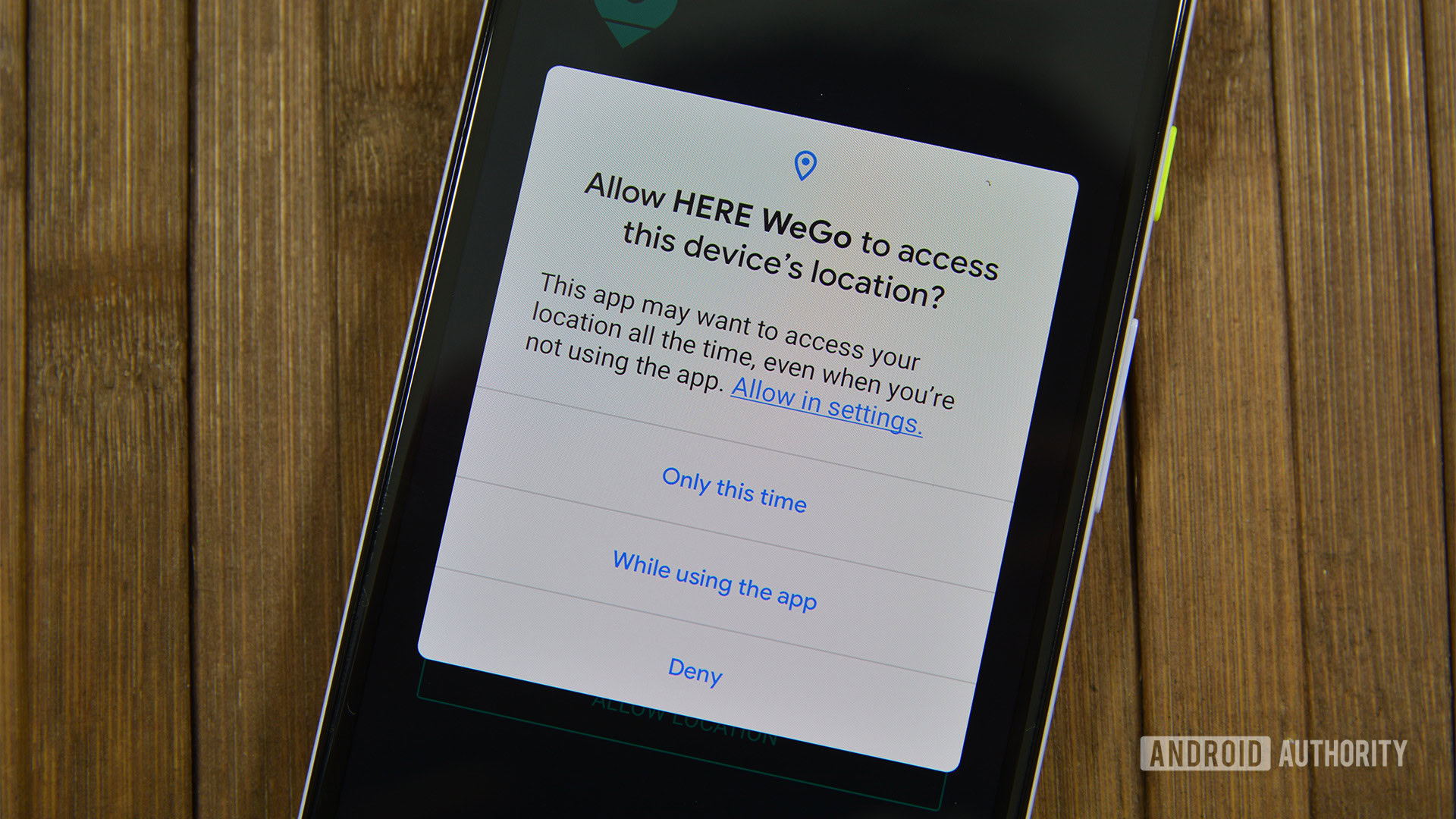

One big update for developers is the one-time permission, which will allow users to accept a permission a single time only. This will require a few changes to the way you currently handle permissions, and more information is offered here.

Scoped storage has received a few updates, including opt-in raw file path access for media, batch edits for MediaStore, and updates to DocumentsUI. A more complete list can be found here. Scoped storage will be mandatory for apps targeting Android 11. Remember: users will be able to control access to shared files in the Photos, Videos, and Audio folders using new runtime permissions, and access to the Downloads folder will only be available via the system’s file picker. More changes were introduced with DP2, including the option to migrate files from the legacy model to the new system.

Security

The BiometricPrompt API will now support three authenticator types with different ratings: strong, weak, and device credential.

Google has increased the use of compiler-based sanitizers in security-critical components. This should result in a more secure Android 11, but it may also surface reproducible bugs and crashes in apps, so these should be tested for. Google now offers a system image with HWASan to help devs find and fix memory errors. A BlobStoreManager will make it easier for apps to safely share data blobs.

Android 11 will also offer support for the secure storage and retrieval of verifiable ID documents such as driving licenses. Google says it will provide more details on this feature soon, but it could mean we’ll really be able to leave our wallets at home before long!

As of DP2, apps that want to access the camera or microphone from a foreground service will need to declare the matching foregroundServiceType.

The latest update has also introduced new call screening features. Apps that rely on call screening can take advantage of new APIs, including customizable post-call screens.

Google has also added 12 more updateable modules for Android, especially relating to privacy controls. The hope is that more OEMs will push these important updates out to users, resulting in greater security and consistency across the Android ecosystem. So don’t ignore the changes!

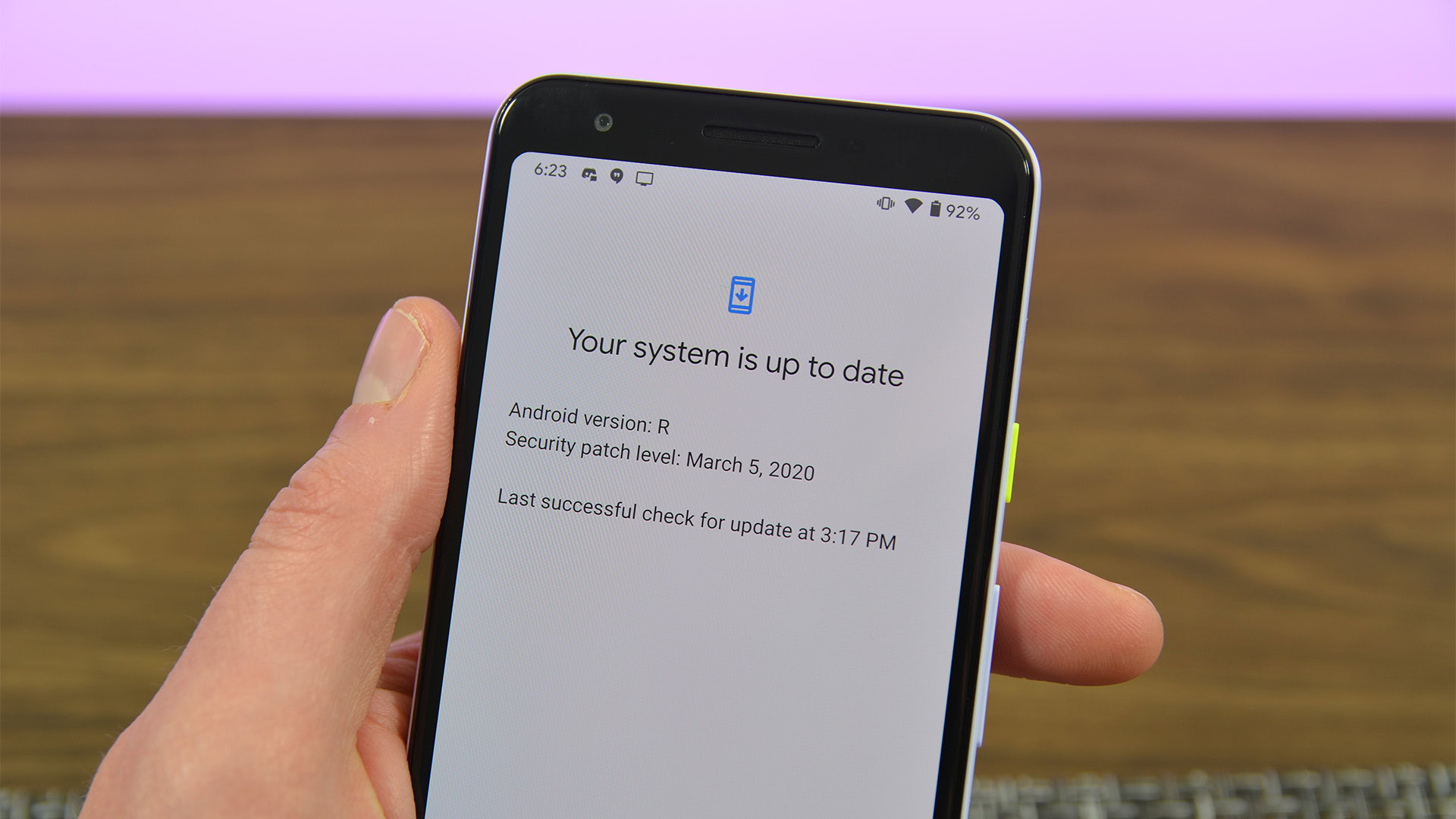

Testing, debugging, and compatibility

Reportedly, developers told Google last year that it was hard preparing for Android 10 without a concrete deadline for final changes. No duh! To minimize this frustration with Android 11, Google has committed to “platform stability” by early next June. This update will include the final SDK and NDK APIs, along with any changes to internal APIs and system behavior.

To help smooth the transition from Android 10 to 11, Google has ensured most potentially app-breaking updates are toggleable. This way, devs can identify which new updates are causing compatibility issues, then turn those features off while they work on a fix. This will hopefully make it quicker to get your apps onto new devices, as you won’t need to constantly toy around with targetSdkVersion or recompile.
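The workflow described here amounts to switching off one change at a time and re-running your checks. The sketch below models that loop in plain Java purely to make the idea concrete. The change names and the `appWorks` predicate are hypothetical stand-ins, and on a real device the toggling is done through the platform’s own tooling rather than a class like this one.

```java
import java.util.ArrayList;
import java.util.HashSet;
import java.util.List;
import java.util.Set;
import java.util.function.Predicate;

final class CompatToggleAudit {
    /**
     * Disables each change in turn, leaving all others enabled, and
     * records the changes whose removal makes the app's checks pass.
     *
     * @param allChanges hypothetical identifiers for toggleable changes
     * @param appWorks   stand-in for "run the test suite with exactly
     *                   this set of changes enabled"
     */
    static List<String> findBreakingChanges(List<String> allChanges,
                                            Predicate<Set<String>> appWorks) {
        List<String> culprits = new ArrayList<>();
        Set<String> everything = new HashSet<>(allChanges);

        // If the app already works with every change on, nothing to do.
        if (appWorks.test(everything)) {
            return culprits;
        }

        for (String change : allChanges) {
            Set<String> enabled = new HashSet<>(everything);
            enabled.remove(change); // toggle just this one off
            if (appWorks.test(enabled)) {
                culprits.add(change);
            }
        }
        return culprits;
    }
}
```

Note that this one-at-a-time pass only pinpoints a change that breaks the app on its own. If two changes each cause failures independently, the list comes back empty and you would need to disable them in combination.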

Google has also updated the lists of restricted non-SDK interfaces, and provided a public API for loading resources and assets dynamically at runtime.

This area is where DP 3 has brought the most action. ADB Incremental will allow installations of large APKs up to 10x more quickly over ADB. New wireless debugging removes the need for a cable during setup. We also get GWP-ASan heap analysis to help developers find memory safety issues. And an update to the exit reasons API will help devs identify why an app was closed.

Connectivity in Android 11 Developer Preview

If you own a call-screening app, you will now be able to retrieve the STIR/SHAKEN verification status of incoming calls, and customize system-provided post call screens that allow users to easily mark calls as spam and take other actions.

The Wi-Fi suggestions API has new features for Wi-Fi management apps and other tools. For instance, devs can now force disconnections by removing network suggestions, and gain more detailed information about connection quality.

Passpoint enhancements will enforce the expiration of Passpoint profiles and notify users about it. The Wi-Fi suggestions API now includes the option to manage Passpoint networks.

Camera

ImageDecoder API now supports decoding and rendering image sequence animations from HEIF files, thus allowing the use of high quality assets with minimal impact on network data/APK sizes. Using decodeDrawable on a HEIF source will let devs show the highly efficient HEIF image sequences in apps, just like GIFs. Where the source contains an image sequence, an AnimatedImageDrawable will be returned.

The Native Image Decoder API for NDK will support encoding and decoding image files from native code for graphics and post-processing. This removes the need for external libraries, keeping APK sizes down.

New APIs also allow developers to mute vibrations and notifications during active recording sessions. Metadata tags will now allow bokeh modes for camera capture requests on compatible devices.

Camera support is now available in the Android emulator for both back and front shooters.

Media Streaming

Low-latency video decoding in MediaCodec returns the first frame of a stream as soon as it is ready, a critical feature for services like Google’s own Stadia. New API features allow apps to check and configure low-latency playback for specific codecs.

HDMI low-latency mode

Time to get testing!

Some additional features and upgrades will prove useful for users, but may not have a huge impact on developers. For example, dark theme can now be set to change automatically based on time of day. And baked-in screen recording is once again meant to be making its way into our pockets, which could be useful for bug testing and marketing.

As usual, developers can try this preview by flashing the device system image onto a compatible device, or by installing it through the Android Emulator in Android Studio (Canary channel). The latter option also includes experimental support for ARM 32-bit and 64-bit binary app code running on 64-bit x86 Android Emulator system images.

So what do you make of all this? Do any of these features benefit your apps? What else would you like to see in future betas?
