
New Samsung Galaxy S7 image sensor explained

Samsung has long been a notable player in the mobile image sensor game and the company is taking a new approach to photography with the introduction of its Galaxy S7 smartphone range. The company is throwing out its high pixel count 16 megapixel sensor from last year’s flagships in favour of a lower resolution 12 megapixel sensor that features larger individual pixels. So let’s explore why Samsung is making this change.

Why use larger pixels?

We are regularly told, perhaps wrongly, that smartphone image sensors are catching up with the features and quality offered by much more expensive pieces of equipment. However, the fact is that the compact size of smartphone camera components forces designers to make compromises. Often we have seen engineers increase sensor resolutions in an attempt to hide noise and imperfections, but this comes at the expense of colour quality and low-light performance. Samsung looks to be tackling this particular trade-off head-on, by reducing the megapixel count and opting for larger pixel sizes.

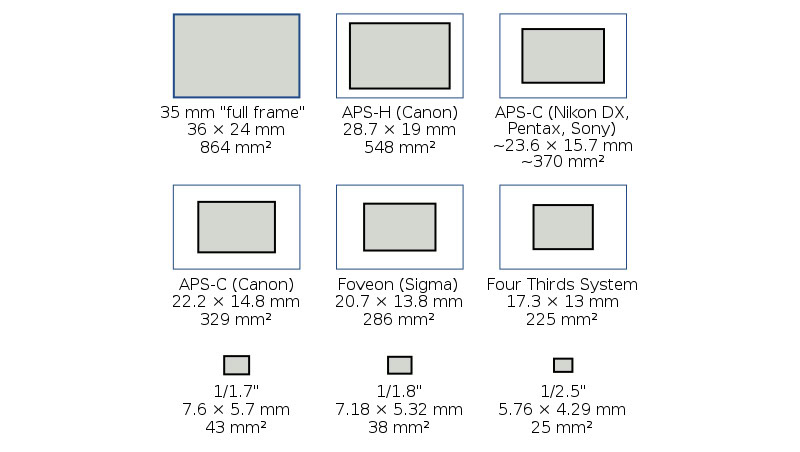

As photography enthusiasts are probably aware, it isn’t the megapixel count that matters so much for capturing high quality imagery. Extra pixels are handy for cropping down images at a later date, and it might interest you to know that just 6 megapixels is enough data for a detailed A4 sized picture print out. Instead, it’s the size of the actual image sensor and the ability of the photosites (or sensor pixels) to capture enough light that matter most to image quality.
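The A4 figure can be checked with some quick arithmetic. The sketch below is only an illustration: the function names are made up for this example, and it assumes the image has the same aspect ratio as the paper, which real 4:3 or 16:9 photos do not quite match.

```python
import math

# A4 paper is 210 mm x 297 mm; convert to inches (25.4 mm per inch).
A4_WIDTH_IN = 210 / 25.4
A4_HEIGHT_IN = 297 / 25.4


def megapixels_for_print(ppi, width_in=A4_WIDTH_IN, height_in=A4_HEIGHT_IN):
    """Megapixels needed to fill a print at the given pixels per inch."""
    return (width_in * ppi) * (height_in * ppi) / 1e6


def ppi_for_megapixels(megapixels, width_in=A4_WIDTH_IN, height_in=A4_HEIGHT_IN):
    """Print density (pixels per inch) that a given pixel count yields.

    Assumes the image is cropped to the paper's aspect ratio.
    """
    return math.sqrt(megapixels * 1e6 / (width_in * height_in))


for mp in (6, 12, 16):
    print(f"{mp} MP on A4: about {ppi_for_megapixels(mp):.0f} ppi")

print(f"300 ppi on A4 needs about {megapixels_for_print(300):.1f} MP")
```

Under these assumptions, 6 megapixels works out to roughly 250 pixels per inch on A4, a little below the 300 ppi often quoted for high quality prints but still detailed at normal viewing distances.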

Simply put, larger image sensor sizes allow for bigger photosites, which means more light per pixel. Typically, this results in a superior dynamic range, less noise, and better low light performance than smaller image sensors with an overly high pixel count. Of course, compact smartphone form factors mean that we’ll never have quite enough space to match DSLR sensor sizes, so compromises have to be made to find the right balance between noise, resolution and low light performance. Rather than pursuing additional resolution, Samsung is looking to increase image quality by maximising the space available for each photosite.
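To get a rough feel for why more light per pixel means less noise, consider photon shot noise, which follows Poisson statistics: a pixel that collects N photons has a signal-to-noise ratio of about the square root of N. The sketch below is a simplified model rather than a description of any real sensor. It ignores read noise, dark current, microlenses and fill factor, and the photon flux value is a hypothetical number chosen purely for illustration.

```python
import math


def photons_collected(pixel_pitch_um, photons_per_um2):
    """Photons gathered by a square pixel, assuming the whole area is light-sensitive."""
    return pixel_pitch_um ** 2 * photons_per_um2


def shot_noise_snr(photons):
    """Shot-noise-limited signal-to-noise ratio: N / sqrt(N) = sqrt(N)."""
    return math.sqrt(photons)


# Hypothetical illumination: 100 photons per square micrometre per exposure.
flux = 100.0

for pitch in (1.0, 1.4, 2.0):
    n = photons_collected(pitch, flux)
    print(f"{pitch:.1f} um pixel: {n:.0f} photons, SNR about {shot_noise_snr(n):.1f}")
```

In this model the signal-to-noise ratio scales directly with pixel pitch, which is why even a modest increase in photosite size is noticeable in dim scenes.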

Of course, CMOS image sensor design is a little more complicated than just that. Backplane electronics and isolation between pixels can have a substantial impact on attributes such as noise due to crosstalk between pixels. The lens placed on top of the sensor and the image signal processor used to interpret the data are equally important too. Unfortunately, Samsung hasn’t spilt enough beans for us to piece together everything that is going on inside its latest smartphone camera, but here’s what we do know.

Samsung’s sensor specs

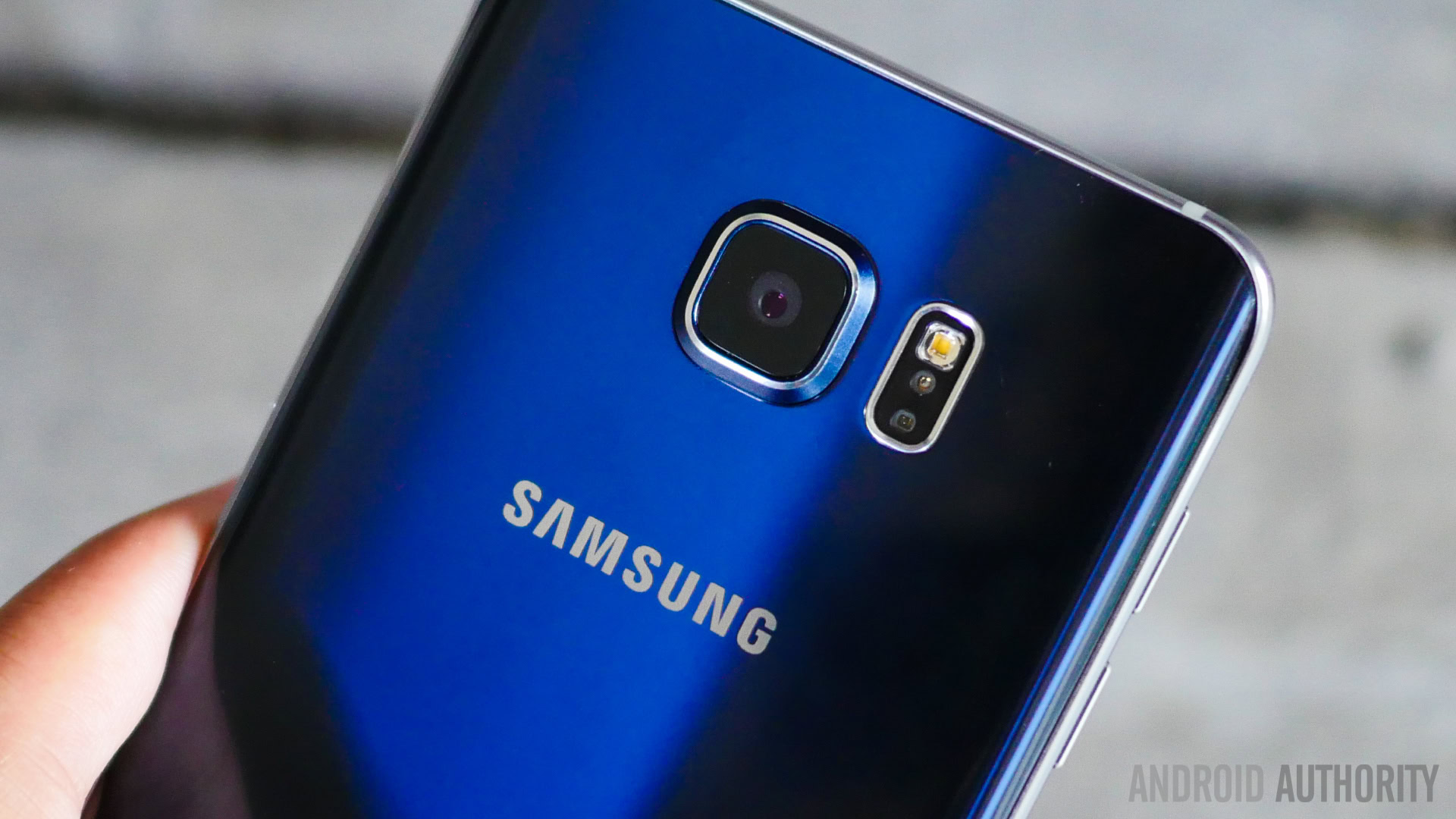

Samsung has increased its pixel size from 1.12µm in the Galaxy S6’s sensor up to 1.4µm in the Galaxy S7, allowing for additional light capture in each photosite. This marks a 56 percent increase in pixel area compared with the Galaxy S6.

This isn’t quite as large as the 2.0µm sizes found inside HTC’s Ultrapixel technology and is still slightly smaller than the 1.55µm photosites found inside the Nexus 6P’s sensor, which was a consistently excellent performer in our own testing. However, Samsung has also greatly increased the size of the opening in the accompanying lens so that additional light can make its way to the sensor. The Samsung Galaxy S7’s lens has an aperture of F/1.7, up from the F/1.9 aperture in the Galaxy S6’s 16 megapixel camera, offering up to 25 percent more brightness.

Combined, Samsung states that this allows the camera in the Galaxy S7 and S7 Edge to capture 95 percent more light compared with its predecessor, which should greatly improve low light performance and help to reduce image noise, a common problem with small smartphone sensors.
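These percentages can be reproduced with a couple of lines of arithmetic. The sketch below assumes a 1.12µm pixel pitch for the Galaxy S6, which is the figure consistent with the 56 percent claim, and treats light per pixel as proportional to pixel area and to the inverse square of the f-number. The function names are illustrative only.

```python
def pixel_area_gain(old_pitch_um, new_pitch_um):
    """Ratio of light-collecting area between two square pixels."""
    return (new_pitch_um / old_pitch_um) ** 2


def aperture_gain(old_f_number, new_f_number):
    """Ratio of light admitted by the lens; brightness scales with 1 / f-number squared."""
    return (old_f_number / new_f_number) ** 2


# Assumed Galaxy S6 pitch of 1.12 um versus 1.4 um in the Galaxy S7.
area = pixel_area_gain(1.12, 1.4)
# F/1.9 on the Galaxy S6 versus F/1.7 on the Galaxy S7.
aperture = aperture_gain(1.9, 1.7)
combined = area * aperture

print(f"Pixel area: +{(area - 1) * 100:.0f}%")
print(f"Aperture:   +{(aperture - 1) * 100:.0f}%")
print(f"Combined:   +{(combined - 1) * 100:.0f}%")
```

The two gains multiply rather than add, which is how a 56 percent larger pixel and a 25 percent brighter lens arrive at roughly 95 percent more light per pixel.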

The future of fast focusing

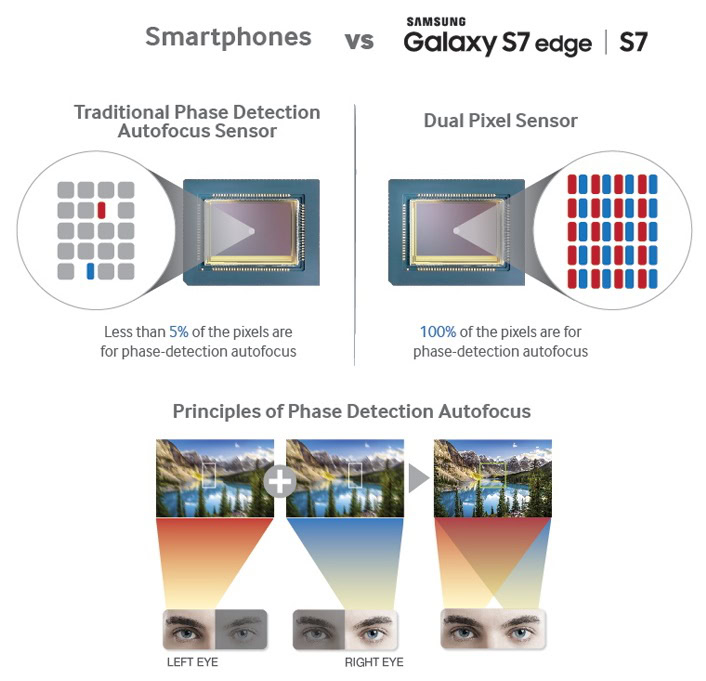

Samsung’s changes to its new image sensor don’t stop at light capture. The company is also the first smartphone maker to implement dual-pixel on-chip phase detection across every single pixel in its sensor.

This phase detection autofocus technology has been used in some DSLR camera sensors and works by comparing the phase of light received at two separate pixel locations. The difference between the two can then be used to focus on a specific object or part of the frame, not unlike the way the human eye perceives depth.
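The idea can be sketched with a toy example. When a subject is out of focus, the views seen from the two locations are shifted relative to one another, and the size and direction of that shift indicate how far and which way the lens needs to move. The code below is a minimal one-dimensional illustration, not Samsung’s algorithm: the scene data, the function names and the sum-of-absolute-differences matching are all assumptions made for this example, and a real system would work on 2D patches with sub-pixel precision and a calibrated mapping from shift to lens position.

```python
def shifted(signal, s):
    """Shift a signal right by s samples, padding with zeros."""
    n = len(signal)
    return [signal[i - s] if 0 <= i - s < n else 0 for i in range(n)]


def estimate_shift(left, right, max_shift):
    """Find the integer shift s that best aligns right[i + s] with left[i].

    Uses the mean absolute difference over the overlapping samples as the cost.
    """
    best_shift, best_cost = 0, float("inf")
    for s in range(-max_shift, max_shift + 1):
        cost, count = 0.0, 0
        for i in range(len(left)):
            j = i + s
            if 0 <= j < len(right):
                cost += abs(left[i] - right[j])
                count += 1
        cost /= count
        if cost < best_cost:
            best_shift, best_cost = s, cost
    return best_shift


# Hypothetical scene: one bright feature on a dark background.
scene = [0, 0, 0, 1, 4, 9, 4, 1, 0, 0, 0, 0, 0, 0, 0, 0]

left = shifted(scene, 0)   # view from the first location
right = shifted(scene, 3)  # view from the second location, offset by defocus

print(estimate_shift(left, right, max_shift=5))  # prints 3
```

A shift of zero would mean the two views already line up, which is to say the subject is in focus. Because the shift is measured directly, the camera knows which way to move the lens without hunting back and forth.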

Other image sensors, such as high-end Sony Exmor RS models, implement a small number of phase detection diodes across the sensor, but these usually account for just around one percent of pixels. In the Galaxy S7’s sensor, by contrast, every pixel is used for phase detection. The major benefit here is that focusing can be achieved much faster than before and focus time is less dependent on the content of pixels located in certain places on the sensor. Samsung shows off just how fast the Galaxy S7 (right) can focus compared with the Galaxy S6 (left) in the video below:

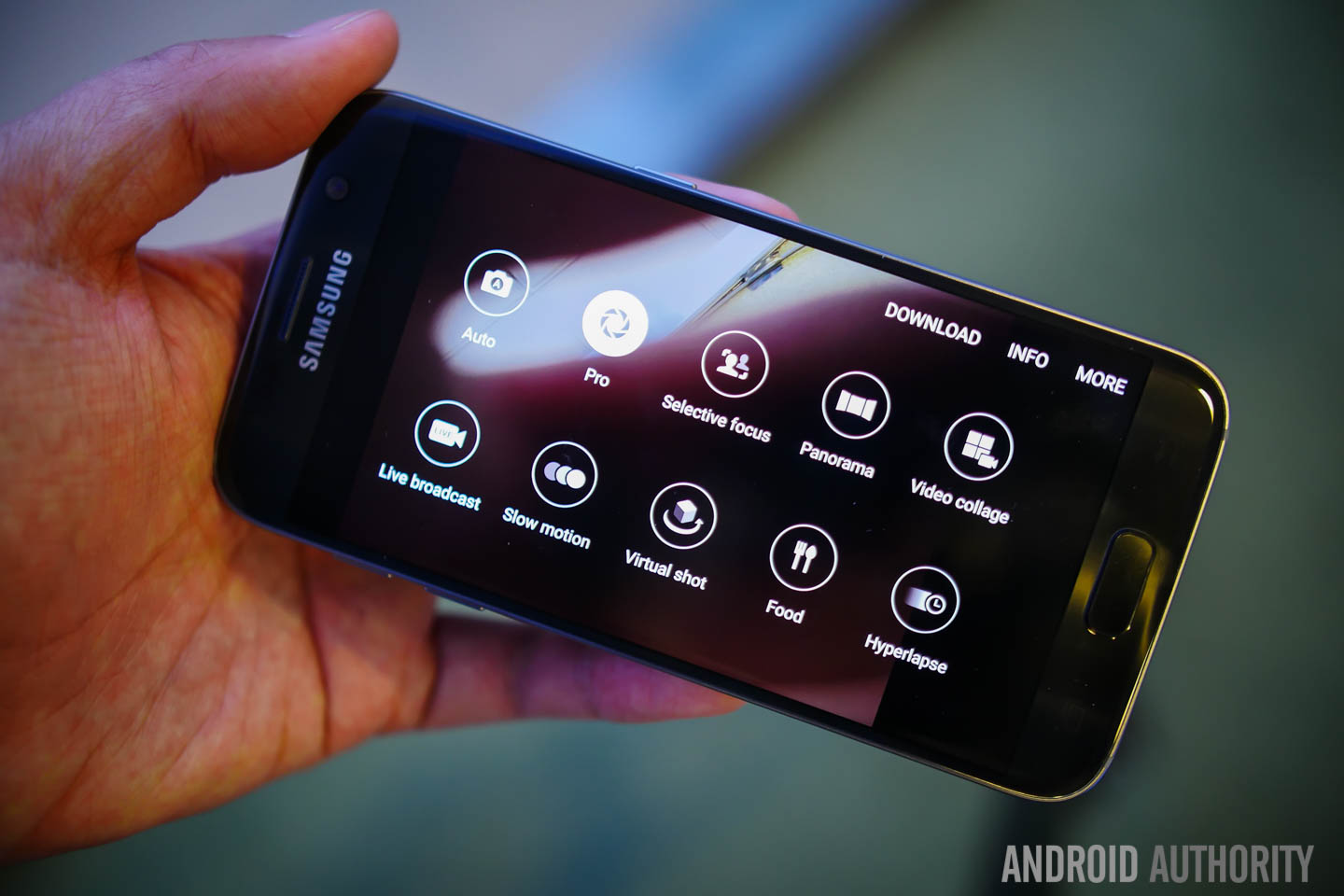

The Samsung Galaxy S7 has certainly set itself up with quite a different take on photography than the company’s previous flagship models, and on paper the theory sounds like it should really bump up the picture quality. Ultimately though, it’s the final image quality that matters the most and there’s more to a good picture than just the sensor. We’re going to be putting the handset’s camera through plenty of testing when it comes to doing a full review.
