Qualcomm launches its new AI Engine, works on existing Snapdragon processors

Most mobile machine learning (ML) tasks, like image or voice recognition, are currently performed in the cloud. Your smartphone sends data up to the cloud, where it is processed, and the results are returned to your device. However, the ability to perform machine learning tasks locally on your device, rather than remotely via the cloud, is becoming increasingly important. To help developers provide better machine learning-based enhancements, Qualcomm has launched a new brand to encapsulate its current ML offerings. The Qualcomm Artificial Intelligence (AI) Engine consists of several hardware and software components that app developers can use to provide “AI-powered user experiences”, with or without a network connection.

Machine learning consists of two distinct stages: training and inference. In the training stage the Machine Learning algorithm (probably a Neural Network) is fed lots of examples (photos, voice, whatever) along with the corresponding classification. Then, once trained, the Neural Network is used to classify new data. For example, the ML system might be trained with thousands of photos of dogs and then in the inference stage it is shown a new, previously unseen, picture of a dog and based on its training it will be able to recognize that the image contains a dog.
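To make the two stages concrete, here is a minimal sketch in Python. It is a toy, not anything Qualcomm ships: it swaps the neural network for a much simpler nearest-centroid classifier, and the feature vectors, labels and function names (`train`, `infer`) are all made up for illustration.

```python
# Toy illustration of the two stages: training, then inference.
# All data and names are hypothetical; a real system would use a neural network.

def train(examples):
    """Training: compute one mean feature vector (centroid) per label."""
    sums, counts = {}, {}
    for features, label in examples:
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [v / counts[label] for v in acc] for label, acc in sums.items()}


def infer(model, features):
    """Inference: return the label whose centroid is closest to the new input."""
    def squared_distance(centroid):
        return sum((a - b) ** 2 for a, b in zip(centroid, features))
    return min(model, key=lambda label: squared_distance(model[label]))


# Labeled examples: (made-up feature vector, classification).
training_data = [
    ([0.9, 0.8], "dog"),
    ([0.8, 0.9], "dog"),
    ([0.1, 0.2], "not dog"),
    ([0.2, 0.1], "not dog"),
]

model = train(training_data)             # done once, usually off the device
prediction = infer(model, [0.85, 0.75])  # a new, previously unseen input
print(prediction)
```

The split matters for phones because only `infer` needs to run on the handset. The expensive `train` step can happen elsewhere, and the resulting model is simply shipped to the device.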

This inference stage works on almost any type of processing unit including CPUs, GPUs, DSPs and dedicated inference engines like Huawei’s Neural Processing Unit (NPU) or Arm’s recently announced Machine Learning Processor. The key difference between these processing units is how fast they can perform the inference and how much power they use to do it.

There is a valid argument that dedicated hardware is not needed to perform inference, and that is Qualcomm’s current position. However, the performance and efficiency argument for dedicated hardware is also valid, and it is the position currently touted by Arm and Huawei.

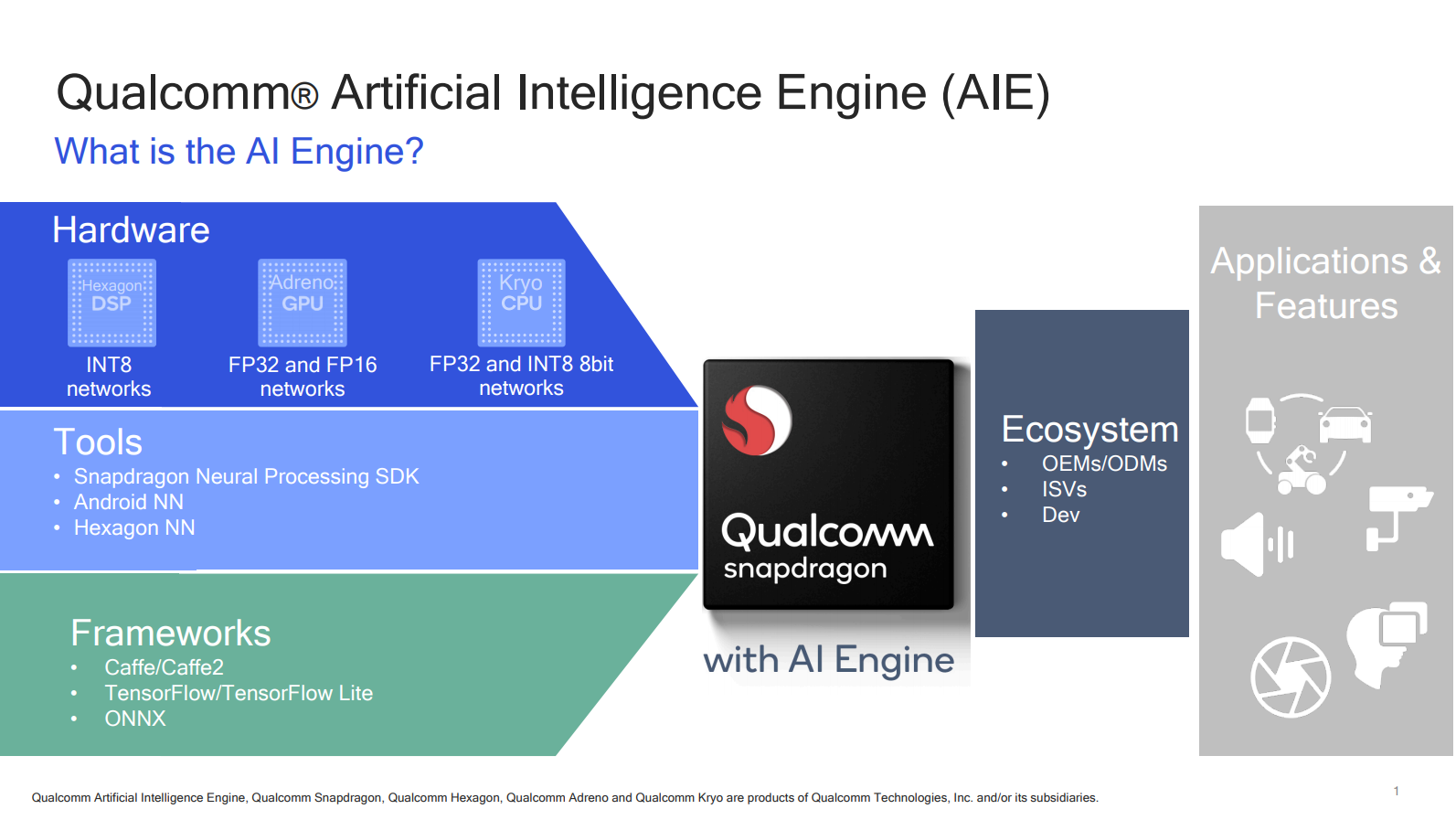

The Qualcomm AI Engine uses the existing CPU, GPU and DSP components found in some of the leading Snapdragon processors (the 845, the 835, the 820 and the 660). The key component in these processors is the Hexagon DSP with the Hexagon Vector eXtensions (HVX).

On the software side the Qualcomm AI Engine offers three components:

- Snapdragon Neural Processing Engine (NPE) software framework – A top-level heterogeneous library that supports the TensorFlow, Caffe and Caffe2 frameworks, in addition to the Open Neural Network Exchange (ONNX) interchange format. The idea here is that the NPE picks the right component (CPU, GPU, DSP) for any given task.

- Android Oreo’s Neural Networks API – Support for Android’s NN API will appear first in the Snapdragon 845.

- Hexagon Neural Network (NN) library – Works exclusively with the Hexagon Vector Processor.
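
The idea of a heterogeneous library picking the right component for a task can be sketched in a few lines of Python. To be clear, this is not the NPE's actual API or selection logic, which Qualcomm has not detailed here. The `Backend` class, the `pick_backend` function, the preference order and the operation names are all assumptions invented for illustration.

```python
# Hypothetical sketch of heterogeneous dispatch. This is NOT the real NPE API.
from dataclasses import dataclass


@dataclass(frozen=True)
class Backend:
    name: str
    available: bool
    supported_ops: frozenset


def pick_backend(required_ops, backends):
    """Return the first backend, in preference order, that is present
    on the device and supports every operation the model needs."""
    for backend in backends:
        if backend.available and set(required_ops) <= backend.supported_ops:
            return backend.name
    raise RuntimeError("no backend can run this model")


# Assumed preference order and operation sets, made up for this example.
BACKENDS = [
    Backend("DSP", True, frozenset({"conv", "relu", "pool"})),
    Backend("GPU", True, frozenset({"conv", "relu", "pool", "softmax"})),
    Backend("CPU", True, frozenset({"conv", "relu", "pool", "softmax", "lstm"})),
]

print(pick_backend({"conv", "relu"}, BACKENDS))     # fits the first choice
print(pick_backend({"conv", "softmax"}, BACKENDS))  # falls through to the GPU
print(pick_backend({"lstm"}, BACKENDS))             # only the CPU supports it
```

In this sketch the CPU acts as the catch-all fallback, which matches the general point that inference runs on almost any processing unit, just not equally fast or efficiently.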

Several of Qualcomm’s device partners are already using the AI Engine’s components. They include Xiaomi, OnePlus, Motorola, ASUS and ZTE.

As for software developers, Qualcomm is working with several different companies. For example, SenseTime and Face++ offer a variety of pre-trained neural networks for image and camera features including single camera bokeh, face unlock, and scene detection. Uncanny Vision, on the other hand, provides optimized models for people, vehicle and license plate detection and recognition. Also, Tencent recently launched a feature in the Mobile QQ app called High Energy Dance Studio. The Mobile QQ application for Android uses AI Engine components to accelerate frame rates of the game.

While Qualcomm’s AI Engine is indeed capable, the cynics among you may agree with me that this “branding” effort is really just a reaction from Qualcomm to Arm’s Project Trillium announcement from last week. I wouldn’t be surprised if future Snapdragon processors include a dedicated inference engine, either Arm’s new Machine Learning Processor or an in-house development from Qualcomm. Time will tell.

What do you think of Qualcomm’s AI Engine? Should Qualcomm include a dedicated “NPU” in its processors? Please let me know in the comments below.
