
Those slick, new Google Lens features are rolling out now — here's how they work

- The Google Lens features announced at I/O 2018 are rolling out to users right now.

- The new features include the ability to copy text from the real world to your phone.

- No app updates are required on the user’s part, as the rollout seems to be on Google’s end.

The new Google Lens features made quite an impression at Google I/O 2018, where the company demonstrated a variety of machine vision capabilities. Now, it looks like Google is quietly delivering a new UI and these features to a growing number of users.

The rollout, first spotted by 9to5Google, has also arrived on my personal Oreo-toting Galaxy S8, with no user-side updates required. You clearly don’t need to run the Android P developer preview to get it, either. The update brings a new UI and an overhauled introductory section. However, the redesign plays second fiddle to the new features.

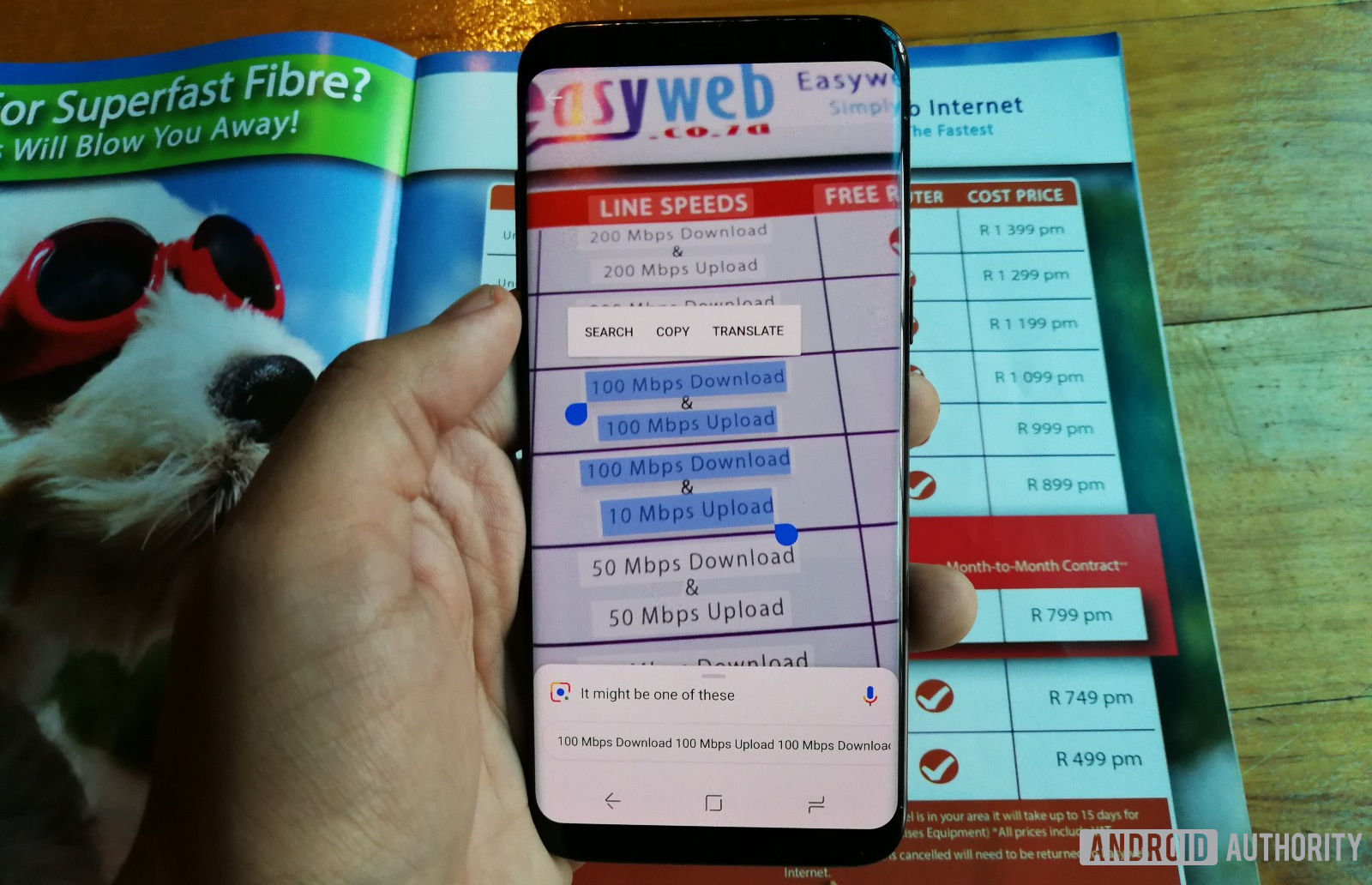

First up, we have the slick ability to copy text from the real world (such as documents and magazines) onto your smartphone’s clipboard. It’s already very polished: you tap on the text in your viewfinder to help Lens recognize the words, then tap and hold on the words to bring up the usual copy/paste dialog. This could be a great alternative to simply taking a photo of the text, or a handy way to transcribe a quote from a printed press release.

Then there’s new functionality that passively surfaces “anchor points” of information in your viewfinder, with no need for the user to activate it. You simply tap on the anchor when it pops up to instantly reveal information about the object or subject in question. This works well enough, with anchors taking two or three seconds on average to pop up in my experience so far.

This information isn’t always accurate, however: Google Lens had trouble identifying a Nintendo Switch, for example. It also had trouble identifying plant species, but that’s generally a tough task for machine vision and machine learning anyway. If Google Lens still doesn’t identify the object you’re looking at, you can simply tap on it to search manually.

The final Google Lens feature is the ability to identify objects and clothing with a similar style. So point your camera at a particular table or jacket and you should find one with a similar style. I say “should,” because Google gave me seemingly generic results for a couch.

Nevertheless, I’m pretty impressed with the text-copying functionality already, and the anchor point feature is intuitive too. Now, about launching those Google Photos features outlined at I/O 2018…

Not sure if you have the new Google Lens? Try holding down your home button to summon Google Assistant. If you’ve got the new update, you should see the Google Lens icon in the bottom right corner.

Have you received the new Google Lens? Then drop us a comment below, along with your phone model!
