Google Pixel 4 Motion Sense explained: What it can (and can't) do for now

Today, Google took the wraps off its latest pair of smartphones: the Google Pixel 4 and Pixel 4 XL. Both devices come with a new feature called Motion Sense, Google’s marketing name for the Pixel 4’s radar-based features.

You might be thinking: “Radar? As in what they use to track airplanes and ships?” Yes, that is the very same technology housed in the front-facing sensor system of the Pixel 4. Radar lets users control aspects of their phone without physically touching the device.

Although contactless smartphone control is definitely a highlight of Motion Sense, it’s not the only thing that’s possible.

Project Soli: The beginning of Motion Sense

Motion Sense had its beginnings years ago as part of Google’s Project Soli. A product of Google’s experimental Advanced Technology and Projects (ATAP) group, Soli set out to discover whether radar had any practical applications in mobile devices, including smartphones, smartwatches, and laptops.

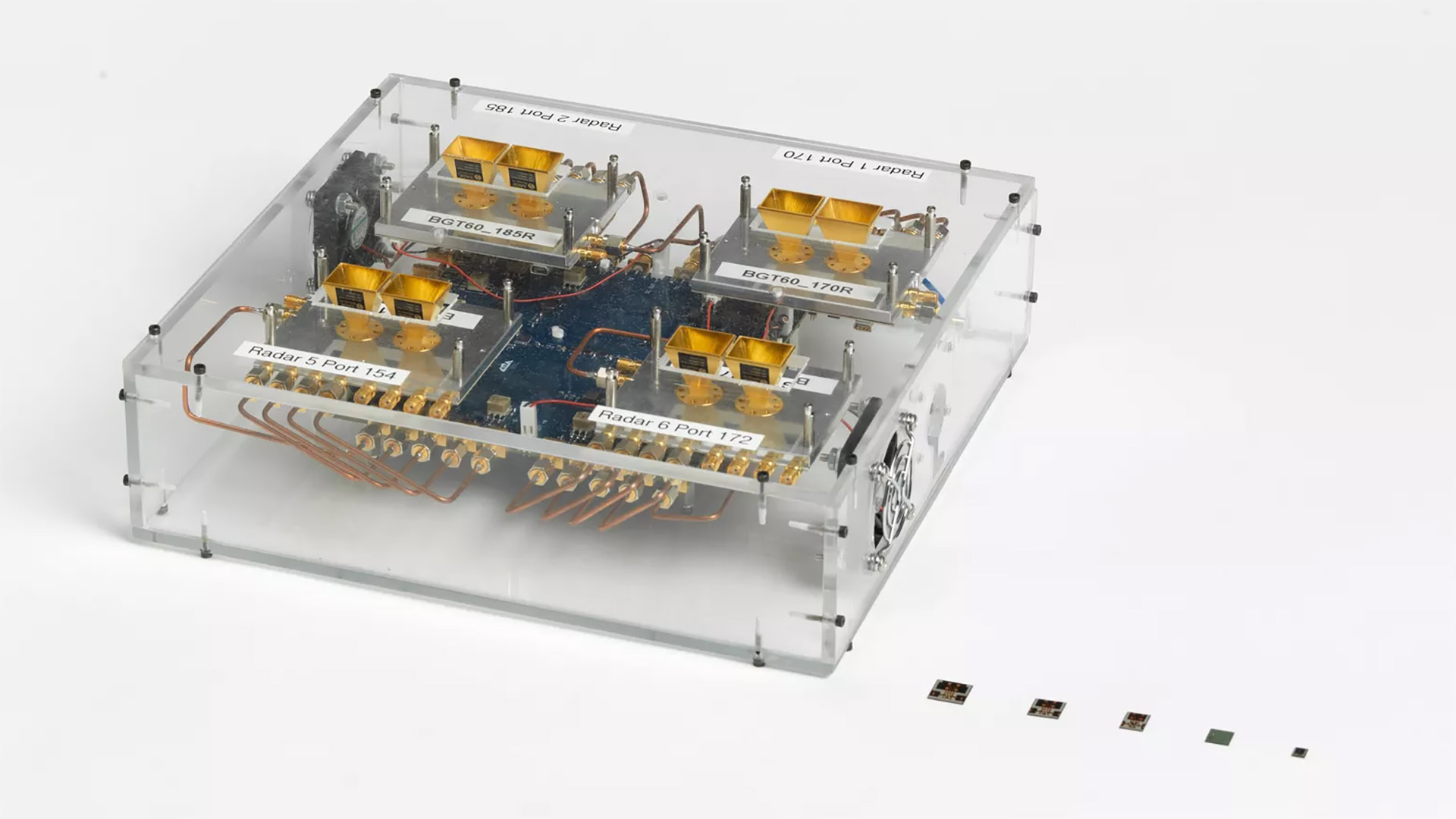

The biggest hurdle for Project Soli was size: a traditional radar system is far too large to be practical on any mobile device. It took years for the team to shrink the device down to a more manageable size.

Even after the team succeeded in dramatically shrinking Soli (see the image above), it still needed to figure out how to translate radar signals into something a smartphone would understand. This might sound simple, but it’s actually quite tricky.

If Motion Sense seems pretty simple right now, don't hold that against it. This is very, very complicated technology.

For example, if someone told you to swipe your hand in front of a radar sensor, how would you do it? Would you go from left to right? Right to left? Up and down? Would your palm be open or closed? Would you go slow or fast? These are all variables the team needed to take into account just to interpret something as simple as a swipe of the hand.

With this in mind, when you take the Google Pixel 4 out of its box, the number of functions related to Motion Sense will be pretty limited. That’s not a ding on the system, though: it’s simply a current limitation due to the technology still being in its infancy. As things move forward, more and more functions will be possible.

Motion Sense features: What you can do right now

Motion Sense currently relies on three kinds of input to trigger smartphone responses: presence, reach, and gestures. They are listed here roughly in order from simplest to most intricate.

Let’s start with presence. The radar system within the Pixel 4 creates a sensor field with about a one-foot radius around the device at all times (unless it is face down). In the simplest terms, this field determines whether or not you are near your phone. If you are near your phone, certain things will happen. For example, the always-on display will illuminate if you are nearby and turn off if you aren’t.

The next system is reach. This system tracks whether or not you are in the process of reaching for your phone. If you are, it will respond in simple ways, such as turning on the front-facing sensors that control Face Unlock. It will also lower the volume of a ringing alarm or incoming call as your hand approaches.

Finally, there are gestures. A swipe of your hand in front of your Pixel 4 will have multiple effects depending on what’s happening on your device at the moment. For example, a swipe over an incoming call will reject it and a swipe over an alarm will turn the alarm off. You can also skip tracks with this gesture if you’re listening to music.
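To make the three tiers concrete, here is a minimal Python sketch of how presence, reach, and a swipe could map to the responses described above. This is purely illustrative: the names (`Signal`, `PhoneState`, `respond`) and the action labels are made up for this example and are not Google's code or any real Android API.

```python
from enum import Enum, auto
from typing import List


class Signal(Enum):
    """The three kinds of input described in the article (hypothetical names)."""
    PRESENCE = auto()
    REACH = auto()
    SWIPE = auto()


class PhoneState(Enum):
    """What the phone is doing when the signal arrives (hypothetical names)."""
    IDLE = auto()
    RINGING = auto()
    ALARM = auto()
    PLAYING_MUSIC = auto()


# A swipe means different things depending on what the phone is doing.
SWIPE_ACTIONS = {
    PhoneState.RINGING: "reject_call",
    PhoneState.ALARM: "dismiss_alarm",
    PhoneState.PLAYING_MUSIC: "skip_track",
}


def respond(signal: Signal, state: PhoneState, nearby: bool = True) -> List[str]:
    """Return illustrative action labels for a signal in a given phone state."""
    if signal is Signal.PRESENCE:
        # Presence: the always-on display follows whether someone is nearby.
        return ["display_on"] if nearby else ["display_off"]
    if signal is Signal.REACH:
        # Reach: wake the Face Unlock sensors, and quiet a call or alarm.
        actions = ["wake_face_unlock_sensors"]
        if state in (PhoneState.RINGING, PhoneState.ALARM):
            actions.append("lower_volume")
        return actions
    if signal is Signal.SWIPE:
        # Gesture: look up the swipe's meaning; do nothing if there is none.
        action = SWIPE_ACTIONS.get(state)
        return [action] if action else []
    return []


print(respond(Signal.SWIPE, PhoneState.PLAYING_MUSIC))
print(respond(Signal.REACH, PhoneState.RINGING))
```

The point of the sketch is that the same swipe produces a different result depending on context, which is why the set of supported states matters as much as the set of supported gestures.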

It should be noted that, because radar sensors are government-regulated, the Pixel 4 will only be sold in certain areas around the world. As of now, that includes the United States, Australia, Canada, France, Germany, Ireland, Italy, Japan, Singapore, Spain, Taiwan, and the United Kingdom. It’s possible other countries could see the Pixel 4 in the future if Google can meet their individual radar regulations.

What you could possibly do in the future

The trickiest part of making Motion Sense work was the implementation of the hardware. With that in place, Google just needs to issue software updates to add more intricate gestures.

The sky is pretty much the limit for how far Google could take this. For example, it could implement a gesture that turns on Do Not Disturb mode. This could be great if you’re in a meeting and forgot to silence your phone: you could do so without even needing to touch it.

Another idea is to implement a gesture that takes a photo. You could prop up your phone, gather your group of friends, and then make a gesture from a distance, and snap: your phone takes the shot, no Bluetooth controller or selfie stick necessary.

It’s quite possible Google has these ideas and others in mind already.

The true test for Motion Sense will be whether or not Google can turn it into more than a gimmick. We’ve seen other smartphone gesture systems that offered similar functionality come and go (none were based on radar, though, which is much more accurate and energy-efficient than any prior system). Can Motion Sense reach new heights and rise above these other systems? We’ll need to wait to see how seriously Google takes Motion Sense going forward.