Google AI’s photo recognition achieves 94 percent accuracy

We’ve all enjoyed the simple benefits of Google’s artificial intelligence photo recognition. Google Photos employs a very stripped-down version of the algorithm to identify pictures as containing cats, dogs, food, or specific people. However, the search giant has been working on much more advanced photo recognition capabilities, and today it released that work to developers.

The Google Research Blog reports that the Google Brain team’s AI image captioning system has achieved a 93.9 percent accuracy rating. The team’s 2014 results used the Inception V1 image classification model and achieved 89.6 percent accuracy. That might not seem like a vast improvement, but for a task that emulates natural human language, such as captioning a photo, each additional point of accuracy is harder to win than the last.
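One way to put those two figures in perspective is to compare error rates rather than accuracy. The short Python sketch below does that arithmetic using only the 89.6 and 93.9 percent figures quoted above. The function name `relative_error_reduction` is our own, made up for illustration, and has nothing to do with Google's code.

```python
def relative_error_reduction(old_accuracy_pct: float, new_accuracy_pct: float) -> float:
    """Return the fraction of the old error rate that the new result removes.

    Both arguments are accuracy percentages (for example 89.6).
    This is an illustrative helper, not part of any Google or TensorFlow API.
    """
    old_error = 100.0 - old_accuracy_pct  # share of cases the older model got wrong
    new_error = 100.0 - new_accuracy_pct  # share of cases the newer model got wrong
    return (old_error - new_error) / old_error


# Accuracy figures quoted in the article for the 2014 and current results.
reduction = relative_error_reduction(89.6, 93.9)
print(f"Relative error reduction: {reduction:.1%}")
```

Seen this way, the 4.3-point gain means the error rate dropped from 10.4 percent to 6.1 percent, so roughly 41 percent of the earlier mistakes are gone.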

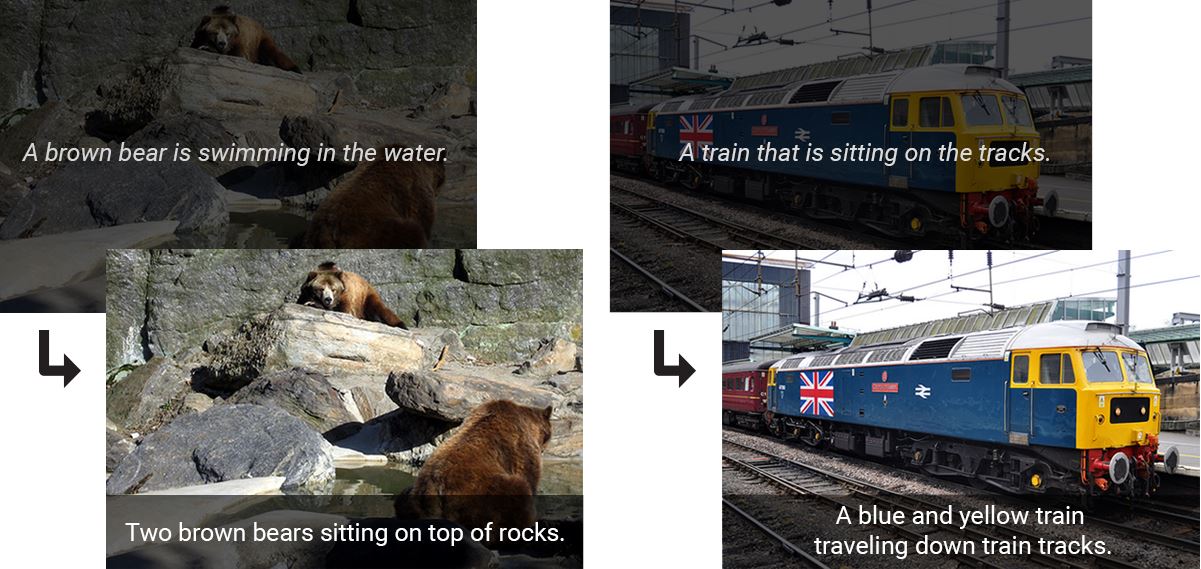

The image above demonstrates improvements since 2014. The system is not only much better at identifying objects, but it’s also better at describing them with specific colors and actions.

Part of what makes this year’s system, built on the Inception V3 model, so effective is that it not only identifies individual objects within a photo but also describes how they relate to one another. Google Brain Team Software Engineer Chris Shallue describes it thus:

“For example, an image classification model will tell you that a dog, grass and a frisbee are in the image, but a natural description should also tell you the color of the grass and how the dog relates to the frisbee.”

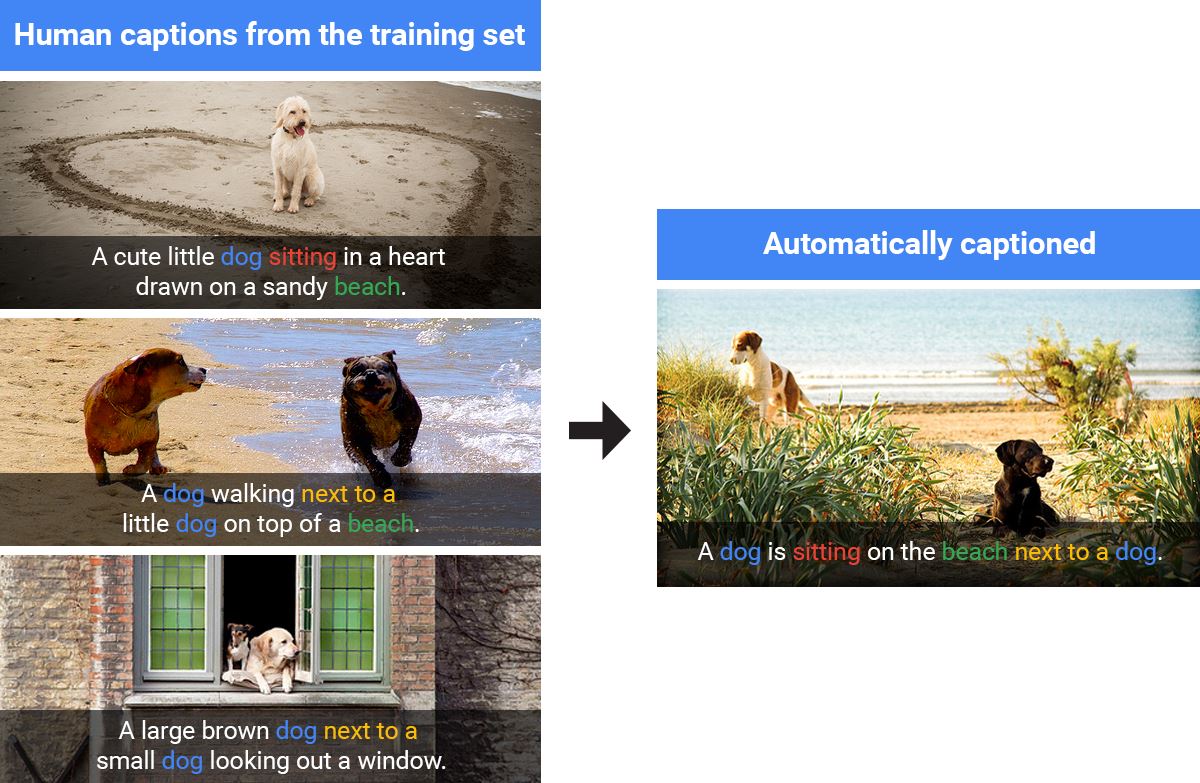

These results were achieved by having humans caption hundreds of thousands of photos, and then using that data to train the model in TensorFlow. Although the algorithm will reuse human-generated captions if the image is similar enough to one it has seen, it will also generate its own descriptions on the fly when presented with something new.

Google has released this latest version of the captioning model as open source in TensorFlow, in the hope that developers will take what the team has built so far and run with it. If you want to put this technology to your own use, check out the model’s home page here. If you’re fascinated by the technical aspects of photo recognition, you can read the paper Google recently released about it here.

As always, let us know what you think about this evolving technology in the comments below!
