
I tested Google’s upcoming Gemini Nano 4 — its faster, smarter AI isn’t what I expected

Earlier this month, Google lifted the lid on its latest and most powerful Gemma 4 AI models that you can run on your own hardware. Gemma competes on performance with other models like GLM5 and Qwen3.5, while Google’s closed Gemini model remains its flagship to take on OpenAI and Anthropic. Still, the exciting news is that Gemma 4 has versions small enough to run on your smartphone.

Specifically, Gemma 4 E2B and E4B are distilled down to effective two- and four-billion-parameter footprints. At just 4.2GB and 5.9GB, these can more easily fit into phones with 12GB of RAM or more. These are also the foundations for Google’s next-generation Gemini Nano smartphone models — Gemini Nano 4 Fast and Nano 4 Full — scheduled to launch later this year.

Google notes that the new models offer improved reasoning, math skills, time understanding, and image capabilities. The Full model retains greater reasoning power for complex tasks, while Fast is optimized for lower latency responses. In fact, Google claims the Fast model is up to 4x speedier than previous versions and consumes up to 60% less battery when running on the TPU.

Gemma 4 promises to be Google's fastest and smartest on-device AI tool.

That all sounds pretty impressive, and to help developers make a head start on integrating these models with their Android apps, Google has released early access to Gemini Nano 4 via an AICore Developer Preview. I grabbed a copy of the app on my Google Pixel 10 Pro XL, which lets you run these AI models on the Tensor G5’s TPU, to see just what sort of improvements might be on offer when Nano 4 arrives in prime time.

The AICore Developer Preview app offers access to Gemini Nano 3, Nano 4 Fast, and Nano 4 Full, so I decided to run comparisons against the existing mainstream model to better gauge exactly what’s changing. Of course, things might be tweaked here and there before full release, but let’s jump in anyway.


Testing some prompts

The first thing I wanted to check was how well all of these models perform on tasks you might reasonably run on an on-device AI model. Nothing huge or multi-step. Instead, I focused on logic, math, and text-summary prompts to see how they fared.

As smart as modern LLMs are, they are still susceptible to very silly mistakes, especially when condensed to so few parameters. I managed to catch all three out with the classic “how many r’s in strawberry” question. However, Nano 4 Full actually got this right if I capitalized the R. It’s unclear why this matters, but such quirks are common in large language models. Nano 3 also made a mistake with a quick algebra question, which supports Google’s claim that its new Nano is better at math and reasoning.

Despite some errors, all three models produced pretty decent results on the logic and text-summary prompts I gave them. The table below shows just some of the prompts I experimented with.

| Prompt | Gemini Nano 3 | Gemini Nano 4 Fast | Gemini Nano 4 Full |
|---|---|---|---|
| You buy 3 items at $4.70 each and pay with $20. What change do you get? | Here's how to calculate the change: * **Cost of the items:** 3 items * $4.70/item = $14.10 * **Change:** $20.00 - $14.10 = $5.90 You get $5.90 in change. | Here's how to calculate the change: **1. Calculate the total cost of the items:** * 3 items * $4.70/item = $14.10 **2. Calculate the change:** * Total paid - Total cost = Change * $20.00 - $14.10 = $5.90 **Answer:** You get **$5.90** in change. | Here's how to solve the problem: 1. **Calculate the total cost:** 3 items * $4.70/item = $14.10 2. **Calculate the change:** $20.00 (amount paid) - $14.10 (total cost) = $5.90 You will get **$5.90** in change. |
| How many r's in the word strawberry? | There is one "r" in the word strawberry. | There are **two** "r"s in the word "strawberry". | There are **two** "r"s in the word "strawberry". |
| The event is at 6PM on August 18th, and a reminder should be sent out 10 hours before the event. Return the time and date the reminder should be sent | The reminder should be sent at **8:00 AM on August 18th**. Here's the breakdown: * Event time: 6:00 PM on August 18th * Reminder time before event: 10 hours * Calculation: 6:00 PM - 10 hours = 8:00 AM | Here's how to calculate the reminder time and date: * **Event Time:** 6:00 PM on August 18th * **Reminder Time:** 10 hours before the event **Calculation:** 1. **Subtract 10 hours from 6:00 PM:** * 6:00 PM minus 6 hours is 12:00 PM (Noon) on the same day (August 18th). * You still need to subtract 4 more hours (10 - 6 = 4). * 12:00 PM minus 4 hours is 8:00 AM. **The reminder should be sent at 8:00 AM on August 18th.** | The reminder should be sent on **August 18th at 8:00 AM**. |
| Without writing steps, solve: 3x+27=48 | x = 8 | $3x = 48 - 27$ $3x = 21$ $x = \frac{21}{3}$ $x = 7$ | $3x = 21$ $x = 7$ |
| Sumarize the key info from this text into a single sentence: The report claims that customer satisfaction improved significantly after the redesign, rising from 78% to 85%, yet survey response rates dropped by nearly half during the same period. This raises questions about whether the improvement reflects actual sentiment or simply a smaller, potentially biased sample of respondents. | While customer satisfaction reportedly increased after a redesign, a significant drop in survey response rates raises concerns about the reliability and representativeness of the reported improvement. | Customer satisfaction significantly improved after a redesign, but the drop in survey response rates suggests the improvement may be skewed by a smaller, potentially biased sample. | While customer satisfaction rose significantly after the redesign, the report's credibility is questioned due to a nearly 50% drop in survey response rates. |
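For reference, the expected answers to the four checkable prompts can be reproduced in a few lines of Python. This is only a sanity-check sketch, not part of the testing described here: the variable names are illustrative, and the year in the reminder calculation is an arbitrary placeholder because the prompt does not give one.

```python
from datetime import datetime, timedelta
from fractions import Fraction

# Prompt 1: three items at $4.70 each, paid with $20.
# Work in cents so floating-point rounding cannot creep in.
change_cents = 2000 - 3 * 470

# Prompt 2: count the letter "r" in "strawberry".
r_count = "strawberry".count("r")

# Prompt 3: 10 hours before 6 PM on August 18th.
# The prompt gives no year; 2026 is an arbitrary placeholder.
reminder = datetime(2026, 8, 18, 18, 0) - timedelta(hours=10)

# Prompt 4: solve 3x + 27 = 48 for x.
x = Fraction(48 - 27, 3)

print(f"change: ${change_cents / 100:.2f}")
print(f"r count: {r_count}")
print(f"reminder: {reminder:%B %d, %I:%M %p}")
print(f"x = {x}")
```

The checks give $5.90 in change, three r's, a reminder at 8:00 AM on August 18th, and x = 7, which is the yardstick for reading the table above.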

Even after just a few prompts, I noticed some common themes and differences between Google’s models. Nano 4 is more likely to produce verbose answers and spend more time thinking through its steps, even when you explicitly ask it not to. It’s slightly less direct. That has advantages for accuracy, but it can mean that simple, direct requests yield multiple possible answers you might not want. This is particularly true of the Fast model, while Nano 4 Full is sometimes more confident in giving a concise answer.

Even in my short time testing, it’s safe to say that the new Nano 4 Full gives the best answers in terms of accuracy and conciseness, but it is rather slow. Nano 4 Fast is more accurate than Nano 3, but is also much more verbose, which may not appeal to all users (myself included). That said, Google says there is a major speed boost to be had here, so let’s see if it’s worth the trade-off.

How fast is Nano 4 Fast?

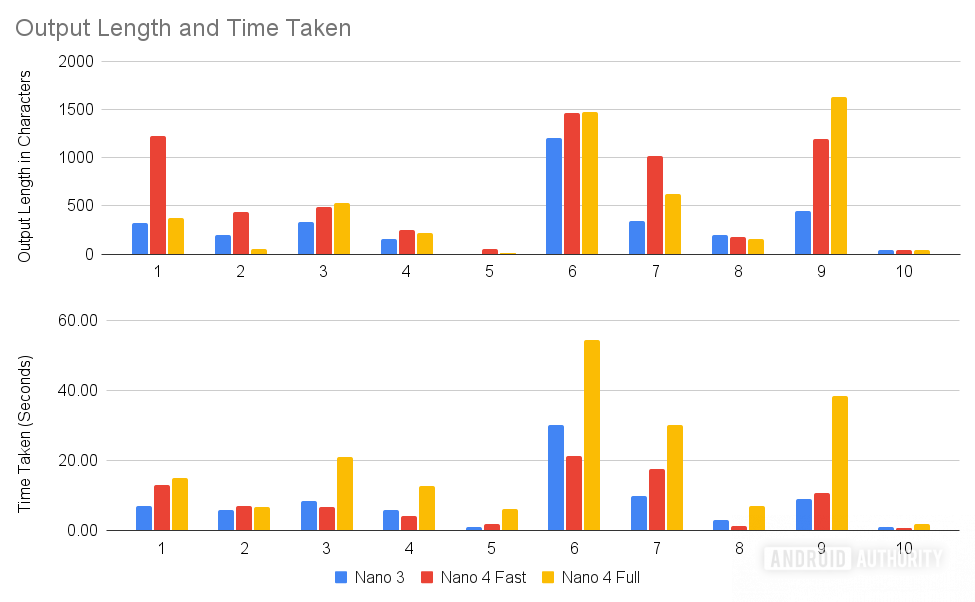

Google’s AICore Developer Preview app also tracks the inference time, that is, how long it took from submitting the prompt to producing the output. I made sure the model was loaded first for each test, since that can influence initial results, and kept a log for each test prompt.

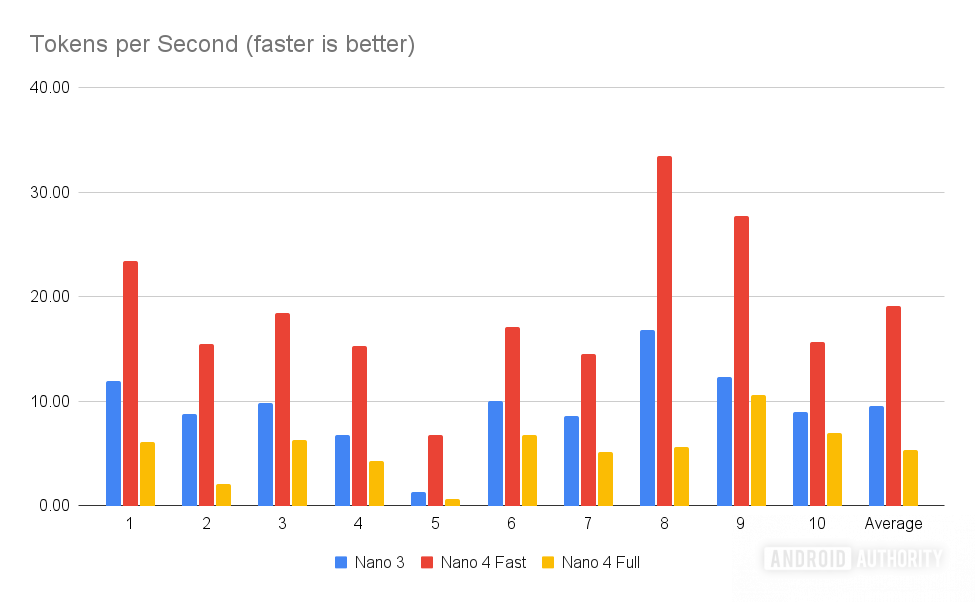

However, as you’ve seen, result lengths differ by model, so I counted the number of characters in the output. Assuming Gemini Nano uses the same rough four characters per token as Gemma, we can calculate the number of tokens generated per second, a classic metric in tracking AI text generation performance. Here are the results.
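The estimate described above is simple enough to sketch in Python. This is a minimal illustration under the same assumption of roughly four characters per token. The function name `tokens_per_second` and the sample figures are hypothetical and do not come from the test logs.

```python
CHARS_PER_TOKEN = 4  # rough ratio assumed in the article, not a measured value


def tokens_per_second(output_chars: int, inference_seconds: float) -> float:
    """Estimate generation speed from output length and inference time."""
    if inference_seconds <= 0:
        raise ValueError("inference_seconds must be positive")
    estimated_tokens = output_chars / CHARS_PER_TOKEN
    return estimated_tokens / inference_seconds


# Hypothetical reading: a 400-character answer produced in 5 seconds.
print(tokens_per_second(400, 5.0))  # 20.0
```

Because the character-to-token ratio is only an approximation, the resulting figures are better suited to comparing models against each other than to quoting as absolute throughput.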

Typically, we can read around 5-10 tokens per second, making it a good benchmark for how quickly we want results to appear, at a bare minimum. However, tasks like coding are ideally even faster.

As we can see, Gemini Nano 3 was already fairly acceptable in this regard, averaging 9.6 t/s across the range of prompts I tested. The upcoming Gemini Nano 4 Fast is, well, even faster. It averages 19.14 t/s, making its outputs far faster than a human can read easily. That’s not 4x faster, but around 2x on average, with room to be faster still in best-case scenarios.

As expected, Gemini Nano 4 Full is much slower than Fast and is sluggish compared to the last-gen model as well. It outputs an average of 5.3 t/s, just about acceptable for a slow reader. However, some tasks are slower than that, with some results as low as 2 t/s, which is rather painful. Hence, Google plans to use this model for complex but less time-sensitive tasks.

Gemini Nano 4 Fast is quicker but also more talkative.

Despite Gemini Nano 4 Fast’s apparent speed advantage in raw output, there’s a major caveat. Nano 4 Fast is typically the most verbose of the three models tested here, regularly outputting 50% more text and sometimes even double the amount for the same query. While this means it can provide more thorough answers in a short timeframe, it also means the model is not always the fastest at actually finishing a full response. It’s also sometimes overly chatty, giving longer, more meandering answers than Nano 4 Full.

The end result is that Nano 4 Fast often performs similarly to Nano 3 overall; sometimes faster, but often a little slower to finish. Whether you prefer your AI responses to be snappy and concise or full of step-by-step explanations will likely determine whether you love or hate this change. Due to its slow token rate, Nano 4 Full is always the slowest in my tests.
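To see why extra verbosity can cancel out the speed gain, here is a rough sketch using only the average token rates quoted earlier (9.6 t/s for Nano 3 and 19.14 t/s for Nano 4 Fast). It assumes completion time is simply tokens divided by rate, which ignores per-prompt variation. The function name `relative_finish_time` is illustrative.

```python
NANO_3_TPS = 9.6          # average rate reported in the article
NANO_4_FAST_TPS = 19.14   # average rate reported in the article


def relative_finish_time(verbosity_ratio: float) -> float:
    """Nano 4 Fast's completion time as a multiple of Nano 3's,
    when Fast emits `verbosity_ratio` times as many tokens."""
    return verbosity_ratio * NANO_3_TPS / NANO_4_FAST_TPS


for ratio in (1.0, 1.5, 2.0):
    print(f"{ratio:.1f}x the text -> {relative_finish_time(ratio):.2f}x the time")

# Verbosity at which the two models would finish at the same moment.
break_even = NANO_4_FAST_TPS / NANO_3_TPS
print(f"break-even verbosity: {break_even:.2f}x")
```

On these averages, 50% more text still finishes in about three quarters of the time, while double the text lands at roughly break-even. Individual prompts ran above and below the average rates, which is consistent with Fast sometimes finishing behind Nano 3.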

Get ready for Gemini Nano 4

While looking at the capabilities of Gemini Nano 4 through a basic developer interface doesn’t do the final product justice, it has allowed us to glimpse some of the capabilities it will offer when embedded into Google’s own services and third-party apps in the coming months.

While not groundbreaking in terms of the responses I received during testing, the combination of Nano 4 Fast’s speedy replies and Nano 4 Full’s better accuracy definitely makes this a meaningful upgrade. Importantly, Nano 4 will run on AI accelerators in the latest chipsets from Google, MediaTek, and Qualcomm (with no word on Samsung’s Exynos), in addition to CPU support on older and other processors. This ensures that most of the Android ecosystem can benefit from the promised performance and energy efficiency gains when it rolls out to devices later this year.

With tool calling and thinking mode yet to come, Nano 4 could bring serious power to on-device AI.

I should caveat this by noting that small two- and four-billion-parameter models are never going to compete with the accuracy and capabilities of the LLMs with hundreds of billions of parameters that you can access via pricey cloud infrastructure. But for completing small, simple tasks with low latency and the added privacy of on-device processing, Nano 4 is a promising step forward.

And this is just the beginning: Google plans to continue improving its latest on-device models with advanced capabilities in the near future. Gemini Nano 4 is set to support tool calling, structured outputs, system prompts, and a thinking mode that will bring Nano closer to the feature set available in other larger, often cloud-dependent AI platforms. Tool support is essential if Google ever plans to pair concepts like Gemini Agent with the security and latency benefits of on-device AI.

If you fancy exploring what’s possible with Gemma 4 and Gemma 3 (as well as small versions of DeepSeek and Qwen), you should check out Google’s AI Edge Gallery — a more user-friendly way to explore longer text chats, query images, and transcribe audio with AI.
