Affiliate links on Android Authority may earn us a commission.

Best of Android: How we test performance

Testing how well a smartphone performs is no small source of controversy. People normally look to benchmarks for the answer, but the truth is there’s no single way to do it, so we have to pick and choose what we’re going to focus on to contextualize our results.

How we test

When we test a phone’s performance, we load a stock backup onto the phone, then run each of our target benchmarks three to five times and log the average. Averaging several runs keeps a single unexpected outlier from skewing the published result, so the score you read is more representative.
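The averaging step is simple enough to show directly. Below is a minimal sketch, assuming each benchmark’s scores are collected into a list; the helper name `average_score` and the sample numbers are hypothetical and not taken from our actual tooling.

```python
from statistics import mean


def average_score(runs: list[float]) -> float:
    """Average repeated runs of one benchmark (hypothetical helper).

    Enforces the three-to-five-run policy described above.
    """
    if not 3 <= len(runs) <= 5:
        raise ValueError("expected three to five runs")
    return mean(runs)


# Illustrative scores only, not real measurements.
runs = [1010.0, 990.0, 1000.0]
print(average_score(runs))  # 1000.0
```

Note that a mean dampens an outlier rather than discarding it; a median would ignore a single stray run entirely, at the cost of using less of the data.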

While that may seem a little too straightforward, there’s really nothing else to it: the software does the heavy lifting.

A word of caution

Most benchmarks look at only one aspect of performance, which is a sensible approach: people tend to forget that a smartphone is made of many parts, such as storage, RAM, a processor, an integrated GPU, and so on.

However, commercially available benchmarks try to establish peak performance and don’t necessarily offer much insight into how a phone will behave after you’ve been using it for a while. Quick benchmarks sometimes offer very different information from what many people are looking for when they ask: “how well does my phone perform?”

Sometimes the numbers don’t matter

As we’ve seen before, benchmarks can sometimes be gamed a bit. While companies have differing strategies and reasons for manipulating performance, it’s not always actually “cheating.” Sometimes it’s simply something a company does in certain situations to free up resources for common tasks that need a little more juice. Other times, it’s straight-up tomfoolery.

To sidestep this, we’ve partnered with friends in the benchmark industry to defeat straight-up software gaming, so we can tell whether a result has truly been gamed or not. For our CPU tests, we compare only results we’ve confirmed to be accurate.

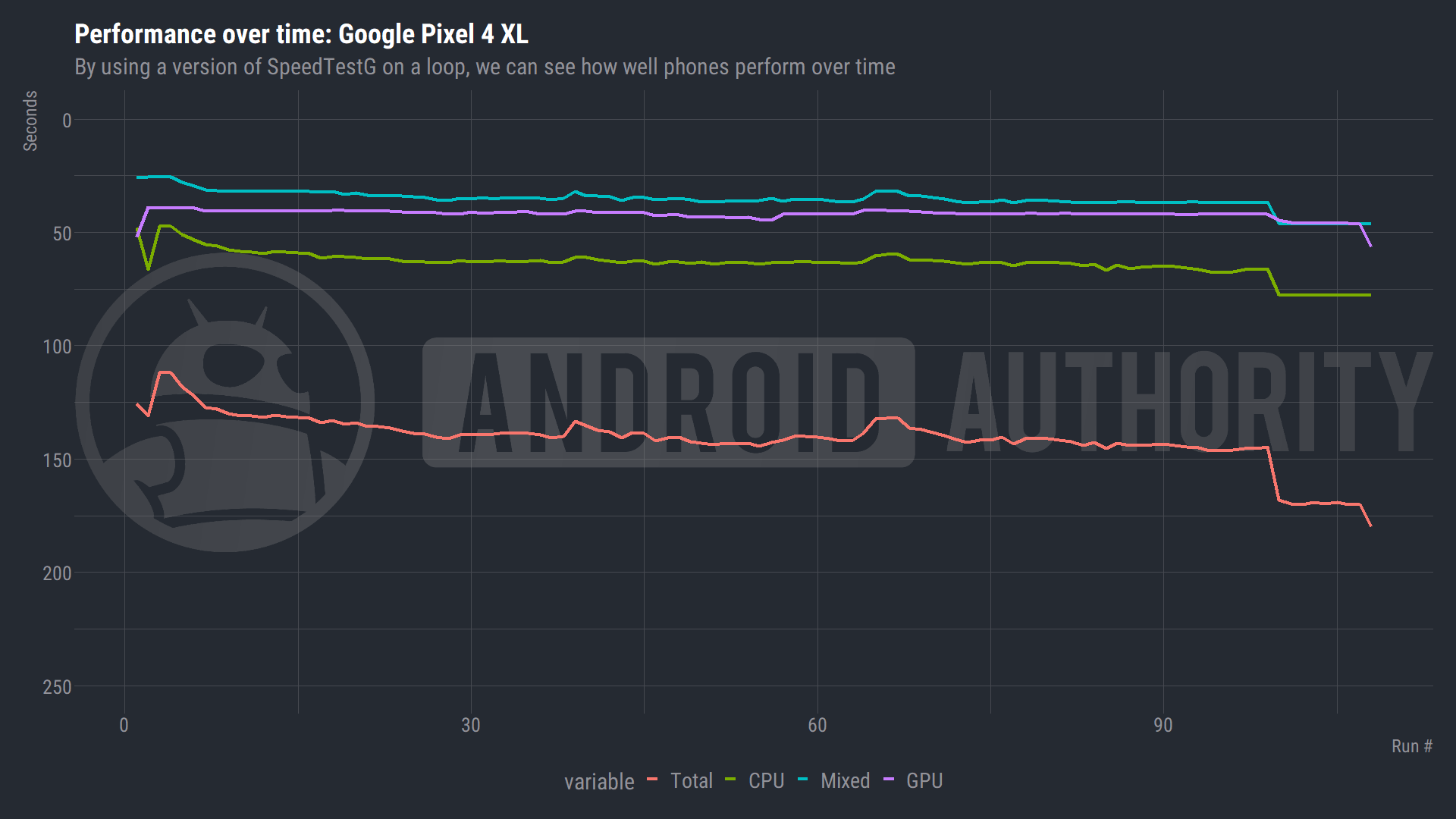

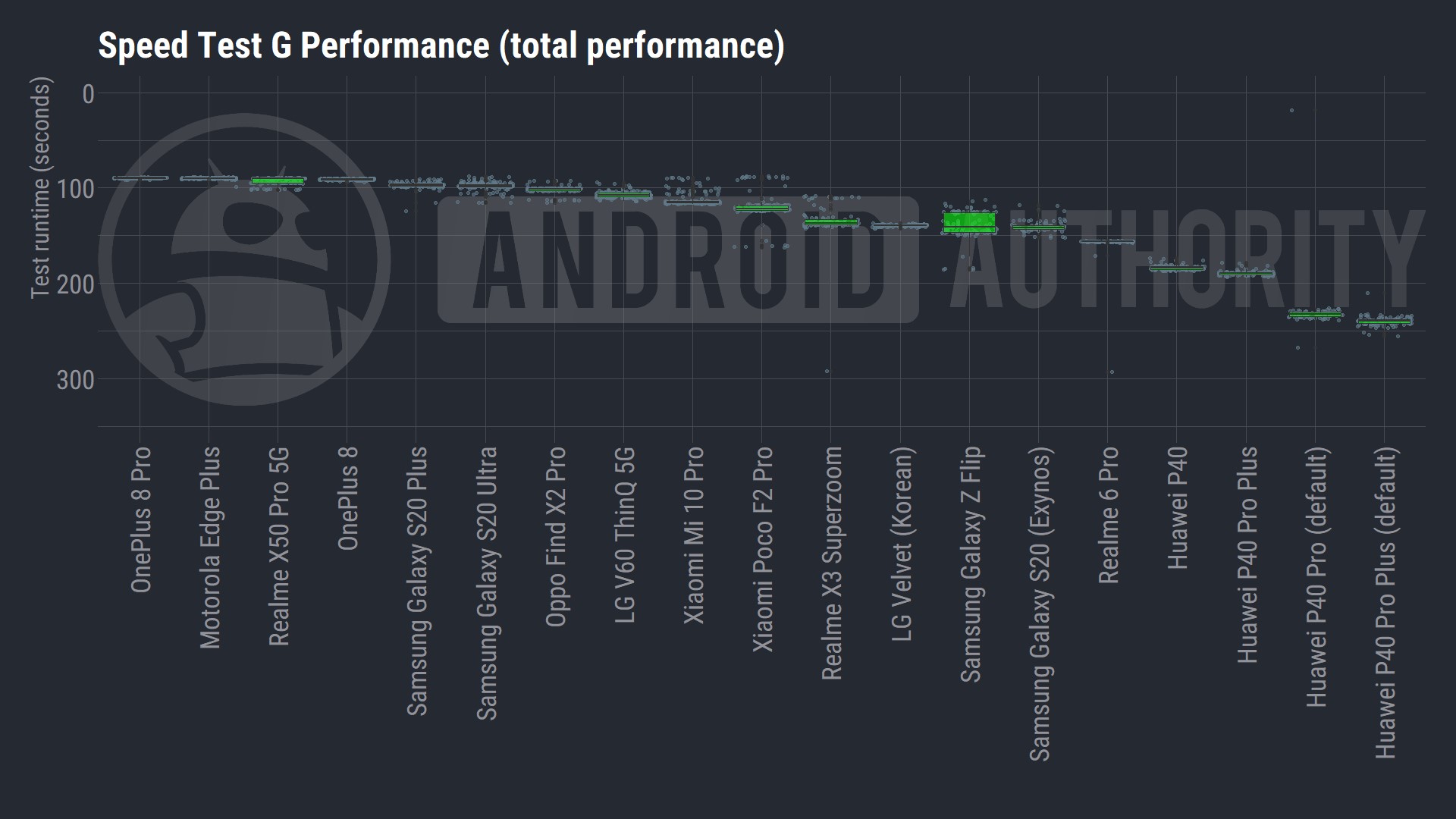

Sustained performance

Because what the average consumer views as “performance” is probably very different from a long string of numbers, we have our own in-house solution to test sustained performance. By running a version of Speed Test G on a loop, we can record and contextualize hundreds of data points across a phone’s entire discharge cycle. For example, we can wait out any temporary sport modes, catch chips that decide to underclock, and even get a worst-case battery life reading.

Of course, this is a completely new way of doing things, so some of the charts can be a little confusing. Don’t worry: we explain the results in each review so you don’t get lost in a sea of numbers. The main thing you need to know is that the faster a phone completes each run, the better.
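To illustrate how a looped test can reveal a chip underclocking over a discharge cycle, here is a minimal sketch. It assumes each looped run is logged as a completion time alongside the battery level, and it flags runs that took noticeably longer than the fastest (peak) run. The `Sample` type, the `throttled_runs` helper, the 10% tolerance, and the sample data are all hypothetical illustrations, not our actual analysis pipeline.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    battery_pct: int  # battery level when the looped run finished
    seconds: float    # completion time for one run (lower is better)


def throttled_runs(samples: list[Sample], tolerance: float = 0.10) -> list[Sample]:
    """Return runs noticeably slower than the fastest (peak) run.

    `tolerance` is an assumed 10% margin, not a figure from our methodology.
    """
    peak = min(s.seconds for s in samples)
    return [s for s in samples if s.seconds > peak * (1 + tolerance)]


# Illustrative data only: the phone slows down as the battery drains.
samples = [Sample(100, 60.0), Sample(90, 61.0), Sample(70, 68.0), Sample(40, 70.5)]
print([s.battery_pct for s in throttled_runs(samples)])  # [70, 40]
```

Comparing against the fastest run treats the early, best-case result as the baseline, which is exactly the snapshot a quick benchmark would report.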

By collecting so many data points, we can also get a better handle on the performance you’ll actually get, rather than just a snapshot taken while the phone was in optimal conditions. We find that plotting one standard deviation either side of the average gives a better picture of where your performance results will fall most of the time.
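As a worked example of the standard-deviation band, the sketch below computes the mean completion time plus and minus one standard deviation and reports what share of runs falls inside that band. It assumes the sample standard deviation (the article doesn’t say which variant is used), and the helper names and timings are hypothetical.

```python
from statistics import mean, stdev


def one_sigma_band(times: list[float]) -> tuple[float, float]:
    """Return (mean - 1 sample std dev, mean + 1 sample std dev)."""
    m = mean(times)
    s = stdev(times)
    return m - s, m + s


def share_within(times: list[float], low: float, high: float) -> float:
    """Fraction of runs whose completion time lies inside [low, high]."""
    return sum(low <= t <= high for t in times) / len(times)


# Illustrative completion times in seconds, not real measurements.
times = [60.0, 62.0, 64.0, 66.0, 68.0]
low, high = one_sigma_band(times)  # roughly 60.84 to 67.16
print(share_within(times, low, high))  # 0.6
```

Here the mean is 64 seconds and the sample standard deviation is the square root of 10 (about 3.16), so the three middle runs land inside the band.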

Don’t panic

If you’re worried about your phone not scoring as well as others, don’t panic. Smartphones have come an unbelievably long way since their inception, and the problems of 2013 just don’t exist in today’s world. Even the “worst” phones are still pretty darned good. At a certain point, the benchmarks are just a number.
