Google ATAP's Project Soli will make interacting with wearables a breeze

Wearable devices and smaller screens are popping up everywhere nowadays. Smartwatches and the crazy new Project Jacquard initiative are great examples of how far wearables have come over the years, though companies are taking different approaches to how exactly we navigate these tiny screens. Google wants you to interact with your Android Wear device by voice dictation, and Apple wants you to control your Apple Watch by touching it. But thanks to a new project out of Google ATAP, wearables (as well as other forms of tech) may soon be controlled in a much different way.

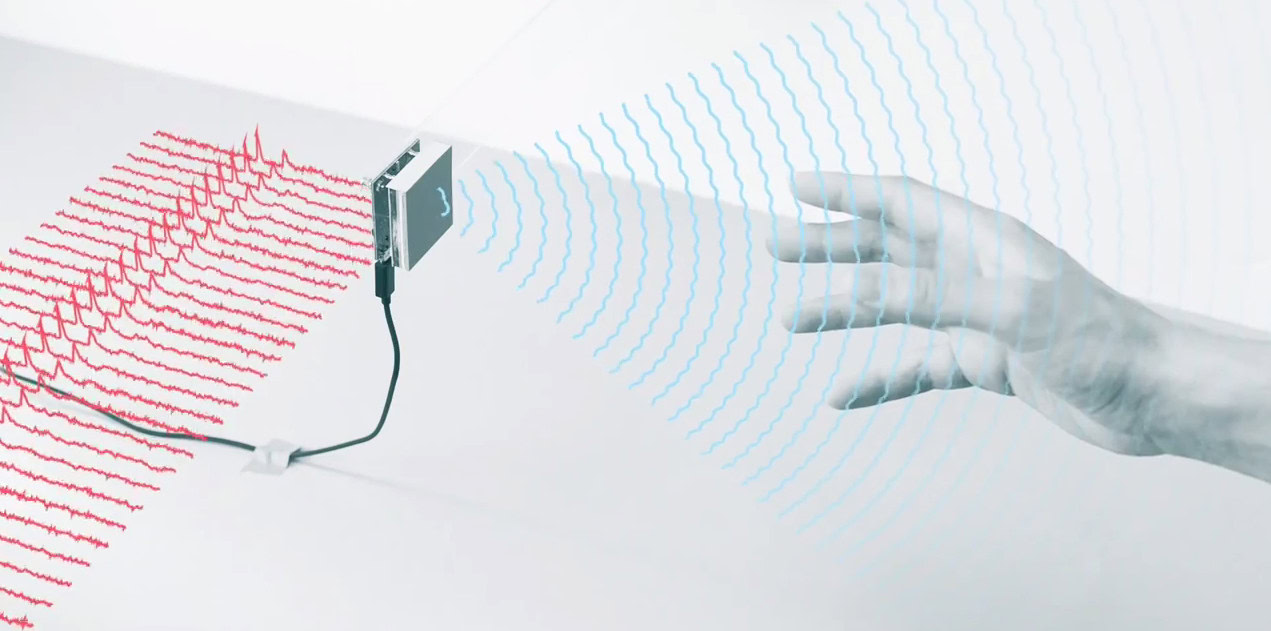

Project Soli, which was just announced at Google I/O, aims to create a new way of controlling the technology around us, especially smaller screens. Instead of physically touching our devices, Soli wants us to use our “hand motion vocabulary”. The technology Soli is working on would be able to detect incredibly small motions, allowing you to use natural hand motions to perform specific tasks. So, if you think of your hand as an interface, performing a hand motion over a small sensor could complete a task on a smartwatch, alarm clock or anything else, even if that piece of technology isn’t around you at that exact moment.

ATAP is using a radar sensor to detect these motions, and the group has reportedly shrunk it down from the size of a gaming console to the size of a quarter. ATAP will release an API that gives developers access to the translated signal info, letting them do basically whatever they want with the tech. These APIs will be available sometime later this year and are targeted at smaller form factors, such as smartwatches.

Just think – the next generation of smartwatches (or the generation after that) might include some really advanced gesture support. For more information on Soli, be sure to check out the video attached above.
