Affiliate links on Android Authority may earn us a commission.

AI and AR - is this the future of mobile?

2016 was arguably one of the biggest years for hardware and software innovation in recent history. Through techniques like machine learning, our devices are finally beginning to understand us on a more fundamental level than ever before. Though true artificial intelligence isn’t quite here yet, contextual data storage combined with near-perfect speech recognition has changed the way we interact with our devices, and it will only keep improving until technology is so seamless that we forget we are even using it.

It seems clear that AI will be the future, but what about AR? Will augmented reality integrate with artificial intelligence to make our lives as simple as possible? Let’s take a look at a few possible scenarios, along with technologies on the market today that seem to be heading toward this transition.

What is AI?

Artificial intelligence describes technology that can make decisions using algorithms of varying sophistication. It can be low level (such as the computer opponent in a game’s easy mode) or high level, such as AlphaGo, the Google DeepMind project that defeated Lee Sedol, one of the world’s top Go players, in March 2016. In the consumer space, AI looks a bit different. The assistive technologies currently thriving on the market are not true AI, which could theoretically do everything humans can do (even write more AI software), but they can make our lives a little easier through automation and voice recognition.

The two current leaders in the assistive technology space are Google (Google Home) and Amazon (Alexa), whose smart home products can play music, look up facts, and control things like lighting through voice commands. While these products may seem like magic to some, many would consider them only the first generation of what assistive tech could become. Machine learning has arguably advanced more in the last 5 years than in the previous 20, largely thanks to huge gains in computing power that let systems index and search vast amounts of labeled data almost instantly. As a result, assistive technology can now interpret a spoken query, match it against that indexed information, and return an answer or carry out an action almost instantaneously.

But how could these technologies be integrated into our lives even more seamlessly? Many believe the next big step is AR, or augmented reality.

What is AR?

Augmented reality is a technology that overlays images or holograms onto the real world. It comes in multiple tiers: lower level implementations use a phone’s camera to add images to your surroundings, while higher level versions like Microsoft’s HoloLens map the surrounding space in much greater detail to allow for interactive overlays. The tech is still in its infancy, though many companies have tried to build more advanced and interactive versions over the years. Quite a few have come and gone, even in just the last couple of years, and many of these products seem to have simply arrived too early.

Google Glass offered a simple, interactive way to bring AR overlays into our everyday lives. It paired with a smartphone to display information right in front of the wearer’s eye and help us get things done more easily. Though relatively basic in its first generation, it integrated technology into daily life in an almost completely seamless way. Want to record a video? You could do that in an instant. Need directions? Glass showed your next turn right in front of your eyes, so you never had to take your attention off the road. Glass was a huge first step toward making AR part of our daily lives, as it let us use technology whenever we wanted with almost no effort at all. The project’s biggest problems were its timing and its feature set. The public was not ready for a device that could record their every move at any moment, especially when there was no clear indication that it was doing so. Though the project is now effectively dead, its ideas seem to be returning in multiple projects from various companies looking to take its place.

HoloLens is a holographic headset developed by Microsoft. It can place images and objects over the real world that users can interact with in various ways. Through apps like Skype, Minecraft, and others, users can project screens onto walls, manipulate 3D objects, and more. The headset is still at a relatively early developer stage, but it builds on what Google Glass was originally designed to do. Check out this post over at VRSource for more information on the project.

How can AI and AR work together?

AI and AR are both meant to weave helpful technology and information into our lives more seamlessly. While one aims to automate our tasks through speech and other forms of input, the other develops new ways to view and manipulate information, making our work, entertainment, and everyday lives better. So how might these two technologies work together? There are a few possibilities.

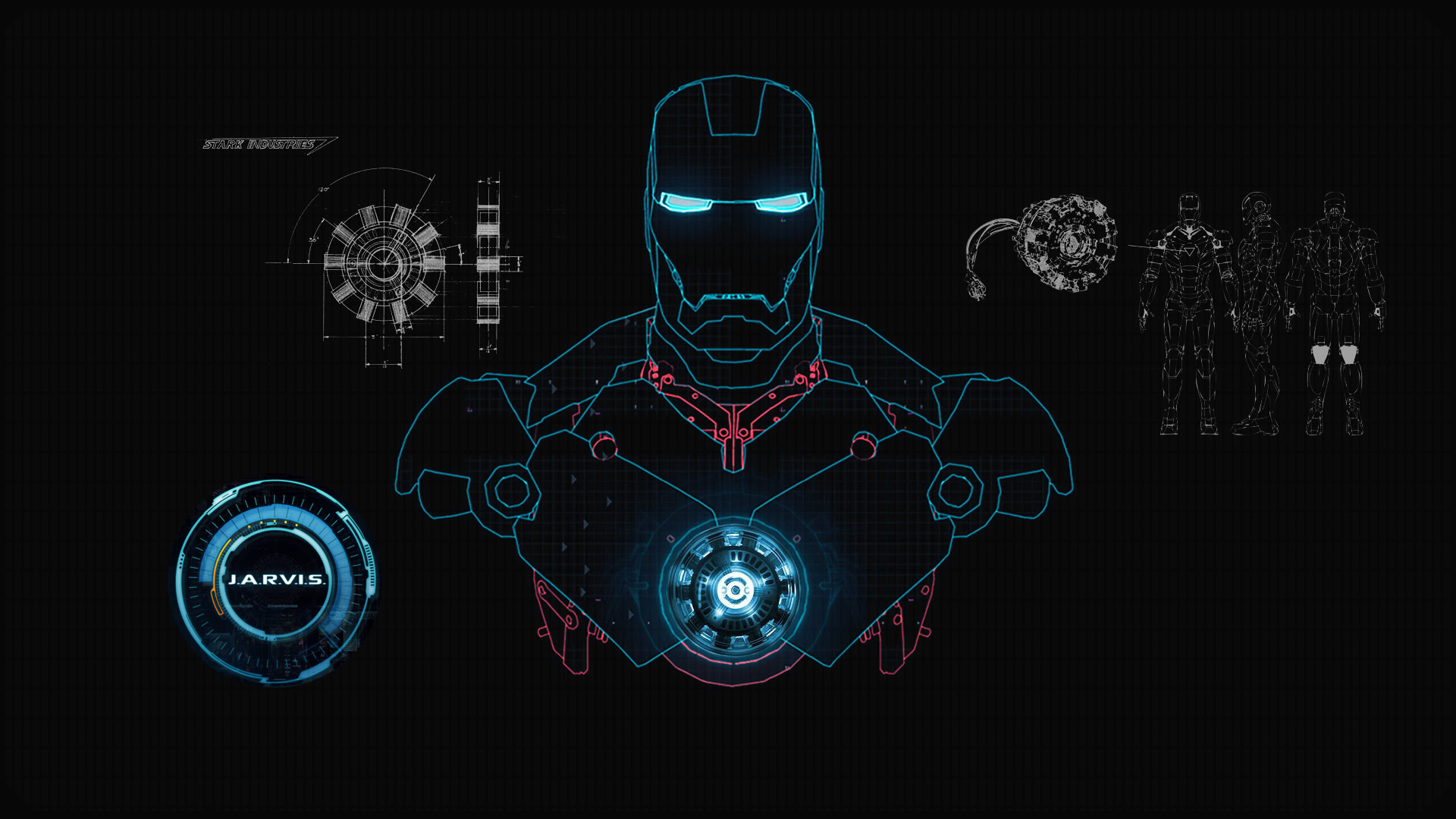

The Iron Man ‘Jarvis’ scenario

In the Iron Man films, Tony Stark has an AI system he developed called J.A.R.V.I.S. This AI is so advanced that it knows the ins and outs of Tony’s behavior better than he knows himself. It automates almost all of his tasks and provides him with accurate information the moment he asks for it. Inside his Iron Man suit, Stark has a heads-up display that overlays metrics of interest onto the real world. Whether it’s a rocket flying at him at high speed or his suit’s power running low, Stark can ask Jarvis for up-to-date information that helps him get things done more efficiently.

The closest thing to this kind of AR we currently have is HoloLens, which, like Iron Man’s suit, can overlay images and let us interact with them in real time. HoloLens doesn’t yet pair that display with an assistant anywhere near as capable as Jarvis, but could it in the future? Assistive technologies like Google Home and Amazon Alexa give us fast access to information and can control things around our homes, and if they evolve enough, they could become far more useful in this space. Mobile assistants like Siri and Google Assistant can already remember our individual preferences, so integrating the two technologies would essentially produce a low level form of what Stark uses in the films.

A technology like this could have plenty of everyday use cases. In fact, if information is always right in front of our eyes, many of the wearable devices we use today could be replaced entirely. Need to know your heart rate? It’s in the top right corner of your vision. Need an Uber to pick you up? That’s a simple voice command. Want to know someone’s name? It could be displayed above them at any time. In this way, the need to interact with a handheld device could be removed entirely. Or could it?

Could this technology replace our mobile devices?

The Google Glass V2 scenario

To be viable in the consumer market, a product like this would need to shrink to a much more practical size and form factor. Sound familiar? This is a lot like what later generations of Google Glass could have become, but consumer and media fears about its recording capabilities shut the project down while it was still in its infancy. Still, there are ways for a technology like this to resurface. Snapchat, for example, has worked around the hysteria over secret recording by adding lights to its Spectacles that let people know when you are recording, and the product seems to be doing alright so far.

Imagine Microsoft’s HoloLens technology combined with an AI that can answer all of your questions and help you do things before you even know you need them done. What once sounded like something out of a 1960s sci-fi comic is now a real possibility, and one that could be only a few years away. Though Microsoft has not announced a consumer version of its HoloLens headset, similar technology could very well be in the works, whether from Microsoft itself, Google, or another equally capable developer.

Is this something you would like to see?

As with any technology, there are some pretty substantial downsides to all this, with privacy being one of the biggest concerns. Do we want to live in a world where we are so dependent on assistive technology that we can’t imagine life without it? Putting that much power in the hands of one company is quite a risky move, especially when every move and metric of our daily lives is being measured in such detail. You could argue that these measurements are all for the good of the end user, but it is sometimes wiser to be cautious about how much data we give any one entity. I’m not going to rant here about why you should be more careful with your data, but as our technologies become more and more essential to our daily lives, we need to consider whom we are relying on, and what we are willing to give up to use it.

As we barrel headfirst into the future of computing, there are amazing technologies coming to the forefront of our culture every day. They say technology is on an exponential curve, and based on the change we’ve seen in the last 5 years alone, it’s hard not to agree.

How long do you think it will be before this technology hits the market? There’s no doubt it is an interesting and futuristic venture, and there are probably loads of companies attempting to perfect it as we speak.