Affiliate links on Android Authority may earn us a commission.

New AI chip could bring artificial intelligence to your smartphone

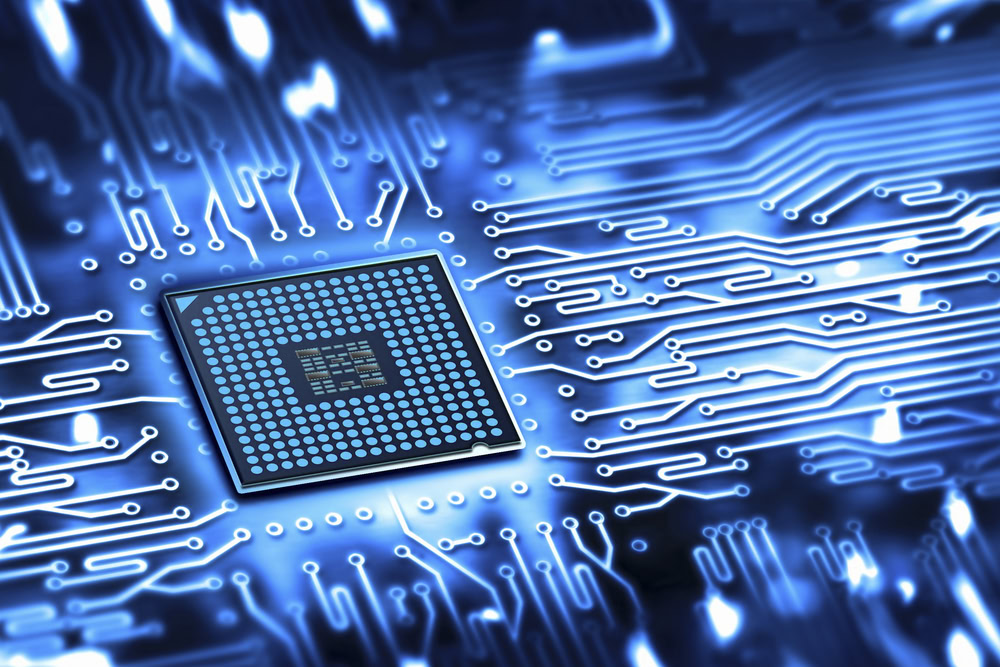

Researchers at MIT have unveiled a major breakthrough in artificial intelligence: a low-power neural-network chip that uses one-tenth the power of a mobile GPU. This means that running AI tasks on a smartphone may be much closer than you think. Skynet has a new name, kids, and it’s Eyeriss.

The research around Eyeriss was presented at the recent International Solid-State Circuits Conference in San Francisco, where the researchers noted: “In recent years, some of the most exciting advances in artificial intelligence have come courtesy of convolutional neural networks, large virtual networks of simple information-processing units, which are loosely modeled on the anatomy of the human brain.”
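To make “simple information-processing units” a little more concrete, here is a minimal sketch of the core operation a convolutional layer performs: each output value is a weighted sum over a small window of the input. This is a plain-Python illustration with made-up numbers (the function name `conv2d_valid`, the example image and the filter are all hypothetical), not code from the Eyeriss project. Like most CNN frameworks, it technically computes a cross-correlation, which is what neural-network literature calls “convolution.”

```python
def conv2d_valid(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and return the feature map.

    Each output cell is one "simple unit": a weighted sum of a small input window.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for r in range(out_h):
        row = []
        for c in range(out_w):
            acc = 0
            # Multiply-accumulate over the window: the basic work a CNN core does.
            for i in range(kh):
                for j in range(kw):
                    acc += image[r + i][c + j] * kernel[i][j]
            row.append(acc)
        output.append(row)
    return output


# Hypothetical 4x4 grayscale patch (dark left half, bright right half)
# and a 2x2 filter that responds to vertical edges.
image = [
    [0, 0, 9, 9],
    [0, 0, 9, 9],
    [0, 0, 9, 9],
    [0, 0, 9, 9],
]
kernel = [
    [-1, 1],
    [-1, 1],
]
print(conv2d_valid(image, kernel))
```

The filter fires only in the middle column of the output, where the dark-to-bright edge sits. A real network stacks many such filters and layers, which is why the number of multiply-accumulates (and memory accesses) grows so quickly.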


The researchers demonstrated the low-power chip performing a complex image recognition task – the first time a state-of-the-art neural network has been run on a custom chip. Eyeriss’ secret sauce is its energy-friendly nature. Consuming one-tenth of the power a standard mobile GPU requires, Eyeriss is a natural choice for mobile AI.

The secret to low-power AI

Eyeriss utilizes several tricks to keep power consumption to an absolute minimum. Unlike most GPUs, each of the 168 cores in Eyeriss has its own memory, so there is less need for time-consuming and power-hungry communication with a large central memory bank.
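Why does per-core memory help? The sketch below counts how many reads a single core would make from a shared central memory while applying one filter across an image, with and without a local copy of the filter weights. The model is deliberately simplified and hypothetical (one core, one filter, every input pixel fetched from central memory, weights either re-fetched for each window or fetched once). It illustrates the general data-reuse idea, not Eyeriss’ actual dataflow.

```python
def central_reads(image_h, image_w, k, local_weight_cache):
    """Count reads from central memory for a k x k filter slid over an image.

    Simplified, hypothetical model: every input pixel in each window is fetched
    from central memory; filter weights are fetched per window unless the core
    keeps them in its own local memory.
    """
    windows = (image_h - k + 1) * (image_w - k + 1)
    input_reads = windows * k * k
    if local_weight_cache:
        weight_reads = k * k            # load the filter once, reuse it locally
    else:
        weight_reads = windows * k * k  # re-fetch the filter for every window
    return input_reads + weight_reads


print("no local memory:  ", central_reads(8, 8, 3, local_weight_cache=False))
print("with local memory:", central_reads(8, 8, 3, local_weight_cache=True))
```

Under these assumptions, an 8x8 image and a 3x3 filter need 648 central reads without local storage and 333 with it. Overlapping windows also share input pixels, so a design that keeps inputs in local memory too can cut central traffic further.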

Data is compressed before being sent to a core for processing, and a special delegation circuit gives each core the maximum amount of work it can handle without needing to fetch more data. Furthermore, each core in Eyeriss can communicate directly with its neighboring cores, so data can be shared locally rather than constantly routed through central memory.
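The article doesn’t say which compression scheme Eyeriss uses. One common approach for CNN data, assumed here purely for illustration, is run-length encoding of zeros: after a ReLU activation many values are exactly zero, so storing “(number of zeros skipped, next nonzero value)” pairs takes less space than the raw list. The function names and the sample activations below are hypothetical.

```python
def rle_zeros_encode(values):
    """Encode a list as (zero_run, value) pairs plus a count of trailing zeros."""
    pairs = []
    run = 0
    for v in values:
        if v == 0:
            run += 1             # extend the current run of zeros
        else:
            pairs.append((run, v))
            run = 0
    return pairs, run            # `run` now holds any zeros left at the end


def rle_zeros_decode(pairs, trailing_zeros):
    """Reverse rle_zeros_encode, restoring the original list exactly."""
    values = []
    for run, v in pairs:
        values.extend([0] * run)
        values.append(v)
    values.extend([0] * trailing_zeros)
    return values


activations = [0, 0, 0, 5, 0, 0, 7, 1, 0, 0, 0, 0]
pairs, tail = rle_zeros_encode(activations)
print(pairs, tail)
print(rle_zeros_decode(pairs, tail) == activations)
```

Here twelve values shrink to three pairs and a trailing count. The savings depend entirely on how sparse the data is; a dense list would actually grow.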

What Eyeriss means for mobile AI

Partially funded by DARPA, the work revisits neural networks, which were studied aggressively in the early days of AI research in the 1970s and then largely dropped. Neural nets were typically seen as too power-hungry for mobile applications, but according to the researchers, Eyeriss “is useful for many applications, such as object recognition, speech, face detection” and could help usher in the Internet of Things.

If an Eyeriss chip were installed in a smartphone, it could eliminate the need to send data to the cloud for high-power processing of AI algorithms, improving speed and security and removing the dependence on a Wi-Fi or data connection. Complex AI tasks could be processed locally, bringing machine learning to your handheld device.


What’s more, individual Eyeriss chips won’t need to learn everything from scratch, because, as the researchers put it, “a trained neural network could simply be exported to a mobile device.” They added that “onboard neural networks would be useful for battery-powered autonomous robots.”
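The “train once, export, run locally” workflow can be sketched in a few lines: the expensive training happens elsewhere, and the device only loads fixed weights and runs the forward pass. The tiny single-unit model, its weights, the helper names and the JSON format here are all hypothetical; real deployments use dedicated model formats and far larger networks.

```python
import json


def export_model(weights, bias):
    """Serialize trained parameters (done once, off-device)."""
    return json.dumps({"weights": weights, "bias": bias})


def load_model(blob):
    """Load the exported parameters on the device; no training happens here."""
    params = json.loads(blob)
    return params["weights"], params["bias"]


def predict(weights, bias, features):
    """Forward pass of a single linear unit followed by a ReLU."""
    total = sum(w * x for w, x in zip(weights, features)) + bias
    return max(0.0, total)


# Hypothetical parameters produced by training elsewhere.
blob = export_model([0.5, -0.25, 1.0], 0.1)
weights, bias = load_model(blob)
print(predict(weights, bias, [2.0, 4.0, 0.5]))
```

The device never sees the training data or runs the training loop; it only needs enough memory and compute for the forward pass, which is exactly the workload a low-power chip like Eyeriss targets.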

The potential applications are immense, although no timeline was given for when an Eyeriss chip might make its way into a commercial mobile device. However, since one of the principal investigators is a research scientist at NVIDIA, it might happen sooner than you think.

What kinds of AI tasks can you see being run on mobile devices? When do you think it will happen?
