
Qualcomm future of AI photography

In addition to computational photography, high-quality camera hardware, and image signal processors, cutting-edge mobile photography is increasingly powered by machine learning, a branch of artificial intelligence (AI). These techniques promise to push image quality closer to that of a DSLR while offering creative new ways to shoot and edit pictures and video.

Much of modern machine learning relies on neural networks, a type of algorithm often likened to the human brain. The comparison comes from a neural network’s ability to be trained on data to recognize patterns, which allows it to make highly accurate classifications of complex data types such as audio and images.

When it comes to photography, the ability to observe, learn, generate, and classify has a wide range of applications. These range from building on computational photography techniques and improving post-processing algorithms to real-time software bokeh in 4K video, or even completely swapping out the colors of the clothes you’re wearing.

How neural networks work

Neural networks are a hugely complex topic, so we’re only going to cover the basics here.

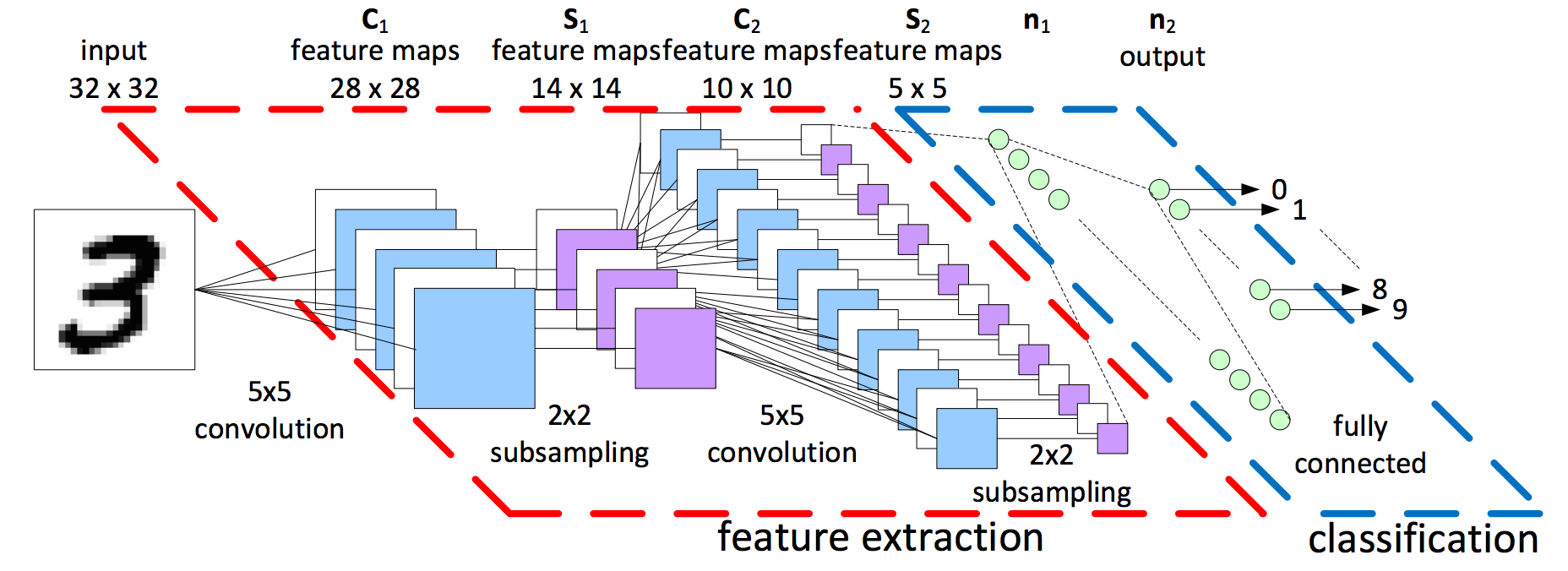

Neural networks are made up of nodes, each of which performs a small piece of computation. A node combines its inputs with weights, which amplify or attenuate the significance of each input, and passes the result on. Several nodes often work in parallel, forming a layer that performs a larger task, such as detecting a particular feature within an image. The outputs of one layer feed into the next, and stacking multiple layers forms a deeper network with more powerful capabilities.
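
To make the node-and-layer idea concrete, here is a minimal Python sketch of a forward pass. It assumes a ReLU activation and uses made-up inputs, weights, and a tiny two-layer shape chosen purely for illustration; real image networks have millions of weights and run through optimized frameworks rather than plain lists.

```python
def node(inputs, weights, bias):
    """One node: weighted sum of its inputs plus a bias, then an activation."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return max(0.0, total)  # ReLU activation: negative sums become 0

def layer(inputs, weight_rows, biases):
    """A layer: several nodes that see the same inputs, each with its own weights."""
    return [node(inputs, w, b) for w, b in zip(weight_rows, biases)]

# Hypothetical toy network: 3 input values -> 2 hidden nodes -> 1 output node.
pixels = [1.0, 0.5, 0.25]
hidden = layer(pixels, [[0.5, -1.0, 2.0], [1.0, 1.0, -4.0]], [0.0, -1.0])
output = layer(hidden, [[2.0, 3.0]], [0.0])
print(hidden, output)  # [0.5, 0.0] [1.0]
```

Weights above 1 amplify an input, weights between 0 and 1 attenuate it, and negative weights push the node's output down; the second hidden node here ends up switched off by the ReLU.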

In a classification network, the final outputs are typically scaled into probabilities. By looking at lots of different features and attributes, a neural network can rate the input as a probability match against each of the expected potential outputs. This is how image detection algorithms decide whether a picture looks more like a cat or an orange, but you first have to tell the network what to look for by training it on labeled examples.
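
A common way to turn a network's final raw scores into probabilities is the softmax function. The sketch below assumes hypothetical scores for the two labels in the cat-or-orange example; the numbers are illustrative, not taken from a real model.

```python
import math

def softmax(scores):
    """Turn raw output scores into probabilities that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

labels = ["cat", "orange"]
scores = [2.0, 0.0]  # hypothetical raw scores from a trained network's final layer
probs = softmax(scores)
for label, p in zip(labels, probs):
    print(f"{label}: {p:.3f}")  # cat: 0.881, orange: 0.119
```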

Neural networks aren’t programmed like traditional computer algorithms. Instead, they are trained on datasets of images, sound files, and so on. The weights of each node are adjusted gradually through a feedback loop, based on how well the network matched the inputs to the correct outputs. This gradual “learning” of the rules takes considerable preparation, time, and computing power, but can produce highly accurate results.
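
The feedback loop can be illustrated with the simplest possible case: a single node with a sigmoid output, trained by gradient descent on a made-up dataset. This is an assumption-laden sketch, not how a production camera network is trained; real networks apply the same idea across many layers using backpropagation.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

# Hypothetical toy dataset: one input feature (average brightness, 0-1)
# and a label (1 = "daylight shot", 0 = "night shot").
data = [(0.9, 1), (0.8, 1), (0.7, 1), (0.3, 0), (0.2, 0), (0.1, 0)]

w, b = 0.0, 0.0      # start with uninformed weights
learning_rate = 1.0

for epoch in range(2000):
    grad_w = grad_b = 0.0
    for x, y in data:
        pred = sigmoid(w * x + b)
        error = pred - y      # how far off the prediction was
        grad_w += error * x   # gradient of the cross-entropy loss w.r.t. w
        grad_b += error       # ... and w.r.t. b
    # Feedback step: nudge the weights against the average gradient.
    w -= learning_rate * grad_w / len(data)
    b -= learning_rate * grad_b / len(data)

# After training, a bright input scores above 0.5 and a dark one below it.
print(round(sigmoid(w * 0.85 + b), 3), round(sigmoid(w * 0.15 + b), 3))
```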

Neural networks inside your smartphone

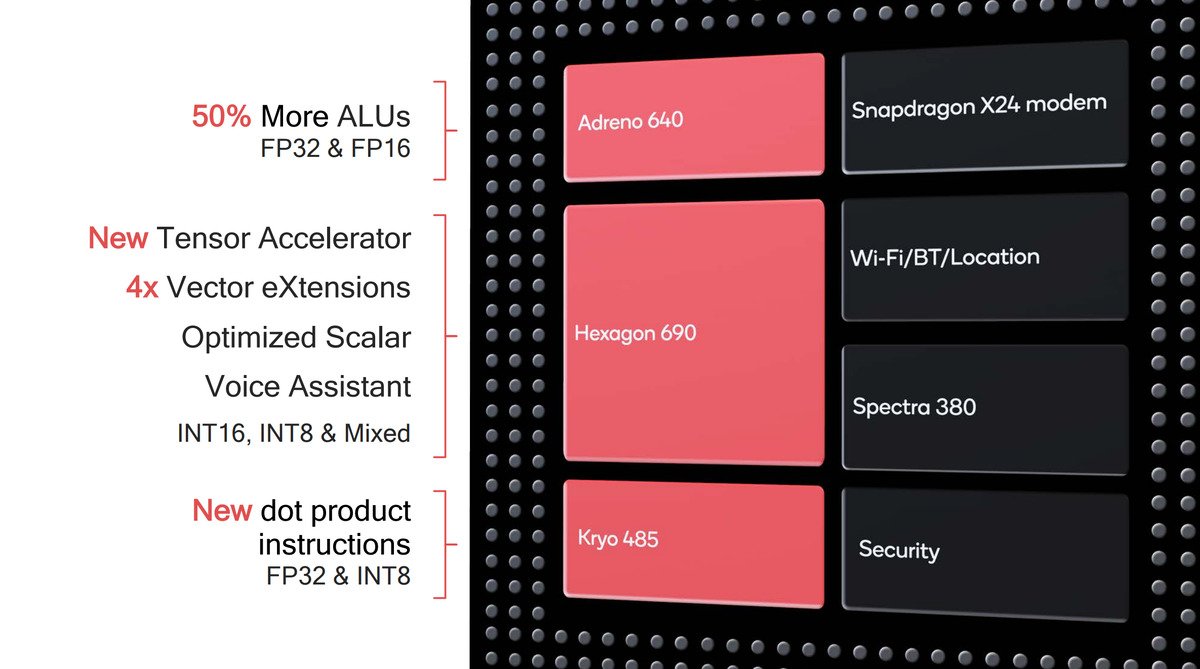

Neural networks can run on a variety of hardware components, including the CPU and GPU found in a range of computing devices such as your smartphone. However, some neural networks require more processing power than these components can provide, and dedicated hardware can run them more efficiently.

Inside the Qualcomm® Snapdragon™ 855 Mobile Platform, for instance, you’ll find the latest Qualcomm® Hexagon™ 690 Digital Signal Processor (DSP), boasting improved vector processing units and a new Tensor Accelerator designed specifically for machine learning tasks. Other Snapdragon Mobile Platforms also feature a Hexagon DSP, with varying capabilities. That said, neural nets aren’t limited to running on the DSP, on Snapdragon or on other mobile platforms; the processor used depends on the workload.
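
One way to picture the processor choice depending on the workload is a preference list per task with a fallback. The workload names, runtime labels, and preference orders below are hypothetical and are not the Qualcomm Neural Processing SDK’s actual API; they only illustrate the idea of picking the best core a device offers.

```python
# Hypothetical per-workload runtime preferences (illustrative only; not an SDK API).
PREFERENCES = {
    "realtime_video": ["dsp", "gpu", "cpu"],  # assumed: sustained work favors the DSP
    "one_off_edit": ["gpu", "cpu"],           # assumed: a short burst on the GPU
}

def pick_runtime(workload, available):
    """Return the first preferred runtime for this workload that the device offers."""
    for runtime in PREFERENCES[workload]:
        if runtime in available:
            return runtime
    raise RuntimeError(f"no supported runtime for {workload!r}")

print(pick_runtime("realtime_video", {"dsp", "gpu", "cpu"}))  # dsp
print(pick_runtime("realtime_video", {"gpu", "cpu"}))         # gpu: falls back when no DSP
```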

Qualcomm Technologies opens up its DSP and machine learning capabilities to third-party developers through its Qualcomm® Neural Processing SDK, which allows apps to run neural nets on any of the hardware cores inside a Snapdragon Mobile Platform. Google Pixel smartphones, for example, tap into the Hexagon DSP as well as Google’s own Pixel Visual Core to accelerate the impressive HDR+ photography feature. Qualcomm Technologies also works with software vendors such as ArcSoft, Elevoc, Polar, Loom, Mobius, Morpho, and more, supporting features ranging from video bokeh to avatar creation using machine learning running on the DSP.

AI could shape the future of photography

Now that we know how neural networks work, the important question is what they could do for us and our photographs.

Neural networks are used to improve a range of common photography algorithms. Denoising, for example, could be improved through training to offer superior image cleanup tailored to a specific camera or type of shot. Likewise, in low light, a neural net could detect the bright and dark parts of an image, allowing light and color enhancements to be applied to specific parts of the scene.
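
The low-light idea of enhancing only specific parts of the scene can be sketched as masking: a model marks which pixels are dark, and the enhancement is applied only there. In this illustrative Python sketch, a simple brightness threshold stands in for a trained network’s prediction, and the cutoff and gain values are arbitrary assumptions.

```python
def threshold_mask(image, cutoff=0.3):
    """Stand-in for a trained network's per-pixel "dark region" prediction."""
    return [[1 if p < cutoff else 0 for p in row] for row in image]

def brighten_dark_regions(image, mask, gain=2.0):
    """Boost only the pixels the mask flags as dark; leave the rest untouched.

    image: 2D list of brightness values in [0, 1]
    mask:  2D list of 0/1 flags with the same shape (1 = region to enhance)
    """
    return [
        [min(1.0, p * gain) if m else p for p, m in zip(img_row, mask_row)]
        for img_row, mask_row in zip(image, mask)
    ]

scene = [[0.1, 0.2, 0.8],
         [0.15, 0.9, 0.95]]
print(brighten_dark_regions(scene, threshold_mask(scene)))
```

Keeping the detection step (the mask) separate from the enhancement step means a better model can be swapped in without touching the enhancement code.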

More advanced use cases are increasingly common in smartphone photography. Super-resolution zoom features use neural nets to combine multiple images into a single high-resolution shot for superior-looking digital zoom. Neural nets could also be trained to accurately stitch multiple exposures together for enhanced HDR and night shots.
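
Merging multiple exposures comes down to deciding, for every pixel, how much to trust each frame. As a stand-in for a trained network, the sketch below uses a classical exposure-fusion heuristic that weights each pixel by how close it is to mid-gray; a neural net would learn this weighting instead. It assumes the frames are already aligned and stored as flat lists of brightness values between 0 and 1, and the `sigma` value is an arbitrary choice.

```python
import math

def well_exposed_weight(p, sigma=0.2):
    """Favor pixels near mid-gray (0.5); near-black or blown-out pixels get little weight."""
    return math.exp(-((p - 0.5) ** 2) / (2 * sigma ** 2))

def fuse_exposures(frames):
    """Blend aligned frames pixel by pixel using well-exposedness weights."""
    fused = []
    for pixels in zip(*frames):  # the same pixel position across all frames
        weights = [well_exposed_weight(p) for p in pixels]
        total = sum(weights)
        fused.append(sum(w * p for w, p in zip(weights, pixels)) / total)
    return fused

dark = [0.05, 0.10, 0.45]    # short exposure: keeps detail in bright areas
bright = [0.40, 0.55, 0.98]  # long exposure: lifts the shadows
print([round(v, 2) for v in fuse_exposures([dark, bright])])
```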


Video could also benefit from this technology. Real-time object detection allows apps to apply software bokeh effects to video as you record. Similar techniques also support real-time object swapping and removal, including replacing the background in a video, changing or removing colors, and even swapping out items of clothing or superimposing digital avatars directly into your video.
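
Software bokeh boils down to compositing: blur the frame, then use a subject mask to keep the subject sharp. In this minimal sketch the mask is supplied by hand, standing in for the output of a segmentation network, and a simple box blur stands in for a more lens-like blur; in video, the same steps would run on every frame.

```python
def box_blur(image, radius=1):
    """Average each pixel with its neighbours within `radius` (edges use what's available)."""
    h, w = len(image), len(image[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            vals = [image[j][i]
                    for j in range(max(0, y - radius), min(h, y + radius + 1))
                    for i in range(max(0, x - radius), min(w, x + radius + 1))]
            row.append(sum(vals) / len(vals))
        out.append(row)
    return out

def apply_bokeh(frame, subject_mask, radius=1):
    """Keep the subject sharp and blur everything else.

    subject_mask: per-pixel values in [0, 1], 1 = subject. In a real app this
    would come from a segmentation network; here it is supplied by hand.
    """
    blurred = box_blur(frame, radius)
    return [
        [m * p + (1 - m) * b for p, b, m in zip(f_row, b_row, m_row)]
        for f_row, b_row, m_row in zip(frame, blurred, subject_mask)
    ]

frame = [[0.0, 1.0, 0.0],
         [1.0, 0.5, 1.0],
         [0.0, 1.0, 0.0]]
mask = [[0, 0, 0],
        [0, 1, 0],
        [0, 0, 0]]
result = apply_bokeh(frame, mask)
print(result[1][1])  # 0.5: the subject pixel is unchanged
```

Because the mask blends rather than switches, soft mask values between 0 and 1 would give a gradual transition at the subject's edges.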

The potential of neural networks in photography ranges from quality enhancements that help close the gap with DSLRs to powerful creative tools that make producing unique content a breeze. Either way, it’s a powerful technology that’s fundamental to the future improvements heading to mobile photography.


Content sponsored by Qualcomm Technologies, Inc.

Qualcomm Snapdragon, Qualcomm Hexagon, Qualcomm Adreno, Qualcomm Spectra, Qualcomm AI Engine and Qualcomm Kryo are products of Qualcomm Technologies, Inc. and/or its subsidiaries.
