Affiliate links on Android Authority may earn us a commission.

GPU vs CPU: What's the difference?

Modern smartphones are essentially miniaturized computers with various processing components. You likely already know about the Central Processing Unit (CPU) from computers, but between the Graphics Processing Unit (GPU), Image Signal Processor (ISP), and machine learning accelerators, there are a lot of highly specialized components too. All of these come together in a system-on-a-chip (SoC). But what sets a GPU apart from a CPU, and why do graphics and other specialized tasks need one? Here’s everything you need to know.

How does a CPU work?

Put simply, the CPU is the brain of the whole operation and is responsible for running the operating system and apps on any computer. It excels at executing instructions and does so largely in a serial manner, one after another. The CPU’s job is relatively straightforward: fetch the next instruction, decode what needs to be done, and finally execute it.

What exactly is an instruction? It depends — you can have arithmetic instructions like addition and subtraction, logical operations like AND and OR, and many others. These are handled by the CPU’s Arithmetic/Logic Unit (ALU). CPUs have a large instruction set, allowing them to perform a wide range of tasks.
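
To make the fetch, decode, execute cycle more concrete, here's a toy interpreter written in Python. The instruction format, register names, and four-operation ALU are invented for illustration and don't correspond to any real instruction set; real CPUs such as Arm and x86 designs decode far richer instructions in hardware and overlap many of these steps at once.

```python
# A toy CPU. The instruction format is hypothetical, not a real ISA:
# each instruction is (opcode, destination register, source A, source B).

def alu(op, a, b):
    """Arithmetic/Logic Unit: performs one arithmetic or logical operation."""
    if op == "ADD":
        return a + b
    if op == "SUB":
        return a - b
    if op == "AND":
        return a & b
    if op == "OR":
        return a | b
    raise ValueError(f"unknown opcode: {op}")

def run(program, registers):
    """Fetch, decode, and execute instructions one after another."""
    pc = 0  # program counter: index of the next instruction
    while pc < len(program):
        instruction = program[pc]                 # fetch
        op, dest, src_a, src_b = instruction      # decode
        registers[dest] = alu(op, registers[src_a], registers[src_b])  # execute
        pc += 1  # move on to the next instruction in sequence
    return registers

program = [
    ("ADD", "r2", "r0", "r1"),  # r2 = 6 + 3 = 9
    ("SUB", "r3", "r0", "r1"),  # r3 = 6 - 3 = 3
    ("AND", "r4", "r0", "r1"),  # r4 = 0b110 & 0b011 = 0b010 = 2
    ("OR",  "r5", "r0", "r1"),  # r5 = 0b110 | 0b011 = 0b111 = 7
]
regs = run(program, {"r0": 6, "r1": 3, "r2": 0, "r3": 0, "r4": 0, "r5": 0})
print(regs)
```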

A CPU processes new instructions one after another, as quickly as possible.

Modern CPUs also have more than one core, which means that they can execute multiple streams of instructions at the same time. But there’s a practical limit to the number of cores, since each one is large and complex so that it can run extremely quickly. One common measure of CPU performance is instructions per cycle (IPC), or how much work a core gets done in each clock cycle. The number of cycles per second, meanwhile, depends on the CPU’s clock speed. That can be as high as 6GHz on desktop CPUs or 3.2GHz on mobile chips like the Snapdragon 8 Gen 2.
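
Since IPC tells you how much work gets done per cycle and clock speed tells you how many cycles happen per second, multiplying the two gives a rough ceiling on throughput. The sketch below assumes a hypothetical IPC of 4 for both chips; real IPC varies widely with the workload, so treat these as back-of-the-envelope numbers rather than benchmarks.

```python
def instructions_per_second(ipc, clock_hz, cores=1):
    """Idealized peak: instructions per cycle x cycles per second x cores.

    Real chips rarely sustain this because of cache misses, branch
    mispredictions, and code that can't be split across cores.
    """
    return ipc * clock_hz * cores

# Hypothetical IPC of 4 on a single core clocked at 3.2GHz (mobile-class).
mobile_core = instructions_per_second(ipc=4, clock_hz=3.2e9)
# Hypothetical IPC of 4 on a single core clocked at 6GHz (desktop-class).
desktop_core = instructions_per_second(ipc=4, clock_hz=6.0e9)

print(f"{mobile_core / 1e9:.1f} billion instructions/s")   # prints 12.8 billion instructions/s
print(f"{desktop_core / 1e9:.1f} billion instructions/s")  # prints 24.0 billion instructions/s
```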

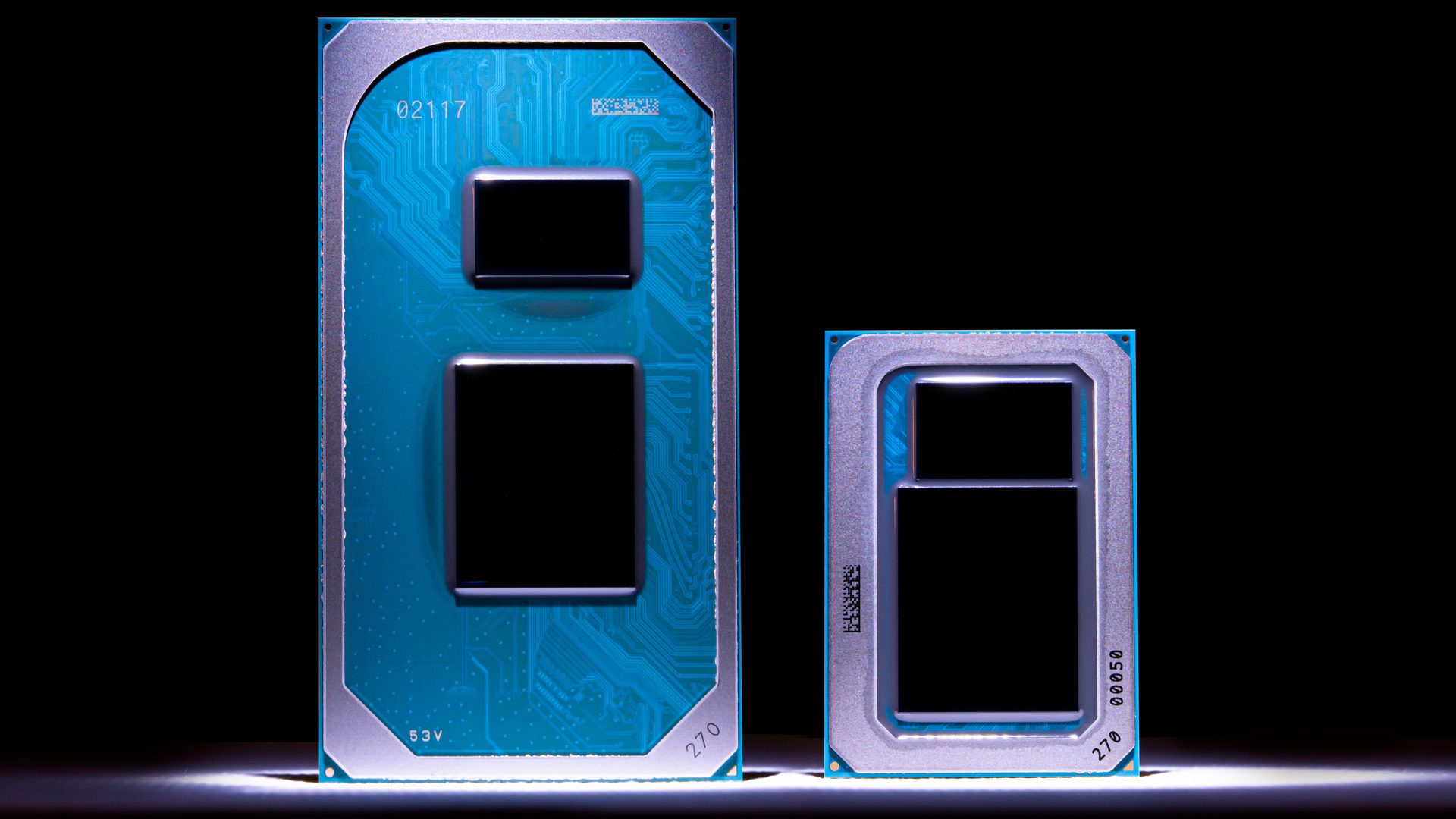

A high clock speed and IPC are the most important aspects of any CPU, so much so that you’ll often find a large area of a physical CPU die dedicated to fast cache memory. This reduces how often the CPU wastes precious cycles waiting to retrieve data or instructions from RAM.
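
One standard way to quantify why cache matters is average memory access time (AMAT): hit time plus miss rate multiplied by miss penalty. The sketch below models a single cache level, and the cycle counts and miss rates are hypothetical round numbers rather than figures for any real chip.

```python
def average_access_cycles(hit_cycles, miss_rate, miss_penalty_cycles):
    """Average memory access time (AMAT) for a single cache level, in cycles."""
    return hit_cycles + miss_rate * miss_penalty_cycles

# Hypothetical numbers: a 4-cycle cache hit and a 200-cycle trip to RAM.
with_good_cache = average_access_cycles(4, 0.02, 200)  # 2% of accesses miss
with_poor_cache = average_access_cycles(4, 0.20, 200)  # 20% of accesses miss
no_cache = 200  # every access goes all the way to RAM

print(with_good_cache, with_poor_cache, no_cache)
```

Even with these made-up figures, the pattern is the point: a cache that catches most accesses cuts the average wait from hundreds of cycles to a handful.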

How does a GPU work?

A specialized processing component, a GPU performs geometric calculations based on the data it receives from the CPU. In the past, most GPUs were designed around what’s known as a graphics pipeline, but newer architectures are much more flexible in processing non-graphical workloads too.

Unlike with a CPU, getting through a queue of instructions as quickly as possible isn’t necessarily a GPU’s top priority. Instead, a GPU needs maximum throughput, or the ability to process many pieces of data at once. To that end, you’ll typically find that GPUs have many times as many cores as a CPU. However, each one is simpler and runs at a slower clock speed.
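
The trade-off between a few fast cores and many slower ones can be illustrated with an idealized model. The core counts and clock speeds below are hypothetical, and the model ignores memory, scheduling, and everything else that matters on real hardware; it only shows why work that can be split up favors the GPU while a chain of dependent steps favors the CPU.

```python
import math

def finish_time(num_ops, cores, clock_hz, dependent):
    """Idealized time, in seconds, to run num_ops single-cycle operations.

    dependent=True: each op needs the previous result (a serial chain),
    so extra cores can't help. dependent=False: ops are independent and
    can be spread evenly across every core.
    """
    cycles = num_ops if dependent else math.ceil(num_ops / cores)
    return cycles / clock_hz

# Hypothetical chips: a few fast cores vs many slower ones.
CPU = {"cores": 8, "clock_hz": 3.2e9}
GPU = {"cores": 1024, "clock_hz": 1.0e9}

ops = 1_000_000
for label, dependent in [("serial chain", True), ("independent work", False)]:
    cpu_t = finish_time(ops, dependent=dependent, **CPU)
    gpu_t = finish_time(ops, dependent=dependent, **GPU)
    print(f"{label}: CPU {cpu_t * 1e6:.1f} us, GPU {gpu_t * 1e6:.1f} us")
```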

A GPU breaks down a single complex job into smaller chunks and processes them in parallel.

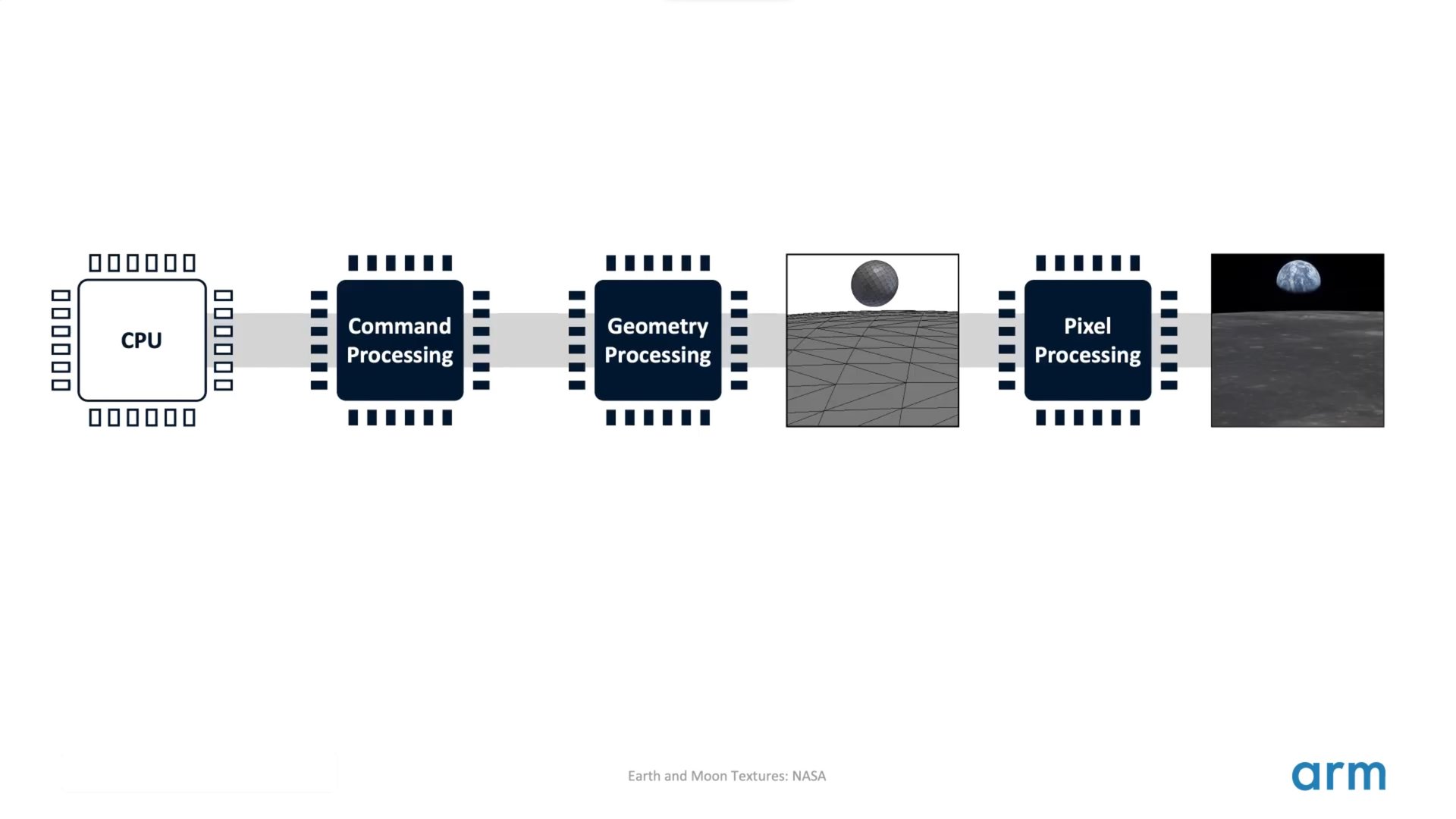

Coming back to the graphics pipeline, you can think of it as a factory assembly line where the output of one stage is used as an input in the next step.

The pipeline starts off with Vertex Processing, which transforms each individual vertex (a point in geometrical terms) from its position in the 3D scene to a position on the 2D screen. Next, these points are assembled into triangles, or “primitives.” In real-time computer graphics, 3D objects are typically built from triangles, the simplest polygon. The GPU then works out which pixels each triangle covers, a stage known as rasterization. With a basic shape in hand, we can now determine the color and other attributes of each pixel, depending on the scene’s lighting and the object’s material. This stage is known as shading.
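
The vertex processing, rasterization, and shading stages can be sketched in a few dozen lines of Python. This is a deliberately simplified model, assuming a single triangle, a camera looking down the z axis, an 8x8 pixel screen, and a basic diffuse lighting formula; the function names are hypothetical, and real GPUs add matrix transforms, clipping, depth testing, and programmable shaders on top. The rasterizer uses edge functions, one common way to test whether a pixel lies inside a triangle.

```python
# A simplified sketch of three pipeline stages on a tiny 8x8 "screen".

def project(vertex, width, height, focal_length=1.0):
    """Vertex processing: perspective-project a camera-space (x, y, z) point."""
    x, y, z = vertex
    # Perspective divide: points farther away (larger z) move toward the center.
    ndc_x = focal_length * x / z
    ndc_y = focal_length * y / z
    # Map the visible range [-1, 1] to pixel coordinates; flip y so +y is up.
    return ((ndc_x + 1) * 0.5 * width, (1 - ndc_y) * 0.5 * height)

def edge(a, b, p):
    """Signed-area test: which side of the edge a->b the point p lies on."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])

def rasterize(v0, v1, v2, width, height):
    """Rasterization: return the pixels whose centers fall inside the triangle."""
    covered = set()
    for y in range(height):
        for x in range(width):
            p = (x + 0.5, y + 0.5)
            w = (edge(v1, v2, p), edge(v2, v0, p), edge(v0, v1, p))
            # Inside when all three tests agree in sign (works for either winding).
            if all(e >= 0 for e in w) or all(e <= 0 for e in w):
                covered.add((x, y))
    return covered

def lambert(normal, light_dir, base_color):
    """Shading: simple diffuse lighting; both vectors are assumed unit length."""
    intensity = max(0.0, sum(n * l for n, l in zip(normal, light_dir)))
    return tuple(c * intensity for c in base_color)

W, H = 8, 8
triangle = [project(v, W, H) for v in [(-1, -1, 2), (1, -1, 2), (0, 1, 2)]]
pixels = rasterize(*triangle, W, H)
color = lambert((0.0, 0.0, 1.0), (0.0, 0.0, 1.0), (1.0, 0.5, 0.25))
print(triangle)  # prints [(2.0, 6.0), (6.0, 6.0), (4.0, 2.0)]
print(len(pixels), "pixels covered, each shaded", color)
```

Each stage feeds the next, just like the assembly line described above: projected vertices go into the rasterizer, and every covered pixel would then be shaded.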

The GPU can also add textures to the surface of objects for added realism. In a video game, for example, artists will often use textures for character models, the sky, and other elements that we’re familiar with in the real world. These textures start out as 2D images that are mapped onto the surface of a model. You can see a high-level overview of this process in the following block diagram:

All in all, the GPU has a set sequence of tasks that it must complete in order to draw an image. And that’s just what goes into drawing a single still image, which is rarely what you need when using a computer or smartphone. The Android operating system alone has many animations. This means the GPU has to render a new high-resolution frame roughly every 16.7 milliseconds for an animation running at 60 frames per second.
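
The frame budget is simply one second divided by the frame rate. The sketch below also estimates how many pixels must be produced per second, assuming a hypothetical 2400x1080 display that is fully redrawn every frame.

```python
def frame_budget_ms(fps):
    """Time available to produce each frame at a given frame rate."""
    return 1000.0 / fps

def pixels_per_second(width, height, fps):
    """Pixels produced per second if the whole screen is redrawn every frame."""
    return width * height * fps

for fps in (60, 90, 120):
    print(f"{fps} fps -> {frame_budget_ms(fps):.1f} ms per frame")

# Hypothetical 2400x1080 phone display, fully redrawn at 60 fps.
print(pixels_per_second(2400, 1080, 60))  # prints 155520000
```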

Luckily, a GPU can break down this complex task into smaller chunks and process them simultaneously. And instead of relying on a handful of processing cores like you’d find in a CPU, it uses hundreds or even thousands of tiny cores (sometimes called execution units). Parallel processing is important because the GPU has to keep delivering finished frames to the screen without falling behind.
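
The idea of splitting one job into independent chunks can be sketched with Python's `concurrent.futures` module. The brightening "kernel" is hypothetical, and Python threads won't actually speed up CPU-bound work like this because of the global interpreter lock; the point is the structure: the same small function applied to many pieces of data, with no piece depending on another. A GPU does the equivalent in hardware across its many cores.

```python
from concurrent.futures import ThreadPoolExecutor

def shade_pixel(value):
    """Toy per-pixel 'kernel': brighten an 8-bit grayscale value, capped at 255."""
    return min(255, value + 40)

def shade_chunk(chunk):
    """Apply the same kernel to every pixel in one chunk."""
    return [shade_pixel(v) for v in chunk]

def split(data, num_chunks):
    """Cut the job into roughly equal, independent pieces."""
    size = -(-len(data) // num_chunks)  # ceiling division
    return [data[i:i + size] for i in range(0, len(data), size)]

def shade_image(pixels, workers=4):
    """Process all chunks concurrently, then stitch the results back together."""
    if not pixels:
        return []
    chunks = split(pixels, workers)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # map() yields results in input order, so the image reassembles correctly.
        return [v for chunk in pool.map(shade_chunk, chunks) for v in chunk]

print(shade_image([0, 100, 230, 250, 10], workers=2))  # prints [40, 140, 255, 255, 50]
```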

In fact, the GPU’s ability to perform simultaneous calculations also makes it useful for some non-graphical workloads. Machine learning, video rendering, and cryptocurrency mining algorithms all require huge amounts of data to be processed in parallel. These tasks involve repeated and nearly identical computations, so they’re not too far off from how the graphics pipeline functions. Developers have adapted these algorithms to run on GPUs, despite the GPU’s more limited instruction set.
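
Machine learning shows why these workloads fit GPUs so well. A single fully connected neural-network layer is just many multiply-and-add operations, and each output can be computed without waiting on any other. The sketch below is a plain-Python illustration with made-up weights, not how machine learning frameworks actually implement it on a GPU.

```python
def dense_layer(weights, inputs, bias):
    """One fully connected neural-network layer: y = W x + b.

    Every output y[i] is its own multiply-and-add loop that doesn't depend on
    any other output, which is what makes it easy to spread across GPU cores.
    """
    return [
        sum(w * x for w, x in zip(row, inputs)) + b
        for row, b in zip(weights, bias)
    ]

weights = [[1, 2], [3, 4], [5, 6]]  # hypothetical example weights
print(dense_layer(weights, inputs=[1, 1], bias=[0, 1, 2]))  # prints [3, 8, 13]
```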

GPU vs CPU: The bottom line

Now that we know the roles of the CPU and GPU individually, how do they work together in a practical workload, such as running a video game? Put simply, the CPU handles physics calculations, game logic, simulations like enemy behavior, and player inputs. It then sends positional and geometry data to the GPU, which renders the 3D shapes and lighting to the display through the graphics pipeline.
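
The division of labor can be sketched as a single frame of a game loop. Everything here is hypothetical: the `Enemy` and `DrawCommand` types, the chase logic, and the hand-off are simplified stand-ins for what a real engine does before it submits work to the GPU.

```python
from dataclasses import dataclass

@dataclass
class Enemy:
    x: float
    y: float
    speed: float

@dataclass
class DrawCommand:
    """Hypothetical stand-in for a draw call: which mesh to draw, and where."""
    mesh: str
    x: float
    y: float

def cpu_update(enemies, player_x, dt):
    """CPU side: game logic and simple AI, moving each enemy toward the player."""
    for e in enemies:
        step = e.speed * dt
        if e.x < player_x:
            e.x = min(player_x, e.x + step)
        elif e.x > player_x:
            e.x = max(player_x, e.x - step)

def build_draw_list(enemies):
    """CPU side: package positions into commands to hand off to the GPU."""
    return [DrawCommand("enemy", e.x, e.y) for e in enemies]

enemies = [Enemy(0.0, 0.0, 2.0), Enemy(10.0, 5.0, 2.0)]
cpu_update(enemies, player_x=5.0, dt=0.5)
draw_list = build_draw_list(enemies)
# A real engine would now submit this work through a graphics API such as
# Vulkan or OpenGL ES, and the GPU would run the graphics pipeline on it.
print(draw_list)
```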

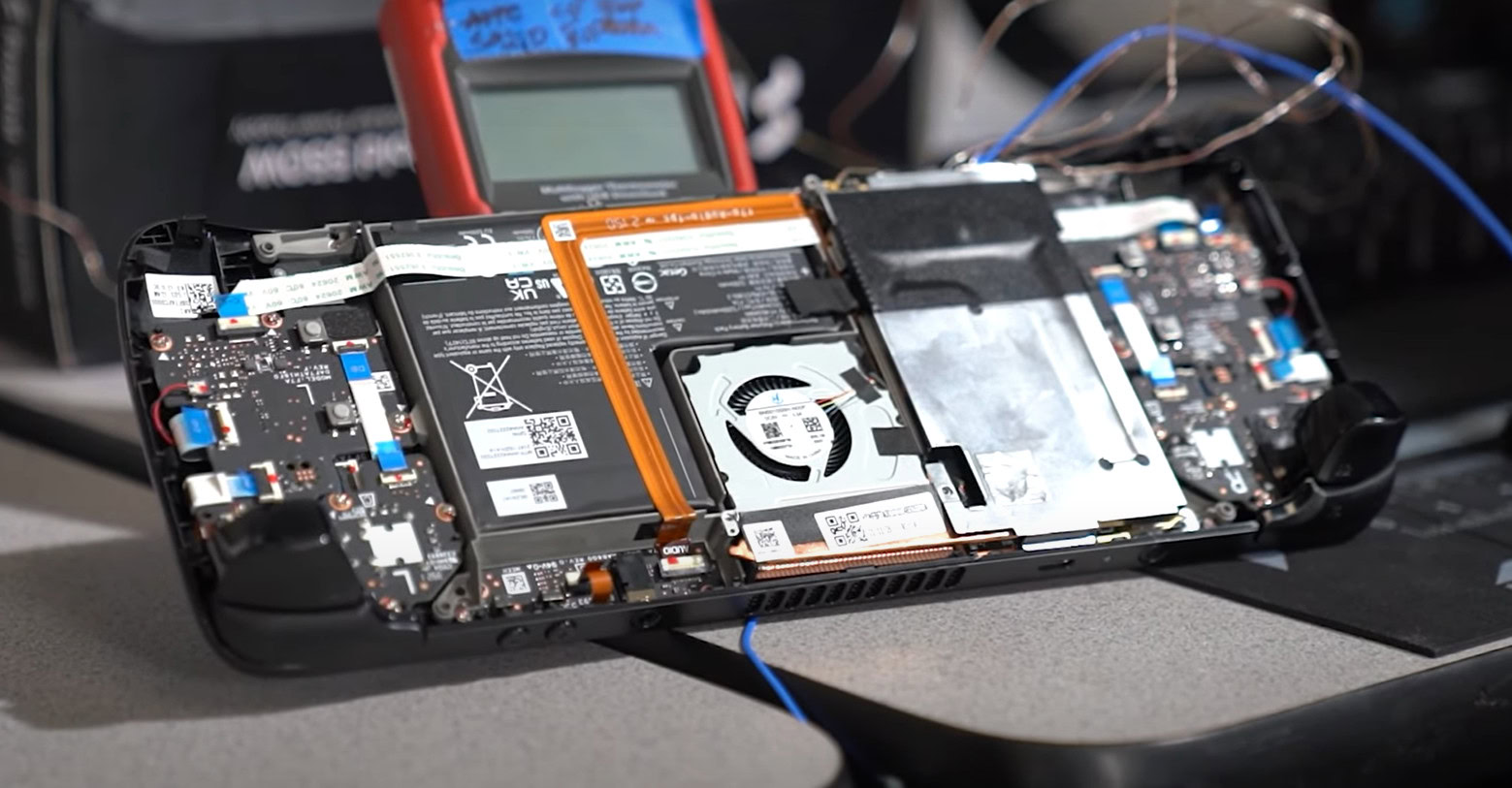

So to summarize, while the CPU and GPU both perform complex calculations quickly, there’s not a whole lot of overlap in terms of what each one can do efficiently. You could force a CPU to render videos or even play games, but chances are that it will be extremely slow. The reverse isn’t practical either: a GPU isn’t designed to run an operating system or the branch-heavy, general-purpose code that a CPU handles. That’s why virtually all modern computers include both. Some compact devices even combine the CPU and GPU in a single package known as an Accelerated Processing Unit (APU), AMD’s name for the design. For example, the PlayStation 5 and Steam Deck (pictured above) rely on powerful APUs that house both computational components.