Caltech sensor could turn your phone into a 3D scanner

3D printing technology is gradually becoming more affordable, but we're not all CAD experts, and a cheap 3D scanner to help produce our own objects remains elusive. Fortunately, Caltech researchers led by electrical engineer Ali Hajimiri are developing a new "nanophotonic coherent imager" (NCI) that may one day allow users to scan 3D images with just their smartphone.

The tiny NCI chip measures less than a square millimetre and is based on Light Detection and Ranging (LIDAR) technology, which beams a laser onto a subject and analyses the light waves reflected back. From this data, the sensor can provide accurate height, width and depth information for each pixel in the shot. Typically, image sensors are only interested in the light intensity of each pixel, which doesn’t offer any distance information.

Each “pixel” within the sensor is a LIDAR, allowing for multiple data points to analyse the phase, frequency and intensity of the reflected waves. Combining all the data together forms a detailed 3D image, which is apparently accurate to within microns of the original scene. Here’s the explanation of how it works:

If two light waves are coherent, the waves have the same frequency, and the peaks and troughs of light waves are exactly aligned with one another. In the NCI, the object is illuminated with this coherent light. The light that is reflected off of the object is then picked up by on-chip detectors, called grating couplers, that serve as “pixels,” as the light detected from each coupler represents one pixel on the 3-D image. On the NCI chip, the phase, frequency, and intensity of the reflected light from different points on the object is detected and used to determine the exact distance of the target point.
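To make the ranging step a little more concrete, here is a minimal Python sketch of how a coherent detector could turn a measured frequency difference into a distance. It assumes a linearly swept (frequency-modulated) laser, which the explanation above doesn't spell out, and every name and number in it (`beat_frequency_to_distance`, `depth_map`, the sweep bandwidth and duration) is hypothetical rather than taken from the Caltech chip.

```python
# Illustrative sketch only: assumes a linearly swept laser, where the
# reflected light lags the outgoing light and the frequency difference
# ("beat") between the two is proportional to the round-trip delay.

C = 299_792_458.0  # speed of light in metres per second


def beat_frequency_to_distance(beat_hz, sweep_bandwidth_hz, sweep_duration_s):
    """Convert a beat frequency into a one-way distance in metres."""
    sweep_rate = sweep_bandwidth_hz / sweep_duration_s  # Hz per second
    round_trip_delay = beat_hz / sweep_rate             # seconds
    return C * round_trip_delay / 2.0                   # halve: out and back


def depth_map(beat_grid, sweep_bandwidth_hz, sweep_duration_s):
    """Apply the conversion to every pixel in a grid of beat frequencies."""
    return [
        [beat_frequency_to_distance(f, sweep_bandwidth_hz, sweep_duration_s)
         for f in row]
        for row in beat_grid
    ]


if __name__ == "__main__":
    # Hypothetical 4 x 4 grid of beat frequencies in Hz, one per pixel.
    beats = [[1000.0 + 10.0 * (r * 4 + c) for c in range(4)] for r in range(4)]
    for row in depth_map(beats, sweep_bandwidth_hz=1e9, sweep_duration_s=1e-3):
        print(["%.6f m" % d for d in row])
```

Each pixel gets its own distance, which is the key difference from a conventional image sensor that only records brightness.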

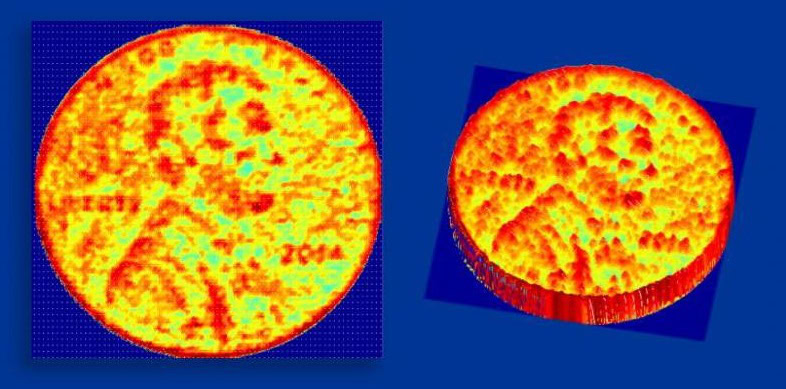

As promising as the technology is, the first proof-of-concept chip produced in the lab has only 16 LIDAR pixels in the sensor and is therefore only capable of capturing small image segments. The 3D coin image, pictured above, required moving the camera between shots, but the development team is working on scaling up the technology into a larger sensor. In the future, Hajimiri says, the current array of 16 pixels could easily be scaled up to hundreds of thousands of pixels, enough for a low-resolution camera.

LIDAR technology is already found in self-driving cars and robots and, thanks to this research, may one day make its way into our smartphones too.