How we test: The Android Authority testing methodology

Reviewing a product involves a blend of subjective analysis and objective data. For features like software, where personal preference plays a significant role, we provide detailed impressions to help readers gauge how well a device might suit their individual needs and tastes.

On the other hand, aspects like performance lend themselves to quantitative analysis. At Android Authority, we use a systematic approach to testing. By collecting concrete data on various performance metrics, we can draw comparisons based on hard evidence rather than opinion.

This methodical testing allows us to position a new smartphone in the broader context of the market. We measure how it stacks up not just against its immediate predecessor, but also against its current competitors. By tracking and analyzing these results, we ensure that our reviews provide a clear, unbiased picture of where each smartphone excels and where it may fall short.

Here’s a quick breakdown of our testing methodology and principles. Also read our How we review page for a broader look at what goes into reviews on Android Authority.

Benchmarking

Your phone’s performance is crucial, whether you’re multitasking throughout your day or playing high-end games. While it’s rare to find a poorly performing phone these days, knowing how your phone stacks up against the competition is increasingly important, especially when making a purchase you hope will last four to five years or longer.

We use three benchmark tests to evaluate performance:

- Geekbench 6 is a CPU test covering a broad range of workloads, including file compression, PDF rendering, machine learning, image editing, and ray tracing. It’s a good mix of real-world and CPU-intensive tasks. While most daily apps won’t push your CPU to its limits, a solid Geekbench 6 score indicates your phone can handle demanding workloads, gaming, and future tasks.

- PCMark’s Work 3.0 suite focuses on system performance, encompassing web rendering, document and media editing, and data manipulation using common Android APIs. RAM, storage class, and CPU/battery optimizations play a significant role here as well. If your phone performs well in this test, it will likely feel responsive across a wide range of use cases.

- 3DMark Wild Life stress test is a rigorous graphics test, pushing your phone as hard as, if not harder than, the latest high-end games. In addition to peak GPU performance, this test examines how the phone performs over a 20-minute session to assess frame rate stability. While more demanding than most games, it’s an excellent indicator for gamers interested in long sessions and future-proofing. We also run the more intensive Wild Life Extreme and Solar Bay ray tracing stress tests to gauge how devices handle cutting-edge graphics rendering techniques.
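To illustrate what a stability figure from a looping stress test can mean, here is a minimal Python sketch. It assumes stability is summarized as the lowest loop score expressed as a percentage of the best loop score; the function name and the sample scores are hypothetical and are not taken from our test rig.

```python
def stability_percent(loop_scores):
    """Return the lowest loop score as a percentage of the best loop score.

    Assumption: each entry is the score of one loop of a repeated
    graphics test, in run order. Values here are illustrative only.
    """
    if not loop_scores:
        raise ValueError("need at least one loop score")
    best = max(loop_scores)
    if best <= 0:
        raise ValueError("loop scores must be positive")
    return 100.0 * min(loop_scores) / best


# Hypothetical run: the score drops as the phone heats up and throttles.
scores = [5000, 4800, 4500, 4000]
print(f"Stability: {stability_percent(scores):.1f}%")
```

A phone that holds its score across every loop lands near 100%, while heavy thermal throttling pulls the figure down.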

Battery life

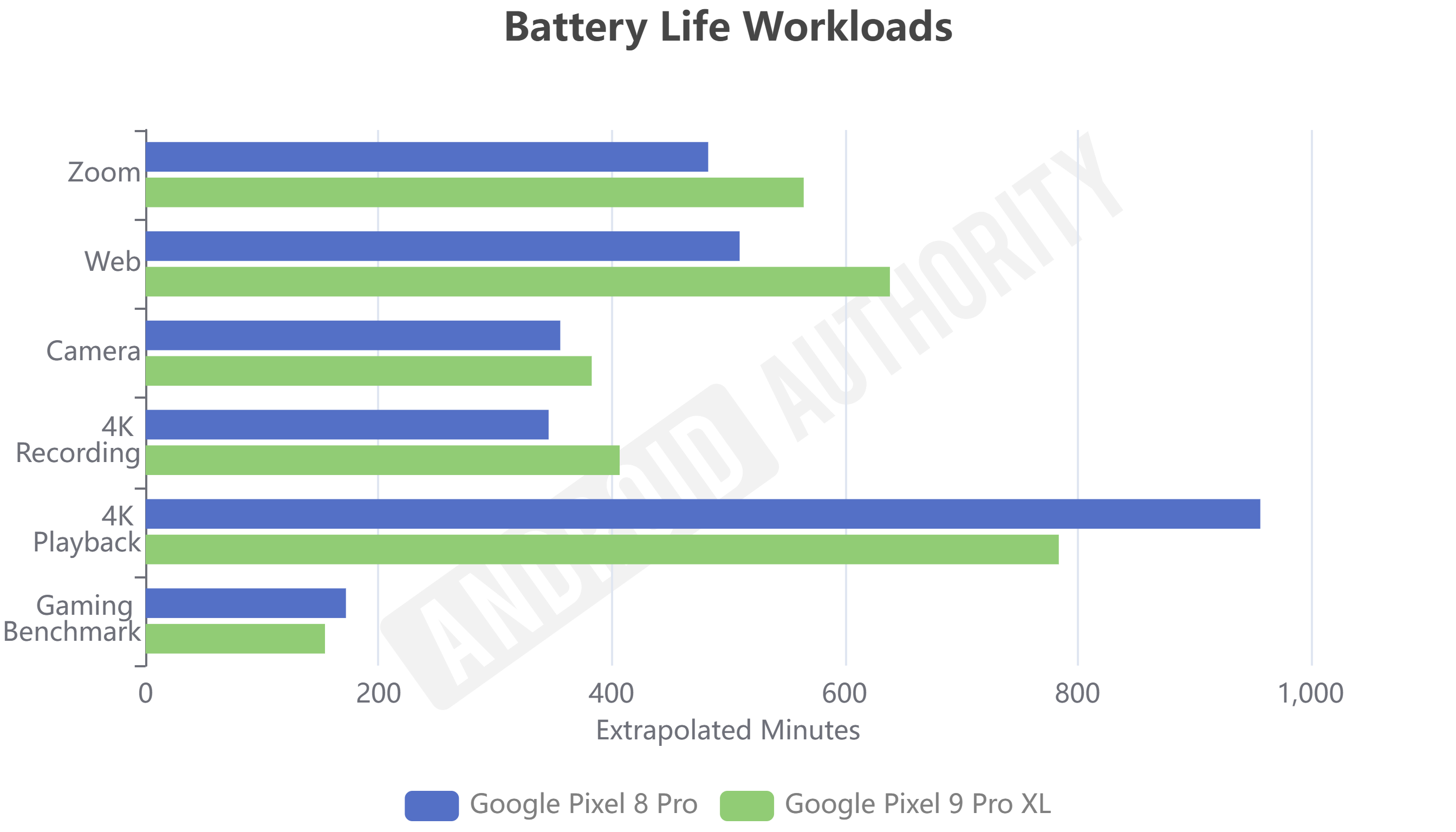

Everyone wants to know if their next phone will last all day, so we run five battery tests covering the most common daily use cases: web browsing, video playback, camera capture, video recording, and video calls. We also include battery data from our graphics benchmarking session.

Our battery tests are fully automated to ensure repeatable and reliable results across devices. Each test runs down the battery by 7% and then extrapolates the maximum number of hours the phone can handle each task. For the graphics benchmark, we calculate total runtime from the battery drain recorded during our 20-minute stress test.
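The extrapolation step can be sketched in a few lines of Python. This is a simplified illustration that assumes the battery drains linearly, so the time taken to lose a known percentage scales directly to a full charge; the function name and the example timings are hypothetical.

```python
def extrapolate_runtime_hours(elapsed_minutes, percent_drained):
    """Estimate full-battery runtime from a partial drain.

    Assumption: discharge is linear, so the time taken to drain
    `percent_drained` percent scales directly to 100 percent.
    """
    if percent_drained <= 0:
        raise ValueError("percent_drained must be positive")
    total_minutes = elapsed_minutes * 100.0 / percent_drained
    return total_minutes / 60.0


# Hypothetical example: a task takes 42 minutes to drain 7% of the battery.
print(extrapolate_runtime_hours(42, 7))  # 10.0 hours

# The same idea applied to a 20-minute stress test that drained 5%.
print(round(extrapolate_runtime_hours(20, 5), 2))  # 6.67 hours
```

Real batteries do not discharge perfectly linearly, so a figure like this is best read as an estimate for comparing devices rather than a guarantee.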

Before testing battery life, displays are calibrated to a standard 300 nits, which simulates typical indoor and outdoor brightness levels, providing a balanced measure rather than a best- or worst-case scenario.

Charging

Even if a phone has excellent battery life, you’ll need to charge it eventually. Knowing how long it takes to recharge and which chargers are optimal is vital in today’s landscape of varied charging standards, cables, and power levels.

Our test involves tracking the battery percentage over a full charge cycle, capturing power delivered to the handset and monitoring internal battery temperatures as it charges to full. Using this data, we calculate key milestones, such as the time to reach 50% battery, to give an idea of how quickly you can resume using the phone even if you can’t wait for a full charge.
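As a rough illustration of how a milestone like time to 50% can be derived from a charging log, the Python sketch below interpolates linearly between the two samples on either side of the target. The log format, function name, and sample values are assumptions made for the example.

```python
def time_to_percent(samples, target):
    """Return the minutes needed to reach `target` percent, or None.

    `samples` is a list of (minutes, battery_percent) pairs sorted by
    time. Linear interpolation between neighboring samples is an
    assumption of this sketch.
    """
    prev_t, prev_p = samples[0]
    if prev_p >= target:
        return prev_t
    for t, p in samples[1:]:
        if p >= target:
            # prev_p < target <= p here, so the divisor is never zero.
            fraction = (target - prev_p) / (p - prev_p)
            return prev_t + fraction * (t - prev_t)
        prev_t, prev_p = t, p
    return None  # the log never reached the target


# Hypothetical charging log: (minutes elapsed, battery percent).
log = [(0, 0), (10, 30), (20, 60), (45, 100)]
print(round(time_to_percent(log, 50), 1))  # 16.7
print(time_to_percent(log, 100))           # 45
```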

We also monitor power levels at the phone’s USB port throughout the charging cycle to verify brands’ power claims. Additionally, we confirm the protocol used for third-party compatibility and record internal battery temperatures to assess whether fast charging affects long-term battery health.
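Checking a power claim comes down to multiplying voltage by current at each reading. The Python sketch below shows one way to summarize such readings, assuming evenly spaced samples from a USB power meter; the function name, the readings, and the advertised figure are all hypothetical.

```python
def power_summary(readings):
    """Return (peak_watts, average_watts) for (volts, amps) readings.

    Assumption: readings are evenly spaced in time, so a plain mean
    is a fair average over the charging session.
    """
    if not readings:
        raise ValueError("need at least one reading")
    watts = [volts * amps for volts, amps in readings]
    return max(watts), sum(watts) / len(watts)


# Hypothetical readings taken at the USB port: (volts, amps).
readings = [(9.0, 3.0), (9.0, 2.0), (5.0, 1.0)]
peak, average = power_summary(readings)

advertised_watts = 30.0  # hypothetical manufacturer claim
print(f"Peak {peak:.1f} W, average {average:.1f} W")
print(f"Peak is {100.0 * peak / advertised_watts:.0f}% of the advertised figure")
```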